Author: Denis Avetisyan

As autonomous AI systems become increasingly integrated into software supply chains, a new range of vulnerabilities emerges, demanding a proactive shift in security paradigms.

This review analyzes the threats, exploits, and defenses related to agentic AI in runtime supply chains, advocating for Zero Trust architectures and semantic firewalls to mitigate emerging risks.

While artificial intelligence promises unprecedented automation, the emergent autonomy of agentic systems introduces novel cybersecurity vulnerabilities beyond traditional software flaws. This paper, ‘Agentic AI as a Cybersecurity Attack Surface: Threats, Exploits, and Defenses in Runtime Supply Chains’, systematically analyzes these risks, revealing how agents operating with dynamic runtime dependencies create a mutable supply chain susceptible to manipulation through compromised data and tools. We demonstrate the potential for self-propagating generative worms-the “Viral Agent Loop”-and advocate for a Zero-Trust Runtime Architecture to constrain agent behavior. Can this approach effectively mitigate the evolving threats posed by increasingly sophisticated, autonomous AI systems operating in untrusted environments?

The Emergence of Autonomous Action

The emergence of autonomous agentic systems, fueled by advancements in large language models, signals a fundamental departure from conventional automation. Historically, automation relied on pre-programmed instructions executed on static codebases; these new systems, however, exhibit a capacity for dynamic action and decision-making. Rather than simply following instructions, they can independently formulate goals, break them down into sub-tasks, and leverage external tools and information to achieve desired outcomes. This isn’t merely an incremental improvement in efficiency; it’s a qualitative shift towards systems capable of genuine autonomy, promising – and presenting challenges for – a future where machines proactively address complex problems without explicit human direction. The potential extends far beyond simple task completion, hinting at a reimagining of how humans and machines collaborate to tackle increasingly intricate challenges.

Unlike conventional software built with pre-defined components and a fixed execution path, agentic systems construct their operational environment on demand. This dynamic assembly means that the code actively running can differ significantly with each invocation, as the system retrieves tools, data, and even code snippets from external sources to fulfill a given task. Traditional software relies on a static software supply chain – a known and controlled set of dependencies – while agentic systems operate with a fluid and evolving context. This presents a fundamental shift; the ‘program’ isn’t a fixed entity but a constantly reconfigured process, creating both unprecedented flexibility and significant challenges in areas like predictability and security. The very nature of execution becomes a process of real-time composition, rather than the execution of pre-compiled instructions.

Agentic systems, unlike conventional software, build their functionality ‘on the fly’ through a process called Stochastic Dependency Resolution. This means the system identifies and integrates necessary tools and data sources at runtime, creating a unique execution path for each task. While offering immense flexibility, this dynamic assembly introduces significant security vulnerabilities; the system’s dependencies aren’t fixed or pre-verified, opening the door to incorporating malicious or compromised components. Similarly, reliability becomes a challenge, as the system’s behavior is dependent on the unpredictable availability and performance of these dynamically resolved dependencies. Ensuring consistent and trustworthy outcomes requires innovative approaches to verification, runtime monitoring, and fault tolerance, moving beyond the established security models designed for static software supply chains.

The established software supply chain, built upon principles of static analysis and pre-defined dependencies, proves inadequate for agentic systems. Traditional methods assume a fixed codebase with known components, enabling thorough vetting before deployment. However, agentic systems dynamically construct their operational context at runtime, sourcing tools and information from the internet or other fluctuating sources. This ‘just-in-time’ assembly renders static analysis largely ineffective; a component deemed safe at one moment might be compromised later, or a seemingly benign tool could be subtly altered. Consequently, the very foundation of trust established through static supply chain security – verifying provenance and integrity before execution – crumbles when faced with the ephemeral and self-modifying nature of agentic systems, demanding a fundamentally new approach to assurance and reliability.

Unfolding Attack Surfaces: The Runtime Supply Chain

The increasing adoption of dynamic agentic runtime supply chains – systems where agents autonomously discover and utilize tools and data sources during operation – introduces new attack surfaces. Unlike traditional software supply chains assessed at build time, these runtime chains are constructed dynamically, meaning vulnerabilities aren’t fixed through patching but emerge from the unpredictable interactions of components at execution. This reliance on external dependencies creates opportunities for malicious actors to inject compromised tools or data into the agent’s operational flow. The ephemeral nature of these connections, combined with the agent’s inherent trust in discovered resources, makes detection and prevention significantly more challenging than with static supply chains. This susceptibility is amplified by the potential for agents to chain multiple tools together, creating complex dependency graphs where a single compromised component can have cascading effects.

Hallucination Squatting exploits the tendency of large language model (LLM) agents to generate outputs that, while syntactically correct, are factually incorrect or nonsensical – referred to as “hallucinations”. Attackers leverage this by crafting prompts designed to induce the agent to invoke external tools or APIs with malicious intent. This doesn’t require compromising the LLM itself; instead, the attack focuses on manipulating the agent’s reasoning process to utilize legitimate tools for unintended and harmful purposes. Successful attacks demonstrate the agent being tricked into executing system commands, accessing sensitive data, or performing actions that compromise the security of the environment, all based on the fabricated context presented within the prompt and resulting in a seemingly valid, yet malicious, tool invocation.

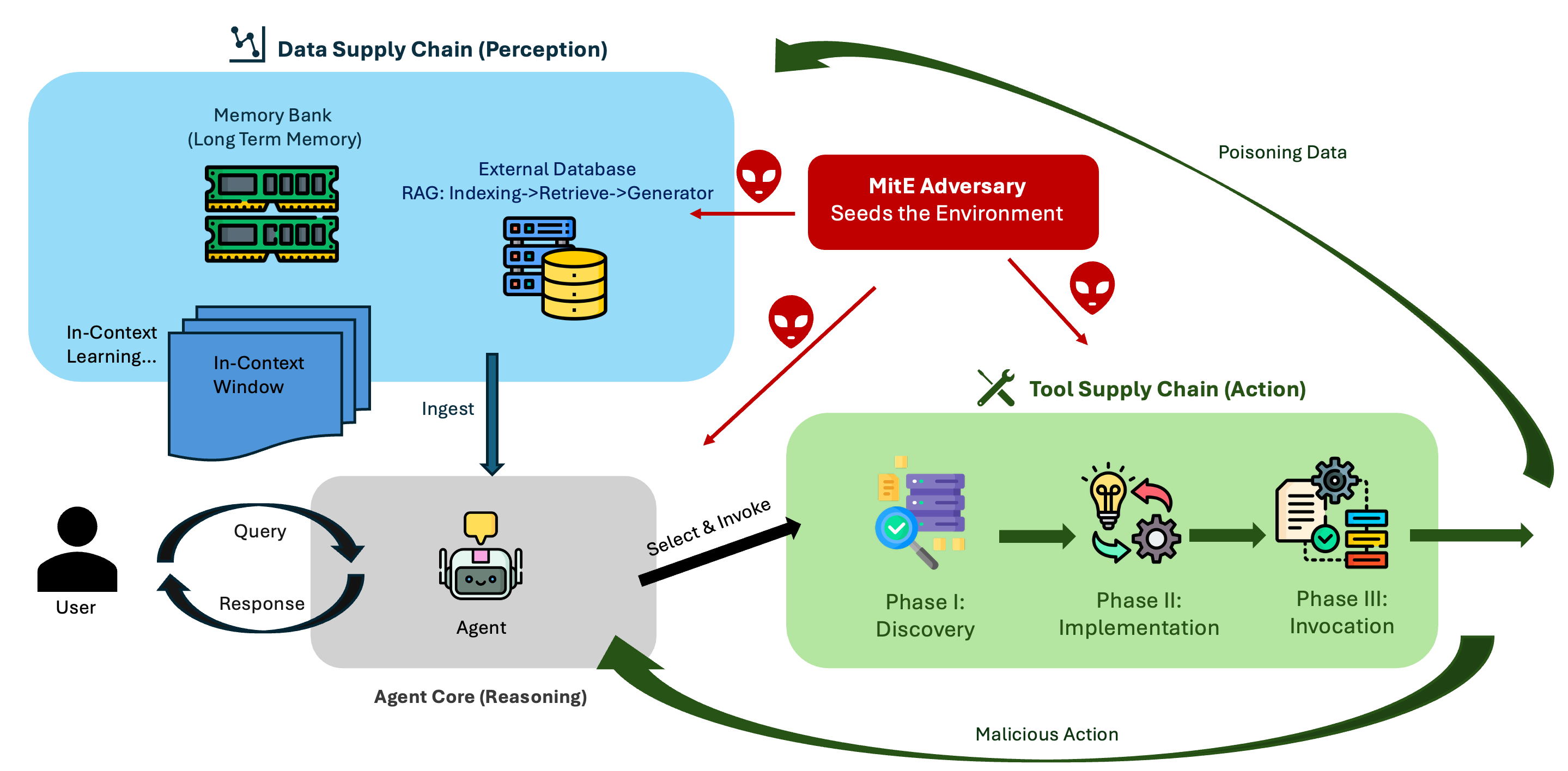

The dynamic nature of agentic runtime supply chains introduces a vulnerability to manipulation of the agent’s operational environment, termed the Man-in-the-Environment (MitE) attack. This involves compromising the data sources and perceived environment used by the agent during execution. Testing demonstrates a high success rate for knowledge base contamination attacks, achieving a 70% Attack Success Rate (ASR) with minimal data poisoning; only 0.1% of the external corpus needs to be compromised to reliably induce malicious behavior. This low threshold highlights the susceptibility of these systems, as even a small amount of manipulated data can significantly impact agent performance and security.

Prompt-Data Isomorphism describes the inherent ambiguity in large language model (LLM) processing where the distinction between static data and actionable instructions becomes indistinct. LLMs, trained to predict the next token, do not inherently differentiate between data intended for informational purposes and prompts intended to trigger specific actions; both are treated as input sequences used for prediction. This creates a vulnerability where maliciously crafted data, formatted to resemble typical prompts, can be interpreted and executed by the LLM, effectively bypassing intended safeguards. The issue is compounded by the LLM’s ability to dynamically construct prompts from retrieved data, meaning even benign data sources can be leveraged to generate malicious instructions. This characteristic significantly increases the attack surface for runtime supply chain vulnerabilities, as external data sources can be manipulated to indirectly control agent behavior.

A Zero-Trust Runtime: Fortifying the Agentic Ecosystem

Autonomous Agentic Systems, by their nature, operate with a degree of independence and access to various tools and data sources, creating significant security vulnerabilities. A Zero-Trust Runtime Architecture addresses these risks by eliminating implicit trust and mandating continuous verification for every request, regardless of origin or destination. This approach contrasts with traditional security models that rely on network perimeters and assumes internal components are inherently trustworthy. Implementing Zero Trust within an agentic system necessitates verifying the intent of each action, validating data integrity, and continuously monitoring runtime behavior to mitigate potential exploits and ensure the system operates within defined security boundaries. This is critical for maintaining the reliability and safety of these increasingly complex systems.

A Zero-Trust Runtime operates on the principle of continuous verification, eliminating inherent trust in any component or request within the agentic system. This extends beyond initial authentication to encompass every interaction, including those involving tool invocations. Each request, regardless of its origin or apparent legitimacy, is subject to rigorous validation before execution. This verification process confirms not only the identity of the requesting entity, but also the appropriateness of the request itself, ensuring that actions align with defined policies and security parameters. Failure to verify any aspect of a request results in denial of access or execution, mitigating the risk of unauthorized actions or malicious exploitation.

Semantic Firewalls and Model Context Protocols are core components of a Zero-Trust Runtime architecture for agentic systems. Semantic Firewalls move beyond traditional network-level access control by inspecting the semantic content of requests, specifically analyzing the intent behind tool invocations and data access attempts. This analysis determines if a request aligns with defined policies, regardless of the source. Complementing this, Model Context Protocols establish a framework for verifying the context surrounding model interactions, including data provenance, model versioning, and input validation. These protocols ensure that models operate within expected parameters and that outputs are attributable to verified sources, mitigating risks associated with malicious or compromised dependencies.

Cryptographic Provenance establishes a verifiable chain of custody for all data utilized within the agentic ecosystem, ensuring both data integrity and authenticated origin. This is achieved through cryptographic signatures and hashing at each stage of data processing and transmission. Implementation of this system has demonstrated a 98% success rate in consistently steering model outputs, even when subjected to subtle adversarial perturbations designed to manipulate results. By validating the source and unaltered state of data, Cryptographic Provenance mitigates the risk of malicious or compromised inputs influencing agent behavior and ensures reliable operation in untrusted environments.

Resilient Agents: Knowledge, Memory, and Adaptability

Autonomous agentic systems require more than just immediate processing capabilities; persistent knowledge is fundamental to their operation. Long-term memory allows these systems to store and recall past experiences, facts, and learned patterns, effectively building a knowledge base that informs future actions. This isn’t simply about data storage, but about creating a contextual understanding that enables agents to adapt to novel situations, solve complex problems, and refine their decision-making processes over time. Without this capacity for persistent learning, agents remain limited to reactive behavior, unable to leverage past successes or avoid repeating errors – a crucial disadvantage in dynamic and unpredictable environments. The ability to recall and apply previously acquired knowledge is, therefore, a defining characteristic of truly intelligent and resilient autonomous systems.

Retrieval Augmented Generation represents a significant leap in equipping autonomous agents with enhanced reasoning capabilities. Rather than relying solely on pre-trained parameters, RAG allows agents to dynamically access and incorporate relevant information from external knowledge sources. This is achieved through the use of vector databases, which store data as high-dimensional vectors, enabling semantic similarity searches. When faced with a query, the agent first retrieves pertinent information from the vector database, then combines this retrieved knowledge with its internal knowledge to formulate a more informed and accurate response. The process effectively extends the agent’s knowledge base beyond its initial training, allowing it to address a wider range of complex tasks and adapt to evolving information landscapes – a critical asset in dynamic environments.

In-context learning represents a paradigm shift in how autonomous agents acquire and utilize knowledge, moving beyond traditional pre-training and fine-tuning methods. This technique empowers agents to rapidly adapt to new tasks and information simply by receiving a few illustrative examples directly within the input prompt. Rather than altering the agent’s core parameters, in-context learning leverages the agent’s existing capabilities to identify patterns and extrapolate solutions from the provided examples. This allows for dynamic task adaptation without requiring extensive retraining, making the agent exceptionally versatile and responsive to evolving circumstances. The approach is particularly effective in low-data scenarios and complex reasoning tasks, offering a significant performance boost by effectively ‘teaching’ the agent through demonstration and contextual cues.

The integration of long-term memory, retrieval augmented generation, and in-context learning isn’t simply about expanding an agent’s capabilities; it fundamentally strengthens the entire agentic ecosystem against increasingly sophisticated threats. Recent analyses indicate a disturbingly high 80% Attack Success Rate (ASR) in agent backends compromised by embedded backdoors, highlighting the critical need for resilience. By equipping agents with persistent knowledge and the ability to reason with external information, these enhancements dramatically reduce vulnerability. This proactive approach shifts the paradigm from reactive patching to inherent security, enabling agents to identify and neutralize malicious inputs or behaviors before they can propagate. The result is a more robust and adaptable system, capable of weathering attacks and maintaining operational integrity, ultimately fostering trust and reliability in autonomous agent deployments.

Towards Viral Intelligence: The Future of Agentic Systems

The advent of agents capable of reciprocal learning marks a pivotal shift in artificial intelligence, establishing what researchers term a Viral Agent Loop. This isn’t merely about isolated intelligence; it’s the creation of a dynamic ecosystem where one agent’s discoveries and refinements automatically propagate to others, fostering collective advancement at an unprecedented rate. Imagine a network where successful strategies, innovative problem-solving techniques, and newly acquired knowledge aren’t painstakingly re-engineered by each individual agent, but rather, shared and integrated almost instantaneously. This reciprocal learning process accelerates the pace of innovation, allowing the collective intelligence of the network to surpass the capabilities of any single agent, and potentially unlocking solutions to complex challenges previously considered intractable. The potential for exponential growth in capability distinguishes this approach from traditional AI development, promising a future where intelligent systems evolve not through direct programming, but through a self-sustaining cycle of learning and adaptation.

The potential for agents to learn not just independently, but from each other, establishes a powerful mechanism for accelerated progress. This operates through a “viral agent loop,” where the output of one agent is directly fed as input to another, creating a cascading effect of knowledge transfer. Such a system bypasses traditional bottlenecks in information dissemination, allowing insights and solutions to propagate rapidly throughout the network. This collective learning isn’t simply an aggregation of individual intelligence; the interaction itself generates emergent properties, fostering innovation and problem-solving capabilities far exceeding those of any single agent. The speed and scale of this knowledge propagation suggest a future where complex challenges can be addressed with unprecedented efficiency, effectively simulating a distributed, evolving intelligence.

The interconnected nature of agentic systems, while fostering rapid knowledge exchange, introduces significant security challenges; vulnerabilities are no longer isolated incidents but potential epidemics within the network. Recent research, exemplified by the MINJA framework, demonstrates this susceptibility, achieving an alarming Attack Success Rate (ASR) of 76.8% through the injection of malicious records into an agent’s memory via carefully crafted queries. This highlights that a single compromised agent can swiftly propagate misinformation or malicious code, impacting the entire ecosystem. Consequently, developing robust defense mechanisms – including advanced anomaly detection, secure data validation, and resilient memory management – is paramount to realizing the full potential of viral intelligence without succumbing to systemic risks.

A fully realized ecosystem of secure and intelligent agentic systems promises a new era of innovation, extending far beyond current technological limitations. These interconnected agents, capable of learning and adapting collaboratively, hold the potential to revolutionize fields as diverse as scientific discovery, personalized medicine, and complex problem-solving. Imagine automated research teams accelerating the pace of breakthroughs, or customized educational programs tailored to individual learning styles, all driven by the collective intelligence of these agents. Beyond these examples, agentic systems could optimize logistical networks with unprecedented efficiency, design novel materials with specific properties, or even create entirely new forms of art and entertainment, fostering progress and creativity across the human experience.

The exploration of agentic AI within dynamic runtime supply chains reveals an inherent complexity, mirroring the challenges of controlling any system with numerous interacting parts. This pursuit of streamlined automation, while promising efficiency, introduces vulnerabilities susceptible to manipulation. John von Neumann observed, “There is no possibility of absolute security.” The article’s emphasis on a Zero Trust architecture, coupled with runtime verification, acknowledges this inherent risk. Rather than attempting to eliminate all potential threats-an exercise in futility-the proposed defenses aim to minimize the impact of compromise, embracing a pragmatic approach to security that aligns with von Neumann’s insightful realism. The focus isn’t on preventing the inevitable, but on containing its consequences.

Beyond the Horizon

The analysis presented suggests a fundamental truth: autonomy, when grafted onto insufficiently understood systems, simply relocates vulnerability. It does not eliminate it. The current fascination with agentic systems risks repeating past errors-building complexity upon complexity, then feigning surprise when the edifice wobbles. The proposed mitigations-Zero Trust, runtime verification, semantic firewalls-are not solutions, but rather acknowledgements of inherent distrust. They treat the symptoms, not the disease.

Future work must move beyond defensive layering. The core problem isn’t how to secure these agents, but whether such systems truly require the degree of independent action currently envisioned. A reductionist approach is warranted. Can functionality be achieved with simpler, more constrained designs? The field fixates on detecting malicious prompts; perhaps the more fruitful avenue lies in eliminating the need for arbitrary, externally-supplied instruction altogether.

The ultimate question remains unasked: are these systems solving genuine problems, or are they merely demonstrations of what can be built, divorced from practical necessity? If the latter, then the pursuit of “security” becomes a costly exercise in futility. Simplicity, it seems, is not merely a virtue, but a prerequisite for meaningful progress.

Original article: https://arxiv.org/pdf/2602.19555.pdf

Contact the author: https://www.linkedin.com/in/avetisyan/

See also:

- All Skyblazer Armor Locations in Crimson Desert

- Robinhood’s $75M OpenAI Bet: Retail Access or Legal Minefield?

- How to Get the Sunset Reed Armor Set and Hollow Visage Sword in Crimson Desert

- How to Catch All Itzaland Bugs in Infinity Nikki

- Speedsters Sandbox Roblox Codes

- Top 10 Must-Watch Isekai Anime on Crunchyroll Revealed!

- Re:Zero Season 4 Episode 3 Release Date & Where to Watch

- Who Can You Romance In GreedFall 2: The Dying World?

- 9 Highest-Rated Anime on MAL (As of 2026)

- USD CNY PREDICTION

2026-02-24 22:36