Author: Denis Avetisyan

A new perspective on distributed security argues for integrated architectures that move beyond treating core properties like privacy and accountability as separate concerns.

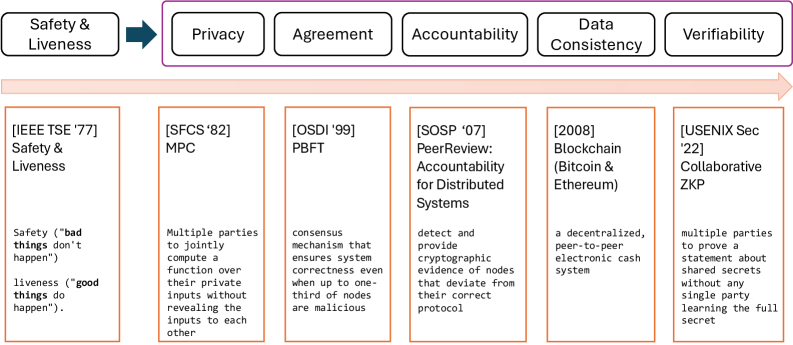

This review explores the evolution of distributed security, advocating for synergistic architectures that combine agreement, consistency, privacy, verifiability, and accountability.

Historically, distributed security has prioritized individual properties in isolation, yet increasingly complex systems demand more holistic defenses. This paper, ‘Distributed Security: From Isolated Properties to Synergistic Trust’, examines the evolution of this field, arguing that future progress hinges on combining foundational properties (agreement, consistency, privacy, verifiability, and accountability) into synergistic architectures. We demonstrate that fusing these elements unlocks capabilities exceeding those achievable through isolated improvements, offering a path toward a unified fabric of trust. Can we systematically discover and harness these synergies to build truly resilient and adaptable distributed systems for emerging applications?

The Erosion of Trust in Centralized Systems

Historically, most systems requiring coordination and exchange have depended on centralized trust – a reliance on intermediaries like banks, governments, or corporations to validate transactions and maintain records. This architecture, while seemingly efficient, introduces inherent vulnerabilities; these central authorities become single points of failure, susceptible to corruption, manipulation, or technical breaches. The concentration of control also allows for censorship and arbitrary decision-making, potentially disadvantaging participants or stifling innovation. Consequently, individuals and organizations must place considerable faith in these institutions, accepting the risk that their interests may not always be aligned with those of the central authority – a precarious position in an increasingly interconnected world.

Blockchain technology presents a paradigm shift in how trust is established and maintained, offering the potential to construct systems independent of traditional intermediaries. Transactions are recorded in a distributed, append-only ledger and verified across a network of computers, removing the need for a central authority like a bank or clearinghouse. The ledger is tamper-evident rather than literally unchangeable: each block commits to its predecessor, so rewriting recorded history generally requires controlling a majority of the network’s mining power or stake, which is prohibitively expensive on large, well-distributed networks. Consequently, blockchain facilitates peer-to-peer interactions with reduced risk of fraud or censorship, enabling applications ranging from secure supply chain management and digital identity verification to decentralized finance and voting systems, all operating on cryptographic proof rather than reliance on a trusted third party.

Consensus as a Foundation: Achieving Distributed Agreement

Consensus protocols are fundamental to the operation of distributed systems, addressing the challenge of achieving agreement on a single state of data across multiple nodes, even in the presence of node failures or network partitions. These algorithms ensure data consistency and reliability by defining a process for proposing, validating, and committing changes to a replicated state. The core function is to guarantee that if a majority of nodes can successfully communicate, they will converge on the same data value, preventing conflicting updates and maintaining system integrity. This is achieved through mechanisms like leader election, proposal replication, and quorum-based voting, allowing the system to continue functioning correctly despite individual component failures.

Early consensus protocols, most prominently Paxos, demonstrated that distributed agreement was achievable in theory but presented significant practical challenges. The difficulty of Paxos stems less from its core two-phase exchange (prepare/promise, then accept/accepted) than from everything around it: extending single-value agreement to a replicated log, choosing a leader, and handling the many edge cases without violating safety or falling into livelock. This intricacy resulted in a steep learning curve for developers and made reliable implementation and verification difficult, despite the protocol’s foundational importance in the field of distributed systems. Implementations often required extensive formal verification or testing before their correctness could be trusted, increasing development time and cost.

Raft distinguishes itself from earlier consensus algorithms such as Paxos by prioritizing understandability and implementability. It pursues the same goal, ensuring that all nodes in a system agree on a single, consistent state, through a design that decomposes the problem into leader election, log replication, and safety. This decomposition simplifies the overall algorithm and makes it easier to debug and verify. Specifically, Raft uses a strong leader model: all log entries are appended to the leader’s log and then replicated to followers, and an entry is considered committed once the leader has replicated it on a majority of nodes. This streamlines the protocol compared with designs that allow multiple concurrent proposers or more complex voting schemes. The focus on clarity has led to numerous successful implementations and wide adoption within the distributed systems community.
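The majority rule behind leader-based log replication can be made concrete with a small sketch. The code below is an illustrative simplification rather than a full Raft implementation: the function names `majority` and `commit_index` and their parameters are hypothetical, and leader election, RPCs, and persistence are omitted. It assumes the leader tracks, for each node including itself, the highest log index known to be stored there, and it only commits entries from the leader's current term, as Raft's commitment rule requires.

```python
from typing import List


def majority(n: int) -> int:
    """Smallest number of nodes that forms a majority of an n-node cluster."""
    return n // 2 + 1


def commit_index(match_index: List[int], log_terms: List[int],
                 current_term: int, prev_commit: int) -> int:
    """Return the highest log index the leader may mark as committed.

    match_index  -- highest replicated log index per node (leader included)
    log_terms    -- term of each entry in the leader's log; entry i (1-based)
                    has term log_terms[i - 1]
    current_term -- the leader's current term
    prev_commit  -- the commit index before this call
    """
    n = len(match_index)
    # After sorting in descending order, the value at position majority - 1
    # is an index that at least a majority of nodes have stored.
    candidate = sorted(match_index, reverse=True)[majority(n) - 1]
    # Only an entry from the current term may be committed by counting
    # replicas; earlier entries become committed along with it.
    for idx in range(candidate, prev_commit, -1):
        if log_terms[idx - 1] == current_term:
            return idx
    return prev_commit
```

For a five-node cluster with `match_index = [5, 5, 3, 2, 1]`, terms `[1, 1, 2, 2, 2]`, and a current term of 2, the function returns 3: entry 3 is the highest entry present on three of the five nodes.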

Beyond Simple Failures: Robustness Against Malice

Crash fault tolerance traditionally focuses on system resilience when individual components fail by ceasing operation. However, practical distributed systems are vulnerable to more complex failure modes beyond simple cessation. These “insidious threats” include scenarios where components continue to operate but produce incorrect results, exhibit unpredictable behavior, or actively attempt to compromise system integrity. Such failures may stem from software bugs, hardware errors that don’t immediately halt operation, or, critically, deliberate malicious actions by compromised or adversarial nodes within the system. Addressing these more subtle failure types requires fault tolerance mechanisms that go beyond simply detecting and replacing unresponsive components, necessitating techniques capable of identifying and mitigating the effects of incorrect or malicious outputs.

Byzantine fault tolerance (BFT) addresses the most challenging failure scenarios in distributed systems by assuming that components can not only fail but also actively attempt to mislead or corrupt the system. Unlike crash fault tolerance, which focuses on component cessation, BFT protocols ensure correct operation even when a subset of nodes sends conflicting information or deliberately provides incorrect results. This is achieved through mechanisms like redundant execution, voting, and cryptographic verification, enabling the system to reach consensus despite the presence of malicious actors. BFT is crucial in environments where security is paramount and the trustworthiness of all components cannot be guaranteed, such as blockchain networks and safety-critical control systems.
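The voting mechanisms mentioned above rest on simple arithmetic. The classical bound, which is not stated in the article but is standard for protocols such as PBFT, is that tolerating `f` Byzantine replicas requires at least `3f + 1` replicas in total. The sketch below computes that bound and a quorum size large enough that any two quorums share at least one correct replica; the function names are hypothetical.

```python
def min_replicas(f: int) -> int:
    """Minimum cluster size needed to tolerate f Byzantine replicas."""
    return 3 * f + 1


def max_faulty(n: int) -> int:
    """Largest number of Byzantine replicas an n-replica cluster tolerates."""
    return (n - 1) // 3


def bft_quorum(n: int) -> int:
    """Quorum size such that any two quorums overlap in at least f + 1
    replicas, so at least one replica in the overlap is correct."""
    f = max_faulty(n)
    return (n + f) // 2 + 1
```

With four replicas the system tolerates one Byzantine fault and needs matching votes from three replicas. The quorum never exceeds `n - f`, so the correct replicas alone can always assemble one and progress does not depend on faulty nodes responding.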

Robust fault tolerance is a necessary characteristic of dependable distributed systems because it addresses a wider range of potential failures than crash fault tolerance alone. While crash fault tolerance assumes components fail by simply halting, real-world systems are vulnerable to more complex failures, including Byzantine failures where components continue operating but return incorrect or malicious results. Systems designed for robust fault tolerance must therefore incorporate mechanisms to detect and mitigate both types of failures, ensuring continued operation and data integrity even in the presence of compromised or malfunctioning components. This necessitates redundancy, validation, and consensus algorithms capable of handling arbitrary failures, increasing system complexity but providing a significantly higher level of assurance for critical applications.

The Dawn of Decentralization: Applications and Implications

Prior to 2009, the concept of digital currency existed, but a secure and decentralized implementation remained elusive. Bitcoin’s groundbreaking innovation lay in its successful deployment of blockchain technology – a distributed, immutable ledger – coupled with a novel consensus protocol known as Proof-of-Work. This combination eliminated the need for a central authority, like a bank, to validate transactions. Instead, a network of participants independently verified and recorded transactions, ensuring both security and transparency. By cryptographically linking blocks of transaction data, Bitcoin created a tamper-proof record, effectively demonstrating the feasibility of a peer-to-peer electronic cash system operating without the vulnerabilities inherent in traditional, centralized financial institutions. This initial success laid the foundation for the burgeoning field of cryptocurrency and, more broadly, decentralized technologies.
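A toy model helps show how cryptographic linking and Proof-of-Work combine to make a ledger tamper-evident. The sketch below is a deliberate simplification and not Bitcoin's actual block format: it uses a single SHA-256 over a JSON encoding and a "leading hex zeros" difficulty rule, and the names `mine`, `valid_chain`, `DIFFICULTY`, and `GENESIS` are assumptions made for illustration.

```python
import hashlib
import json
from typing import Dict, List

DIFFICULTY = 3        # required leading hex zeros; a toy value
GENESIS = "0" * 64    # placeholder "previous hash" for the first block


def block_hash(block: Dict) -> str:
    """Hash a block's canonical JSON encoding with SHA-256."""
    payload = json.dumps(block, sort_keys=True).encode()
    return hashlib.sha256(payload).hexdigest()


def mine(prev_hash: str, data: str) -> Dict:
    """Search for a nonce that makes the block hash meet the difficulty."""
    nonce = 0
    while True:
        block = {"prev": prev_hash, "data": data, "nonce": nonce}
        if block_hash(block).startswith("0" * DIFFICULTY):
            return block
        nonce += 1


def valid_chain(chain: List[Dict]) -> bool:
    """Check that every block links to its predecessor and meets the difficulty."""
    prev = GENESIS
    for block in chain:
        if block["prev"] != prev:
            return False
        digest = block_hash(block)
        if not digest.startswith("0" * DIFFICULTY):
            return False
        prev = digest
    return True
```

Changing the data in any block changes its hash, which breaks the `prev` link of every later block. An attacker would have to redo the Proof-of-Work for the altered block and all of its successors, which is what makes rewriting history expensive.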

Ethereum built upon the foundation laid by Bitcoin by introducing smart contracts – self-executing agreements written into code and stored on the blockchain. This innovation transcends the limitations of cryptocurrency, allowing developers to create decentralized applications (dApps) with programmable logic that automate complex processes without intermediaries. Unlike traditional contracts requiring legal enforcement, smart contracts execute automatically when predefined conditions are met, fostering trust and transparency. This capability extends blockchain technology beyond finance, enabling applications in supply chain management, voting systems, digital identity, and a wide range of other fields where secure, verifiable, and automated agreements are crucial. The potential for these dApps to disrupt centralized systems and empower individuals with greater control over their data and transactions represents a significant shift in the digital landscape.

Decentralized systems, by their very nature, need robust accountability mechanisms to foster trust and deter malicious activity, and they must provide them without exposing participants’ data. Zero-knowledge proofs are a key cryptographic tool for reconciling these goals. They allow one party (the prover) to convince another (the verifier) that a statement is true without revealing anything beyond the fact that it is true, for example proving knowledge of a secret without disclosing the secret. This matters because it enables verification of transactions and data integrity on a decentralized network without compromising privacy. By allowing actions to be checked without revealing sensitive data, zero-knowledge proofs raise confidence in the system’s reliability and encourage wider adoption of decentralized technologies by easing the tension between transparency and data protection.
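As a concrete illustration of proving knowledge without disclosing it, the sketch below walks through one round of the Schnorr identification protocol, a classic proof of knowledge of a discrete logarithm that is zero-knowledge against an honest verifier. It is chosen here as a textbook example and is not taken from the paper. The group parameters are deliberately tiny so the arithmetic is easy to follow and would be completely insecure in practice; all function names are hypothetical.

```python
import secrets

# Toy group: G generates a subgroup of prime order Q modulo the prime P
# (2**11 % 23 == 1). Real deployments use groups of roughly 256-bit order.
P, Q, G = 23, 11, 2


def keygen():
    """Prover's secret x and public key y = G^x mod P."""
    x = secrets.randbelow(Q - 1) + 1
    return x, pow(G, x, P)


def commit():
    """Prover picks a random nonce r and sends the commitment t = G^r mod P."""
    r = secrets.randbelow(Q)
    return r, pow(G, r, P)


def challenge():
    """Verifier replies with a random challenge c."""
    return secrets.randbelow(Q)


def respond(x: int, r: int, c: int) -> int:
    """Prover answers with s = r + c*x mod Q; the random r masks x."""
    return (r + c * x) % Q


def verify(y: int, t: int, c: int, s: int) -> bool:
    """Verifier accepts if G^s == t * y^c mod P, without ever seeing x."""
    return pow(G, s, P) == (t * pow(y, c, P)) % P
```

The check works because `G^s = G^(r + c*x) = G^r * (G^x)^c = t * y^c`. The verifier sees only `t`, `c`, and `s`, and because `r` is uniformly random, `s` on its own carries no information about `x`.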

Future-Proofing Trust: Addressing Evolving Threats

Data consistency models dictate how changes to information are propagated and observed across a distributed system, and these models aren’t one-size-fits-all. At one end of the spectrum lies linearizability, which offers one of the strongest guarantees: every operation appears to take effect atomically at some point between its invocation and its response, so all clients observe a single order consistent with real time. That guarantee comes at the cost of latency and of availability during network partitions. Conversely, eventual consistency prioritizes availability and speed by allowing temporary inconsistencies, with the understanding that replicas will converge to the same state once updates stop arriving. The choice between these, and the many models in between such as sequential consistency and causal consistency, represents a fundamental trade-off. Systems demanding strict accuracy, like financial transactions, often favor stronger consistency, while those prioritizing responsiveness, such as social media feeds, frequently adopt eventual consistency, demonstrating that the ‘best’ model is fundamentally context-dependent and driven by application-specific requirements.
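Eventual consistency is easiest to see in a data structure designed for it. The sketch below implements a grow-only counter (G-Counter), a simple conflict-free replicated data type, as one illustrative way replicas can accept writes independently and still converge. It is an example chosen for this discussion, not a construction from the paper, and the class and method names are assumptions.

```python
from typing import Dict


class GCounter:
    """Grow-only counter: each replica increments only its own slot."""

    def __init__(self, replica_id: str) -> None:
        self.replica_id = replica_id
        self.counts: Dict[str, int] = {}

    def increment(self, amount: int = 1) -> None:
        """Record a local update without coordinating with other replicas."""
        current = self.counts.get(self.replica_id, 0)
        self.counts[self.replica_id] = current + amount

    def value(self) -> int:
        """The counter's value as seen by this replica."""
        return sum(self.counts.values())

    def merge(self, other: "GCounter") -> None:
        """Take the per-replica maximum. The operation is commutative,
        associative, and idempotent, so merges can arrive in any order."""
        for rid, count in other.counts.items():
            self.counts[rid] = max(self.counts.get(rid, 0), count)
```

Two replicas that increment independently will temporarily report different values, which is exactly the inconsistency the model permits. Once each has merged the other's state, both report the same total, and repeating a merge changes nothing.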

Current public-key cryptographic algorithms, which underpin secure communication and data protection worldwide, face an unprecedented challenge with the advancement of quantum computing. Algorithms such as RSA and ECC rely for their security on the hardness of integer factorization and discrete logarithms, problems that a sufficiently large, fault-tolerant quantum computer running Shor’s algorithm could solve efficiently. This looming vulnerability has spurred significant research into post-quantum cryptography: the development of cryptographic systems that resist attacks from both classical and quantum computers. These new algorithms explore diverse mathematical foundations, including lattice-based cryptography, code-based cryptography, multivariate cryptography, and hash-based signatures, aiming to provide a secure foundation for future digital infrastructure and maintain trust in an increasingly quantum-capable world. The transition to these post-quantum standards is a complex undertaking, requiring careful standardization, implementation, and deployment to safeguard sensitive data against future threats.
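Of the families listed above, hash-based signatures are the simplest to sketch, because their security rests only on the properties of a hash function. The code below implements a Lamport one-time signature as a minimal illustration. It is a textbook construction, not one of the standardized post-quantum schemes, and each key pair must be used to sign only a single message. The function names are hypothetical.

```python
import hashlib
import secrets
from typing import List


def H(data: bytes) -> bytes:
    """SHA-256, used both to derive public keys and to digest messages."""
    return hashlib.sha256(data).digest()


def keygen():
    """Secret key: 256 pairs of random values. Public key: their hashes."""
    sk = [[secrets.token_bytes(32), secrets.token_bytes(32)]
          for _ in range(256)]
    pk = [[H(a), H(b)] for a, b in sk]
    return sk, pk


def bits(msg: bytes) -> List[int]:
    """The 256 bits of the message digest, most significant bit first."""
    digest = H(msg)
    return [(digest[i // 8] >> (7 - i % 8)) & 1 for i in range(256)]


def sign(sk, msg: bytes) -> List[bytes]:
    """Reveal one secret value per digest bit (one-time use only)."""
    return [sk[i][b] for i, b in enumerate(bits(msg))]


def verify(pk, msg: bytes, sig: List[bytes]) -> bool:
    """Hash each revealed value and compare with the matching public hash."""
    if len(sig) != 256:
        return False
    return all(H(s) == pk[i][b]
               for i, (s, b) in enumerate(zip(sig, bits(msg))))
```

Forging a signature would require inverting the hash function for the unrevealed half of some pair. Signing a second message with the same key reveals more secret values and weakens the scheme, which is why practical hash-based designs combine many one-time keys under a tree structure.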

The escalating complexity of modern systems demands a shift from ad-hoc security assessments to a more formal, composable approach. Universal composability offers just that – a rigorous framework for dissecting and verifying the security of interconnected components. Rather than treating a system as a monolithic entity, it analyzes how individual parts interact, ensuring that security isn’t lost in translation as complexity grows. Current research, as detailed in the paper, explores the fusion of critical properties – agreement, consistency, privacy, verifiability, and accountability – aiming to create synergistic systems where each element strengthens the others. While a single, quantifiable breakthrough remains elusive, this line of inquiry promises a future where trustworthiness isn’t simply assumed, but demonstrably proven through the secure composition of its parts, bolstering long-term resilience against evolving threats.

Building synergistic architectures for distributed security demands a relentless focus on foundational correctness. It is not enough for a system to simply function; its security must be demonstrably, mathematically sound. The complexities inherent in achieving agreement, consistency, privacy, verifiability, and accountability across a distributed network are frustrating to work through, but only through rigorous, mathematically driven design can one hope to build systems where security isn’t an emergent property, but a provable one. The article’s emphasis on integrating these properties, rather than treating them in isolation, highlights this need for a holistic, disciplined approach to system construction.

The Horizon of Distributed Trust

The pursuit of distributed security, as evidenced by this exploration of synergistic architectures, inevitably encounters the limits of formalization. To demand agreement, consistency, privacy, verifiability, and accountability as integrated properties is not merely an engineering challenge, but a mathematical one. Current systems often achieve these properties in isolation, or trade one for another with blunt instruments. The true difficulty lies not in building such systems, but in proving their behavior under adversarial conditions – a demonstration of elegance, rather than mere functionality.

Future work must therefore shift from empirical validation to formal methods. The elegance of a solution is not measured by its performance on testnets, but by the logical necessity of its construction. Investigating the minimal set of axioms required to guarantee these synergistic properties – and, crucially, identifying the inevitable trade-offs revealed by those axioms – represents the next critical step. The goal is not simply a more secure system, but a provably secure one.

Ultimately, the field must confront a disquieting truth: perfect security is an asymptote, approached but never attained. The value, then, resides in understanding the nature of that imperfection, and in constructing systems where the remaining vulnerabilities are both quantifiable and acceptable – a harmony of symmetry and necessity, not a naive belief in absolute protection.

Original article: https://arxiv.org/pdf/2602.18063.pdf

Contact the author: https://www.linkedin.com/in/avetisyan/

2026-02-23 09:26