Smarter Aggregation: Minimizing Communication Costs in Polynomial Computing

![Compressed sensing, via the exploitation of orthogonality conditions detailed in Theorem 1 and minimized in Theorem 2, achieves feasibility with fewer responses (specifically [latex]N \leq d(K-1)[/latex]) than baseline individual decoding schemes, which require at least [latex]N \geq d(K-1) + 1[/latex] responses to ensure a viable solution, thereby demonstrating a fundamental efficiency gain in data acquisition.](https://arxiv.org/html/2601.10028v1/x1.png)

New research defines the limits of efficient data aggregation, revealing how to dramatically reduce the number of responses needed for weighted polynomial computations.

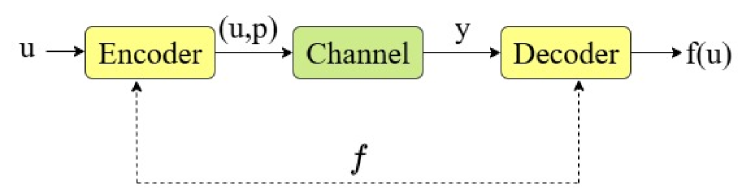

A novel coding scheme dynamically optimizes data access across diverse storage systems, accounting for both varying server capabilities and differing data request patterns.

As large language models become increasingly integrated into healthcare, understanding and mitigating the unique privacy risks across their entire lifecycle is paramount.

![For the sequence ‘AGGTCAGGTC’, vector partitioning yields distinct representations (0111001110, 0001100011, and 0110101101), demonstrating that additive combinations of these partitioned vectors can reconstruct the original data, as evidenced by [latex]01110 + 00011 = 01101[/latex] and [latex]1110 + 00011 = 01101[/latex], suggesting a decompositional structure inherent in the sequence’s representation.](https://arxiv.org/html/2601.10256v1/paper_figure.png)

A new study explores coding schemes designed to reliably transmit data even when information is inherently repeated and prone to errors.

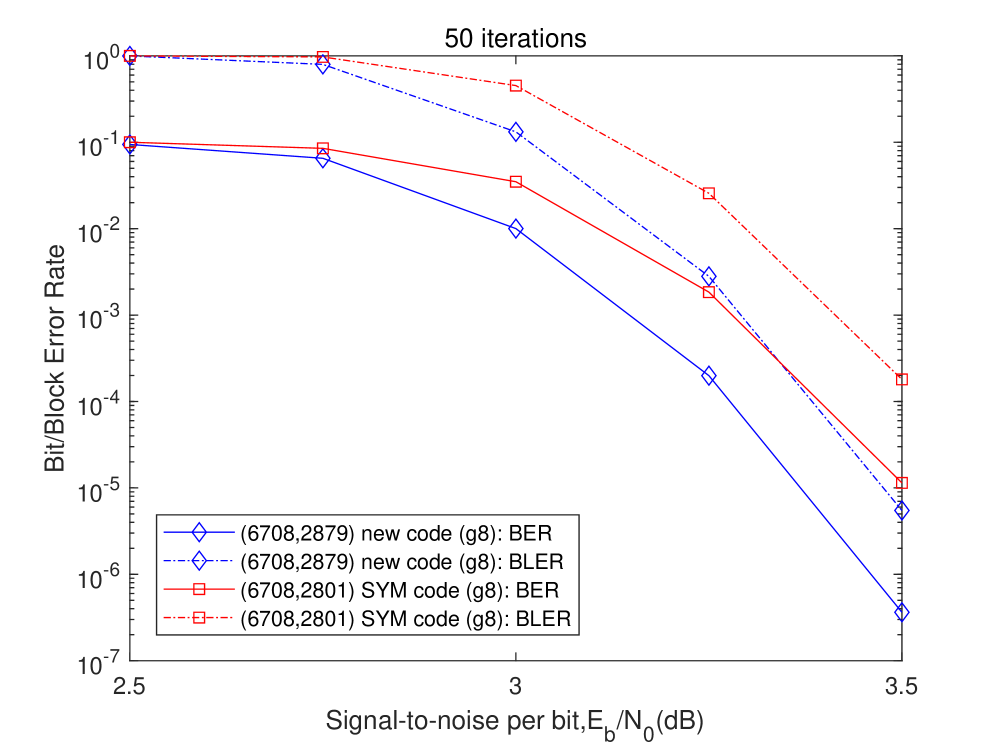

Researchers have developed innovative algebraic techniques for constructing QC-LDPC codes that achieve improved performance with significantly reduced code lengths.

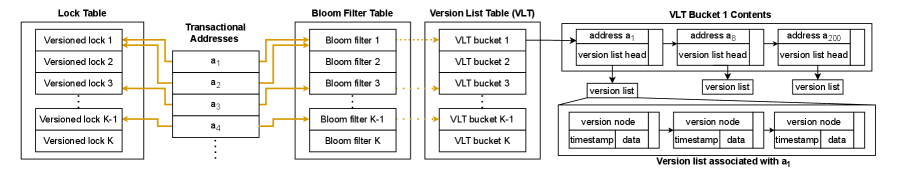

Researchers have developed Multiverse, a transactional memory system that intelligently balances optimistic and multiversioned concurrency control to boost performance across diverse workloads.

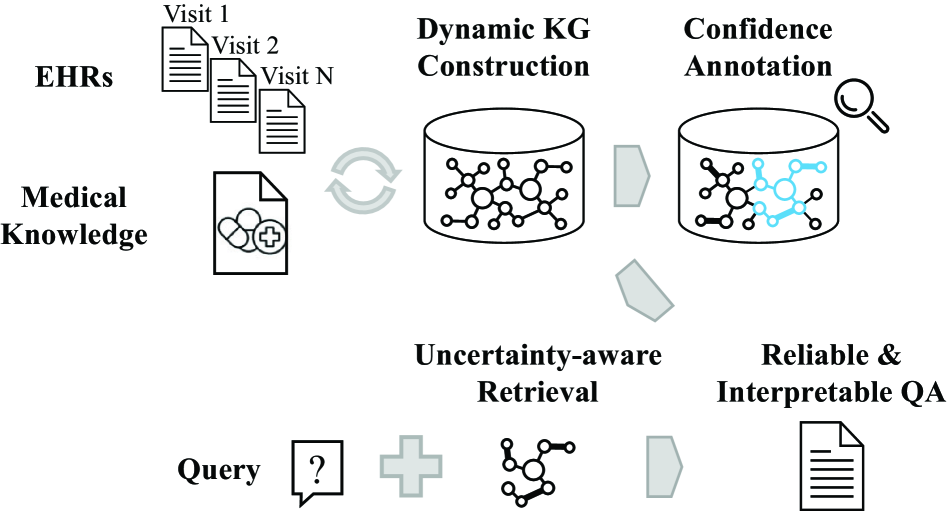

A new approach to knowledge representation allows question answering systems to dynamically adapt to evolving information and express the certainty of their responses.

This review explores how coded caching can be optimized to minimize communication costs in multi-user systems where retrieving data from different sources has varying expenses.

Researchers have developed and analyzed function-correcting codes designed to ensure accuracy even when dealing with Boolean functions where outputs are heavily skewed.

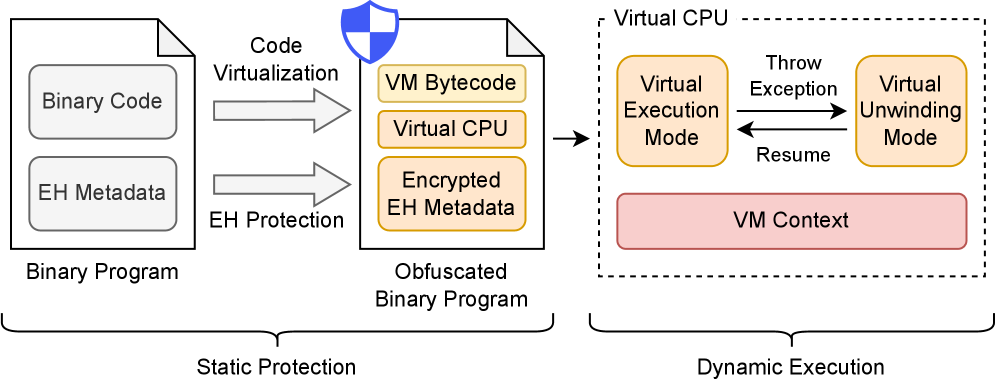

Researchers have developed a novel virtualization-based obfuscation framework that safeguards code and exception handling mechanisms against reverse engineering.