Reasoning Models: How Easily Can They Be Led Astray?

![Confidence-aware generation ([latex]CARG[/latex]) consistently stabilizes performance across standard large language models, yet it fails to benefit, and sometimes diminishes the accuracy of, models demonstrating substantial reasoning capabilities.](https://arxiv.org/html/2602.13093v1/x2.png)

New research reveals that while large reasoning models demonstrate improved consistency, they remain surprisingly vulnerable to subtle manipulation in extended conversations.

New research provides a mathematically proven method for training multi-agent systems to make stable, risk-aware decisions in complex environments.

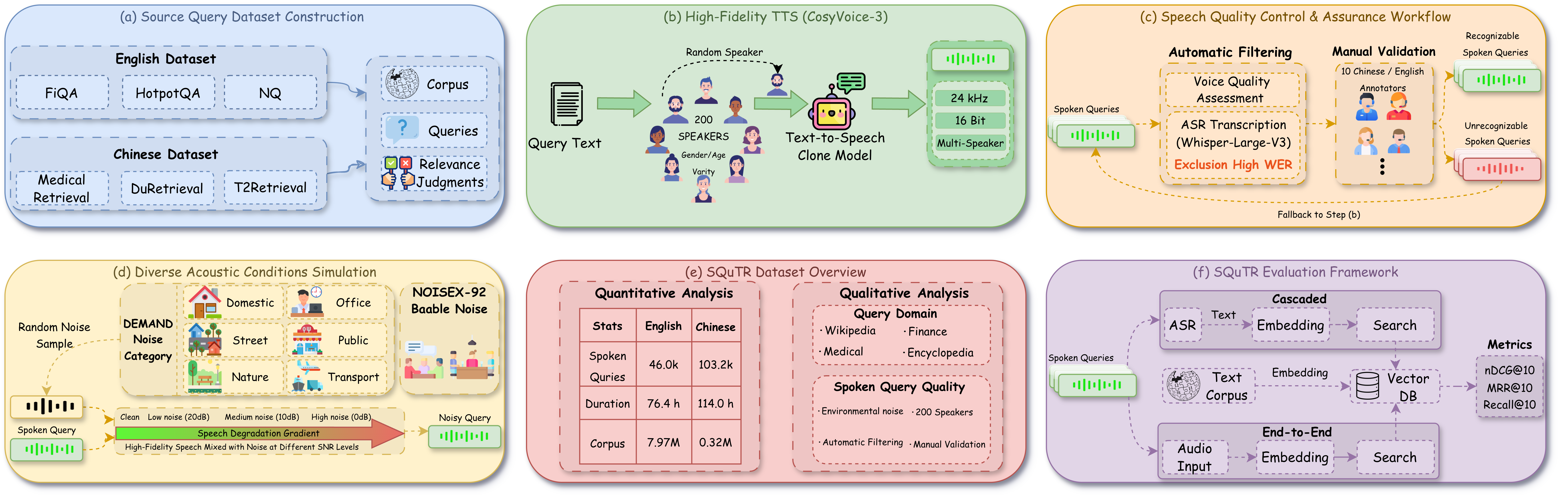

A new benchmark assesses how well spoken query retrieval systems perform in real-world acoustic conditions, moving beyond ideal lab settings.

This review explores the essential cryptographic techniques that guarantee both the privacy of voters and the integrity of electronic elections.

New research shows how to effectively remove specific information from large language models even after they’ve been compressed for efficient deployment.

A new formal language, CryptoChoreo, enables rigorous modeling and automated verification of complex, stateful cryptographic protocols.

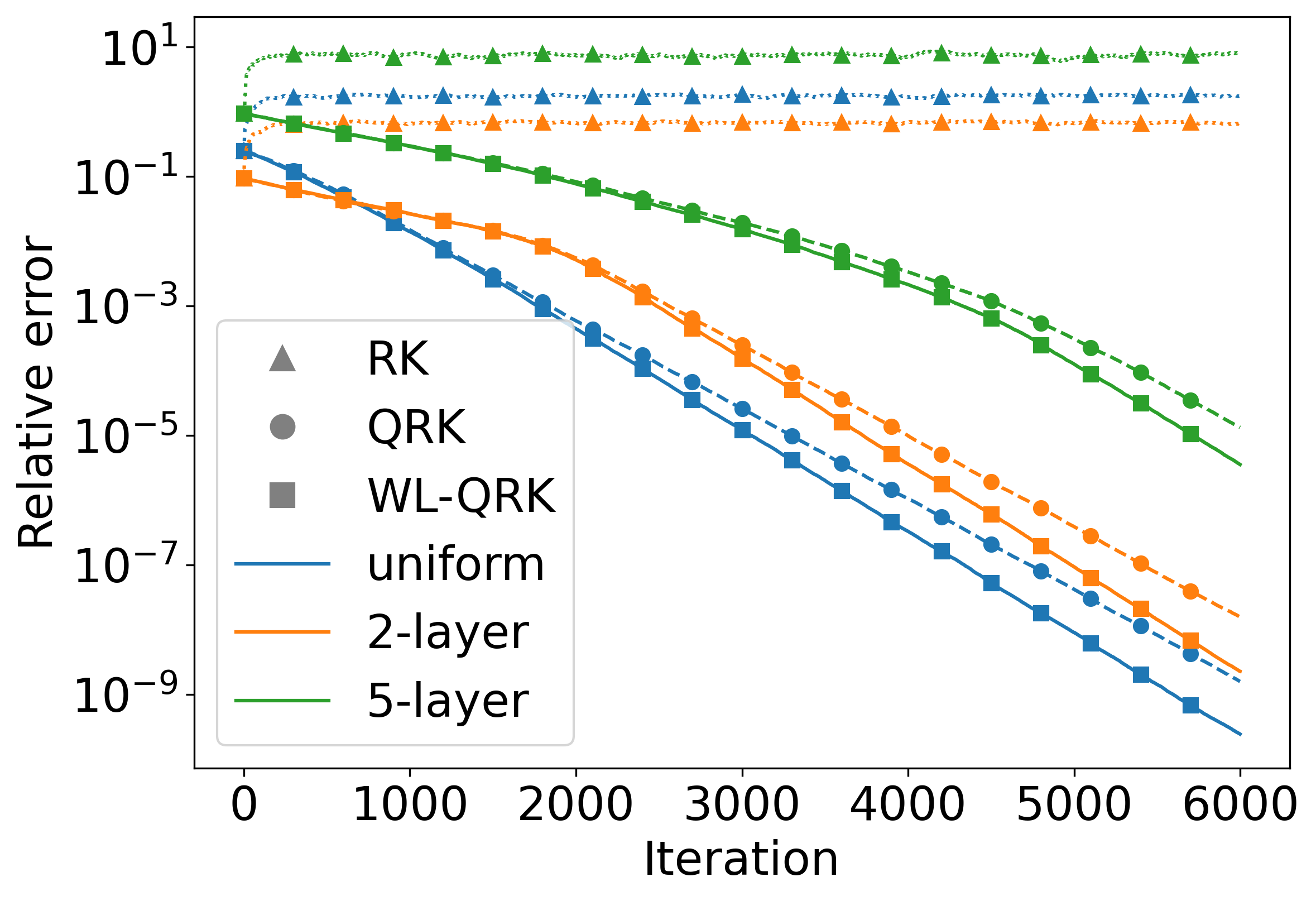

A new iterative method intelligently filters out unreliable data, accelerating and improving the robustness of solutions for linear equations affected by noise and errors.

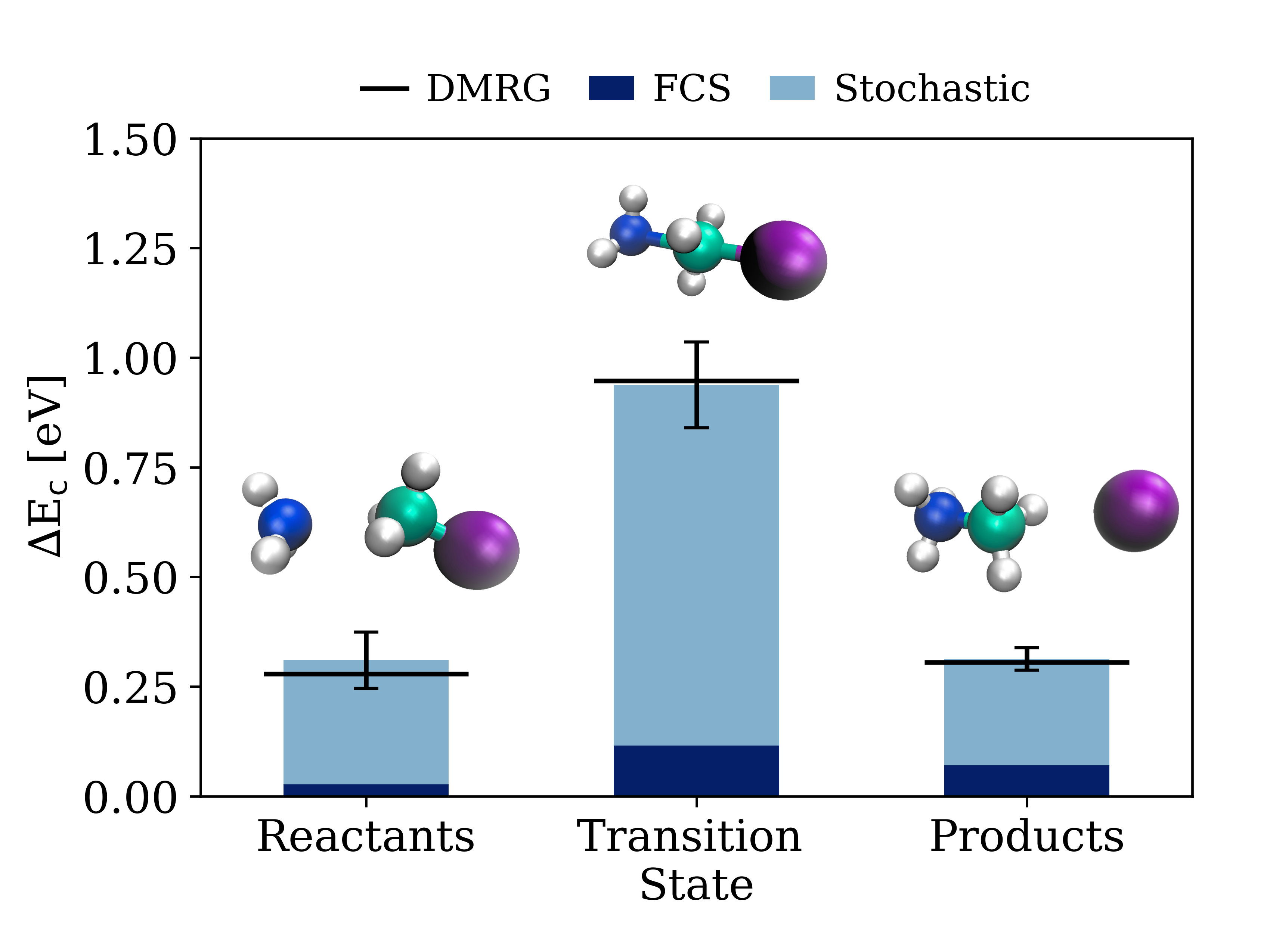

A new computational method efficiently tackles the complex problem of calculating electronic correlation energies in large, complex materials.

![Figure 1: Dynamic Blacklisting. A system dynamically adjusts its acceptance criteria, discarding previously viable options and prioritizing alternatives based on real-time performance feedback. The process is akin to iteratively refining a solution space by strategically eliminating unproductive pathways, effectively transforming a static rule set into a self-correcting mechanism where [latex] \text{Acceptance} = f(\text{Performance}, \text{Time}) [/latex].](https://arxiv.org/html/2602.11407v1/dynamic_blacklisting.jpg)

This review explores a robust security architecture designed to protect containerized systems from the growing threat of low-rate Distributed Denial of Service attacks.

New research demonstrates that maintaining user privacy in streaming data requires surprisingly large memory resources, fundamentally limiting the efficiency of certain algorithms.