Hijacking the Hand-Off: Securing AI Routing Systems

As large language models become integrated into complex workflows, the systems that direct those models are increasingly vulnerable to attack.


![The study of a deformed toric code reveals a “multi-critical” point, where two Ising transitions converge on the self-dual line [latex]h_x = h_z[/latex], leading to a topologically trivial phase characterized by spontaneous self-duality symmetry breaking, demonstrating how complex systems can transition between ordered states through precisely defined critical configurations.](https://arxiv.org/html/2601.20945v1/x1.png)

A new theoretical framework reveals a deeper connection between self-dual Higgs transitions and the emergence of exotic topological orders.

![The analysis simultaneously fits measured decay rates and forward-backward asymmetries of [latex]D^{0(+)}\to\bar{K}\ell^{+}\nu_{\ell}[/latex], demonstrating that inclusion of scalar current contributions is crucial for accurately modeling the ratios of differential decay rates [latex]\mathcal{R}_{\mu/e}[/latex].](https://arxiv.org/html/2601.21185v1/x754.png)

New measurements of D meson decays are providing crucial constraints on fundamental parameters and testing the limits of lepton flavor universality.

![A safety filter, learned solely from system transitions, employs a safety critic to map states and constraints to safety valuations and their derivatives, which then inform a quadratic programming solver, in conjunction with initial reference inputs, to generate demonstrably safe control actions, as evidenced by its ability to stabilize an inherently unstable system even when subjected to aggressive [latex]\pm 1[/latex] reference commands.](https://arxiv.org/html/2601.21297v1/Figures/Thumbnail_v5.png)

A new data-driven approach enables safe control of complex systems, even when their internal dynamics are unknown.
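The filtering step in the caption (a safety critic supplies a safety value and its derivatives, and a quadratic program minimally corrects a reference input) can be sketched as follows. This is a minimal illustration under loudly stated assumptions, not the authors' method: the learned critic is replaced by a hand-written safety function h(x) = 1 - x², the dynamics x_dot = x + u are assumed known (whereas the work described above learns from transitions), and the QP has a single constraint, so it is solved in closed form by projection onto a half-space rather than with a QP solver. The names `qp_safety_filter` and `step` are hypothetical.

```python
import numpy as np


def qp_safety_filter(u_ref, h, grad_h, f, g, alpha=1.0):
    """Minimally modify u_ref so that dh/dt >= -alpha * h holds.

    Solves the single-constraint QP
        min_u ||u - u_ref||^2   s.t.   a @ u >= b
    in closed form, where for dynamics x_dot = f + g @ u we have
        a = g^T grad_h   and   b = -alpha * h - grad_h @ f.
    """
    a = g.T @ grad_h
    b = -alpha * h - grad_h @ f
    slack = a @ u_ref - b
    if slack >= 0 or np.allclose(a, 0.0):
        # Reference already satisfies the constraint (or the input cannot
        # influence h at this state), so pass it through unchanged.
        return u_ref
    # Otherwise project u_ref onto the boundary of the constraint half-space.
    return u_ref - (slack / (a @ a)) * a


def step(x, u_ref, dt=0.01):
    """One explicit-Euler step of a hypothetical unstable plant x_dot = x + u.

    The hand-written "critic" h(x) = 1 - x^2 stands in for a learned one and
    encodes the assumed safe set |x| <= 1.
    """
    h, grad_h = 1.0 - x @ x, -2.0 * x
    f, g = x, np.eye(1)
    u = qp_safety_filter(u_ref, h, grad_h, f, g)
    return x + dt * (f + g @ u), u


x, xs = np.zeros(1), []
for k in range(2000):
    # Reference alternates between +1 and -1 every 200 steps.
    u_ref = np.array([1.0 if (k // 200) % 2 == 0 else -1.0])
    x, _ = step(x, u_ref)
    xs.append(x[0])
```

With only one affine constraint, the QP solution is simply the Euclidean projection of the reference input onto the constraint half-space; with several constraints or input bounds, the same objective and constraints would instead be passed to a general QP solver.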

Researchers have expanded the capabilities of automated protocol analysis to encompass the full algebraic power of Diffie-Hellman groups, unlocking more robust security proofs.

A new approach distributes the risk and responsibility of cryptographic key management, bolstering security for next-generation digital signatures.

![Robust principal component analysis recovers the underlying low-rank plus sparse structure of synthetic [latex]100 \times 80[/latex] matrices with a success rate consistently above 99.9% across 10,000 independent trials, where a trial counts as successful when the normalized reconstruction error is below [latex]10^{-3}[/latex].](https://arxiv.org/html/2601.21333v1/x4.png)

New research establishes rigorous guarantees for finding optimal solutions in robust principal component analysis, even when dealing with challenging, non-convex problems.
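The low-rank plus sparse recovery experiment in the caption can be illustrated with the sketch below. It builds a synthetic instance of the quoted size and separates it with principal component pursuit solved by an inexact augmented Lagrange multiplier method. This classical convex solver is swapped in purely for illustration and is not the non-convex approach the research analyzes; the rank (3), corruption rate (5%), outlier range, regularization λ = 1/√max(m, n), and the use of relative Frobenius error as the "normalized reconstruction error" are all assumptions.

```python
import numpy as np


def shrink(X, tau):
    """Entrywise soft-thresholding: the proximal operator of tau * ||X||_1."""
    return np.sign(X) * np.maximum(np.abs(X) - tau, 0.0)


def svt(X, tau):
    """Singular value thresholding: the proximal operator of tau * ||X||_*."""
    U, s, Vt = np.linalg.svd(X, full_matrices=False)
    return (U * shrink(s, tau)) @ Vt


def rpca_pcp(M, lam=None, tol=1e-7, max_iter=500):
    """Split M into low-rank L plus sparse S (principal component pursuit)."""
    m, n = M.shape
    lam = 1.0 / np.sqrt(max(m, n)) if lam is None else lam
    norm_two = np.linalg.norm(M, 2)            # spectral norm
    norm_M = np.linalg.norm(M)                 # Frobenius norm
    Y = M / max(norm_two, np.abs(M).max() / lam)  # dual variable initialization
    mu, mu_max, rho = 1.25 / norm_two, 1.25e7 / norm_two, 1.5
    S = np.zeros_like(M)
    for _ in range(max_iter):
        L = svt(M - S + Y / mu, 1.0 / mu)      # low-rank update
        S = shrink(M - L + Y / mu, lam / mu)   # sparse update
        Z = M - L - S                          # constraint residual
        Y += mu * Z                            # dual ascent step
        mu = min(mu * rho, mu_max)             # increase the penalty weight
        if np.linalg.norm(Z) / norm_M < tol:
            break
    return L, S


# Assumed synthetic setup matching the caption's 100x80 size:
# rank-3 ground truth plus 5% of entries corrupted by values in [-10, 10].
rng = np.random.default_rng(0)
m, n, r = 100, 80, 3
L0 = rng.standard_normal((m, r)) @ rng.standard_normal((r, n))
S0 = np.where(rng.random((m, n)) < 0.05, rng.uniform(-10, 10, (m, n)), 0.0)
L_hat, S_hat = rpca_pcp(L0 + S0)
# Assumed definition of "normalized reconstruction error".
err = np.linalg.norm(L_hat - L0) / np.linalg.norm(L0)
```

Under the caption's criterion, a trial would count as a success if `err < 1e-3`; repeating the construction over many random seeds gives an empirical success rate.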

A new architecture minimizes the performance overhead of homomorphic encryption, paving the way for faster and more practical privacy-preserving machine learning.

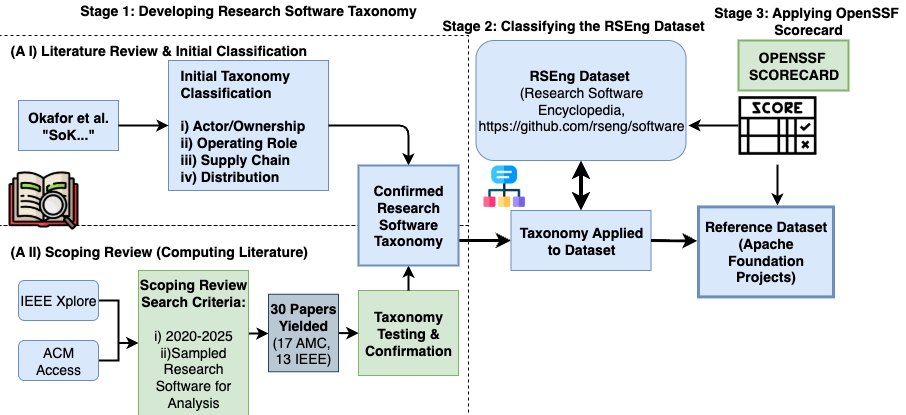

A standardized taxonomy for research software supply chains is crucial for consistently evaluating vulnerabilities and mitigating risks in academic and scientific computing.

Researchers have developed a decoding framework that empowers large language models to self-assess and refine their outputs, dramatically reducing factual errors.