Introduction

Okay, so this is the last piece of a really cool project I’ve been working on with one of my university professors. Basically, he gave a talk about how video games can actually teach us a lot about history, and he came to me with a bunch of questions. I took all my answers, added some extra thoughts and a few new topics we discussed, and put it all together. I’m hoping this will be interesting for gamers and people who study history – a good mix for everyone!

In this final section, I’d like to talk about the future of history and how we – players, journalists, and educators – should prepare. It’s increasingly important to understand digital media, and universities offering history degrees should think about adding courses specifically on historical media. If you’re interested in this topic, I recommend reading the first and second parts of this discussion for a more complete understanding.

Just a quick note: these questions come from someone who isn’t a video game expert, but is genuinely interested in learning about them. That’s why a few might seem unusual or a little off-beat. This actually makes the discussion more insightful, offering a fresh perspective from an academic who is exploring the world of video games and openly questioning its complexities.

Historical Media, Digital Literacy, and the Limitations of Gaming

What’s “digital literacy” all about? And why might it be a limiting factor?

As a huge fan of all kinds of media, I’ve always thought it’s interesting how easy it is to get into books and movies. All you really need for a book is, well, the book itself, and they’re usually pretty affordable. And almost everyone has had access to a TV for decades! But gaming? It’s a little different. There’s a bit more to it than just picking something up and starting – it feels like there’s a higher hurdle to jump before you can really get involved.

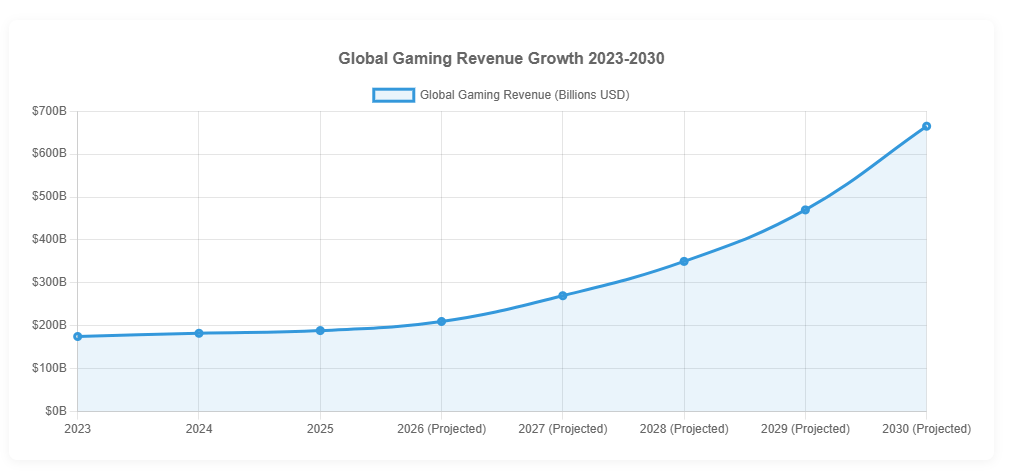

Over the past twenty years, gaming has become incredibly popular, with almost half the world’s population playing some type of game – often on mobile devices. However, there’s a big difference in gaming habits between generations, with each older generation playing less than the one after it. This trend could lead to gaming becoming as common as watching TV or reading books in the next 10 to 15 years, as Gen Alpha – over 80% of whom play video games weekly – starts entering the workforce.

The generational gap isn’t gaming’s only challenge. The player base is also fragmented across many different platforms and types of games. Mobile gaming is the most popular, attracting over 80% of players. PC gaming follows with 26%, and consoles with 18%. These numbers add up to more than 100% because many gamers (around 70%) play on multiple platforms.

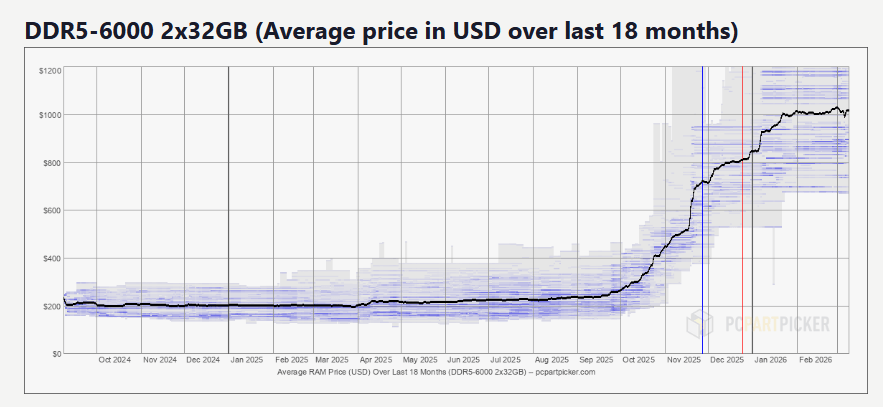

Gaming genres are particularly unpredictable – what’s popular can change quickly from year to year and even depending on where you are. We’ve seen trends come and go: flight simulators and strategy games were once dominant, then first-person shooters, role-playing games, and massive multiplayer online games had their moment. MOBAs rose in popularity, and survival games and ‘Souls-like’ games have also had their turns. This cycle of trends isn’t unusual – it happens with books, movies, and music too. However, games are significantly more expensive than these other forms of entertainment. A new game typically costs around $50, while a book might be $5-$10 and a streaming subscription around $15. Some argue that games offer more entertainment per hour, making them cheaper overall, but people outside the gaming world don’t usually think about it that way. The initial cost is still high, and you might not even be sure you’ll enjoy the game enough to make it worthwhile.
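To make the "entertainment per hour" argument concrete, here is a rough sketch of the arithmetic. The prices are the ballpark figures from the paragraph above; the hours of entertainment are purely hypothetical assumptions I picked for illustration, not measured data, and the `cost_per_hour` helper is just a name I made up.

```python
# Rough cost-per-hour comparison.
# Prices are the article's ballpark figures; the hours are hypothetical
# assumptions for illustration only, not measured data.


def cost_per_hour(price_usd: float, hours: float) -> float:
    """Return the price divided by the hours of entertainment it buys."""
    if hours <= 0:
        raise ValueError("hours must be positive")
    return price_usd / hours


# name -> (price in USD, assumed hours of entertainment)
examples = {
    "game": (50.0, 50.0),             # assumed: 50 hours of play
    "book": (10.0, 6.0),              # assumed: 6 hours of reading
    "streaming month": (15.0, 20.0),  # assumed: 20 hours watched per month
}

for name, (price, hours) in examples.items():
    per_hour = cost_per_hour(price, hours)
    print(f"{name}: ${price:.2f} upfront, ${per_hour:.2f} per hour")
```

Change the assumed hours and the ranking shifts, which is really the point: the per-hour argument depends entirely on how much you end up playing, while the upfront price is the same whether you enjoy the game or not.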

Hardware adds to the financial burden. Computers and laptops are expensive: even basic models start around $800, while more powerful machines can cost around $2000.

Let’s look at the gaming world as it stands in 2026. Luckily, younger generations are growing up with technology, so digital skills aren’t as much of a hurdle as they used to be. However, it’s still important to acknowledge that people have different levels of tech experience. Even among gamers, some are tech experts, while others just want to play a few games and struggle with even basic troubleshooting. Still others might be comfortable with playing but unfamiliar with more advanced tools like modding. Because digital skills vary so widely, here are three essential things I believe history professors should understand when incorporating video games into their teaching.

- Level 1: Be comfortable using a computer, including installing, running, and troubleshooting games;

- Level 2: Be informed about the games you’ll be running. Know a game’s limitations and strong points;

- Level 3: Be capable of modding the game (if applicable) or using in-game editors to fit the message you’re trying to convey.

Professors who reach these three levels can transform a dull class into an engaging and worthwhile experience. Instead of simply lecturing about a concept, such as a military tactic, why not use a game to demonstrate it in a dynamic way? Getting there takes effort and several steps, but even a basic grasp of all three levels would resolve most problems and ensure students have a positive learning experience.

Why should universities care about games at all?

With a background in both History and Media studies, I believe universities should offer a course examining how history is portrayed in different media. This wouldn’t be a course about the history of media itself – those already exist. Instead, it would analyze films, books, and games that depict historical events, looking at what they get right and wrong, and how media shapes our understanding of the past over time.

Throughout history, many myths have emerged, and some of these have even become accepted as truth, especially within gaming. We’ve been looking at these instances where fictional stories are now mistakenly believed to be real historical events.

What do games need to be considered historical?

It’s surprising how little thought has been given to what makes a video game truly “historical.” Many games claim historical accuracy, but simply setting a game in the past doesn’t make it a reliable representation of history. Games like Age of Empires 2 and Call of Duty, while based on real periods, don’t necessarily teach history well. We need to move beyond just identifying historical settings and instead define what criteria a game needs to meet to be considered a good and accurate portrayal of the past.

Determining whether a game truly qualifies as ‘historical’ is tricky. It’s not enough for a game to simply take place in the past. In fact, I don’t think anyone can create a perfect definition of what makes a game ‘historical,’ as any attempt will inevitably have its weaknesses. Nevertheless, I’ll share my thoughts on the matter.

- Criterion 1: Temporal setting. It needs to be set in a real, well-defined historical setting (e.g., 15th-century England). The time and geographical scope should not be limited.

- Criterion 2: The game’s rules must emulate the constraints and rules of the systems (economic, social, military, technological, etc.) of the time and place it’s trying to recreate.

- Criterion 3: The player must have agency to change historical events the majority of the time.

- Criterion 4: The impact of this agency needs to produce historically plausible outcomes.

- Criterion 5: It must have some semblance of material fidelity, such as language, technology, architecture, clothes, and art, depending on the level of abstraction of the game (the less abstract the game, the higher the level of material fidelity).

While there are valid points on both sides of each aspect, this is a strong proposal that addresses most key areas and offers a good starting point for further conversation. It’s a sensitive issue that deserves a more detailed examination than is possible here. As I mentioned, it’s a fascinating topic for anyone considering a challenging doctoral research project.

Games do have limitations. What are some of those?

Games always face challenges, but creating the right balance between realism and fun is particularly difficult. It’s a long-standing debate whether making a game more realistic also makes it harder to play, simply because more complex and interconnected systems can be overwhelming. While this was often true, game design is improving. Developers are now focusing more on user experience and using automation to create games that feel authentic without sacrificing engaging gameplay. Europa Universalis V is a recent and excellent example of this successful approach.

The biggest challenge lies in finding the right balance between historical accuracy and player freedom. History is fixed, so how do we create a game that allows players to explore ‘what ifs’ without feeling constrained? I believe we’ve been approaching this problem incorrectly. Consider the Battle of Normandy: should players be forced to replay it exactly as it happened? Perhaps, but games are most engaging when players are truly in charge, not simply following a predetermined path. While some games, like first-person shooters or smaller-scale titles focused on specific moments, benefit from a more rigid, historical approach, that doesn’t work for large-scale strategy games. These games span decades or even centuries, offer continuous gameplay, and will inevitably diverge from history over time. The further players get from the game’s starting point, the more likely it is that events will differ from what actually happened.

A good game should give players everything they need to begin a scenario – like armies, characters, and choices – and then let the situation unfold naturally. While the outcome doesn’t need to be perfectly historical, it shouldn’t be completely unbelievable. Unfortunately, many games don’t even offer that level of freedom.

Then, how do we balance free player agency and historical facts?

The idea of player agency and altered historical outcomes only becomes an issue if you view them as negative. But why should they be? Historical games don’t change history – they let us experience it. The strength of these games lies in their interactive nature, and that inherently means no action within the game will ever be truly historical – and that’s perfectly fine. The point isn’t to rewrite the past, but to immerse players and students in the conditions that led to historical events, helping them understand the choices made and how things unfolded.

Conclusion

I’m glad you’ve been following along with this series, and I appreciate those of you who’ve asked about when it will end. I still believe universities need to do much more to effectively use media – particularly video games – in their teaching. There’s a huge potential to make classes more engaging and truly educational, and who knows, maybe even inspire some students to become fans of games like those made by Firaxis!


2026-03-12 13:45