The $150M Crypto Scam Feds Finally Shut Down – Inside the Dark World of Digital Ponzi Sharks

From the growing bipartisan momentum behind the CLARITY Act, which has secured

The diamond proxy, that glittering jewel of modularity, had a soft spot. Its SafeOwnable facet, with more charm than caution, allowed ownership to be reassigned through an initialization path that never recorded that it had already run. Thus, the door remained open, and the attacker, a digital ghost at address 0x9f4…d5ca, slipped in unnoticed.
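
The flaw described above can be pictured with a toy model. This is only a sketch in Python, not the contract's actual code: the class `OwnableFacet`, its methods, and the caller names are all hypothetical, chosen to show why an initializer that never records its own completion lets a later caller take ownership.

```python
class OwnableFacet:
    """Toy stand-in for an ownership module (hypothetical, not the real contract)."""

    def __init__(self):
        self.owner = None
        self.initialized = False

    def initialize_unguarded(self, caller):
        # Flawed path: it neither checks nor sets the initialized flag,
        # so any later caller can run it again and replace the owner.
        self.owner = caller

    def initialize_guarded(self, caller):
        # Guarded path: refuse to run a second time.
        if self.initialized:
            raise PermissionError("already initialized")
        self.initialized = True
        self.owner = caller


# Unguarded: the second call silently overwrites the first owner.
flawed = OwnableFacet()
flawed.initialize_unguarded("deployer")
flawed.initialize_unguarded("attacker")

# Guarded: the second call is rejected and the owner is unchanged.
safe = OwnableFacet()
safe.initialize_guarded("deployer")
try:
    safe.initialize_guarded("attacker")
    second_call_rejected = False
except PermissionError:
    second_call_rejected = True
```

In the guarded variant the flag is set before ownership is assigned, so a repeat call fails instead of handing over control.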

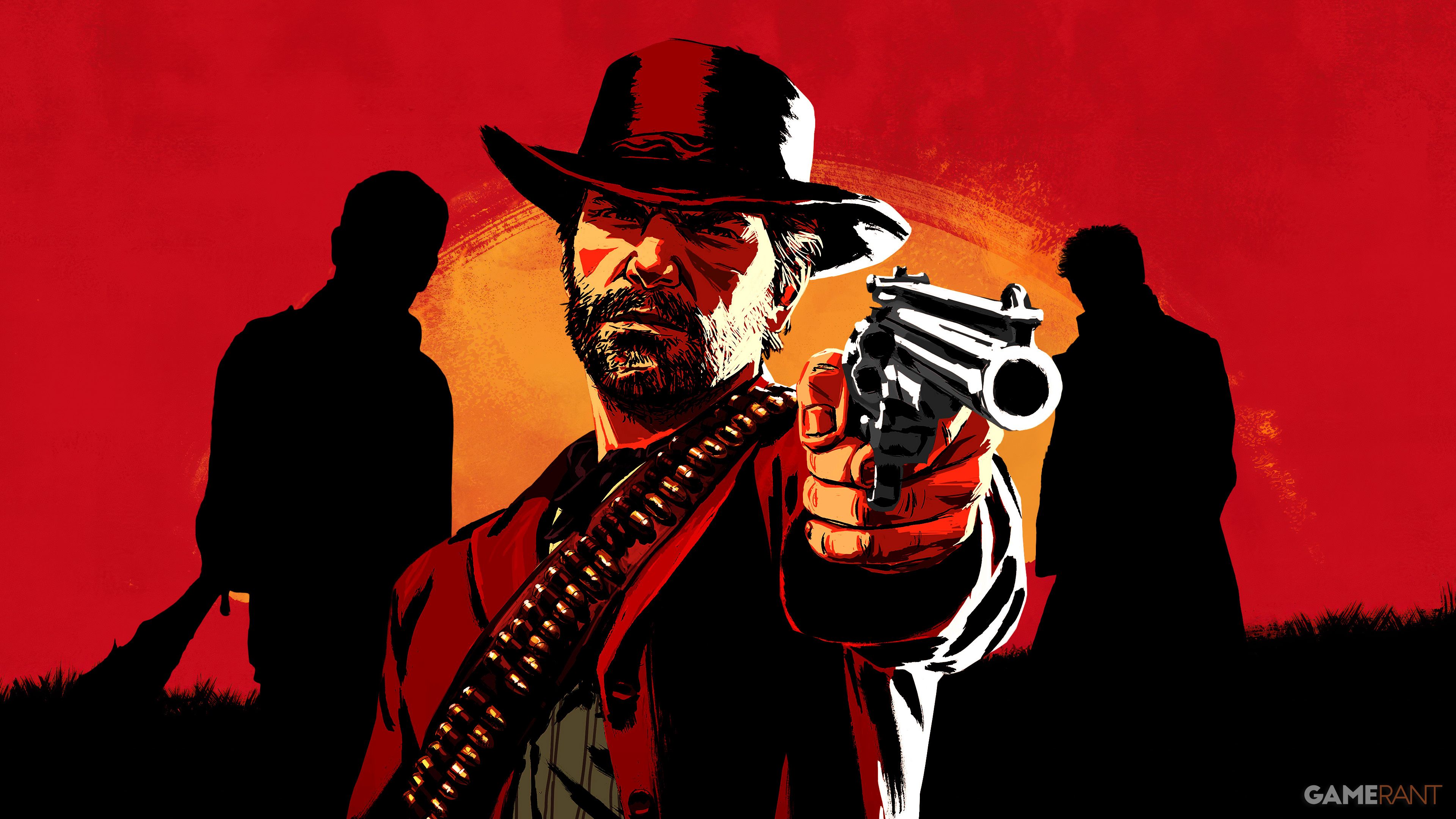

Red Dead Redemption 2 is widely considered one of the best western games ever made. It follows Arthur Morgan, a member of the Van der Linde gang, as the era of the Wild West comes to a close. The game is celebrated for its fantastic gameplay and incredible detail, but many players connect with it most through its compelling story and memorable characters. Now that actors have already brilliantly portrayed these characters, fans are having a hard time picturing anyone else taking on the roles.

If Daisy isn’t at the quest location when you get there, beat up the enemies nearby and then restart your game. When the game loads again, she should be there, allowing you to start the Neverness to Everness quest. See below for detailed instructions.

Saddles offer only a little bit of protection, and there aren’t any other protective gear options right now, so don’t expect to fully armor your mounts for battle. However, we’ve listed the locations of all the Saddlery shops in Crimson Desert, along with the unique saddle each one sells.

Even though One Piece has introduced countless unique abilities through Devil Fruits, there are still some powers that would fit perfectly into the story but haven’t appeared yet. This article will explore those missing Devil Fruits and speculate on which characters might possess them.

Nintendo began releasing amiibo figures in 2014 with the launch of Super Smash Bros. for Wii U. Now, over a decade later, they’re still planning to release more, with new figures scheduled through 2026. In July, we’ll see amiibo based on Resident Evil Requiem, and figures inspired by Splatoon Raiders and Kirby Air Riders are also coming soon.