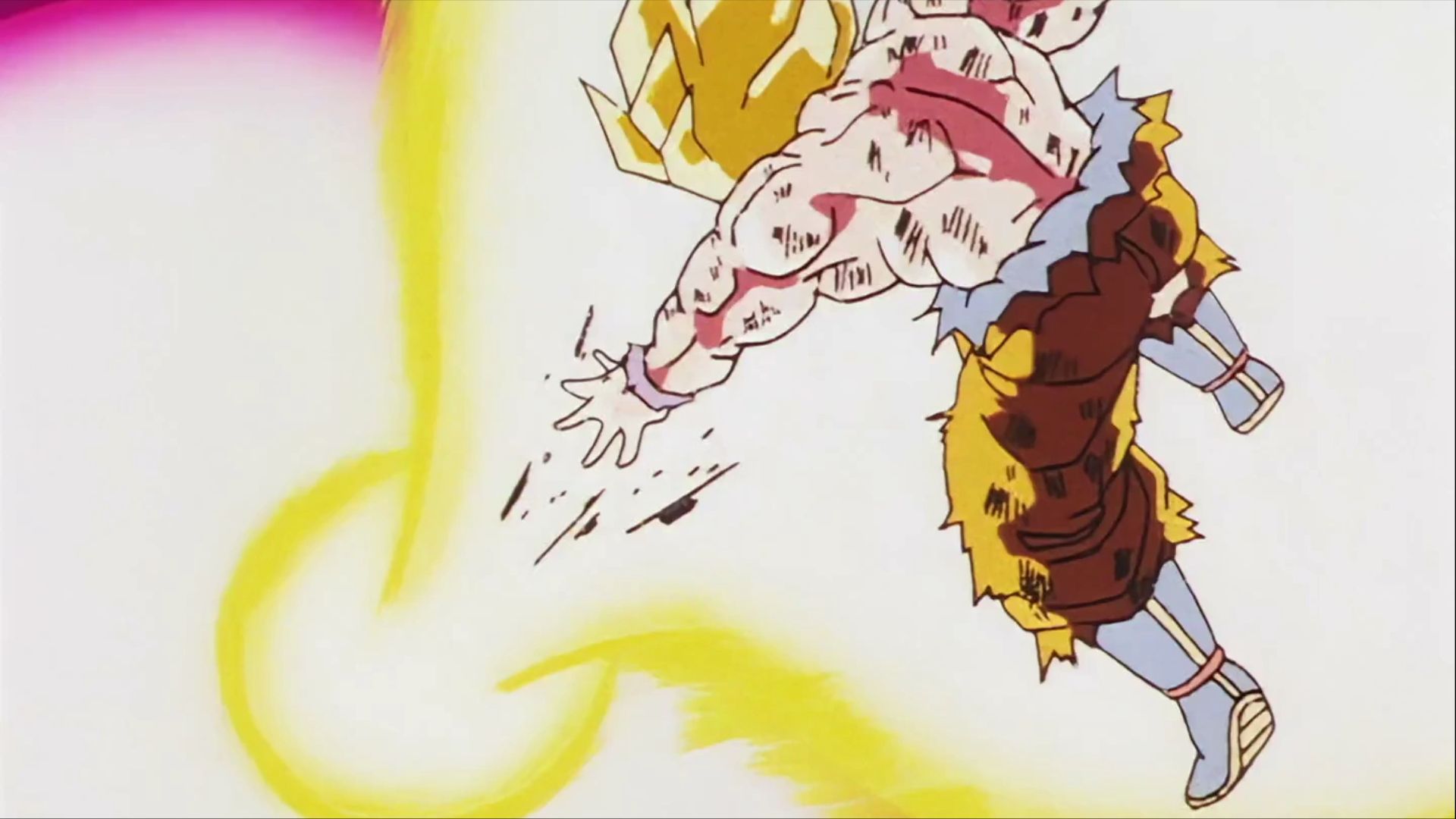

9 Strongest Blood Users in Shonen Anime

Some shonen anime characters take blood manipulation far beyond simple control. These standouts can turn their blood into a weapon, heal quickly, or even mimic others. That versatility makes the most skilled blood users in shonen anime extremely dangerous, and they should never be underestimated.