Author: Denis Avetisyan

A new approach to constructing quantum kernels using spectral phase encoding demonstrates increased resilience to noise, offering a pathway to more reliable quantum machine learning.

This review details how spectral phase encoding enhances kernel performance, particularly with datasets possessing inherent spectral structure, and explores its robustness against noise compared to classical and other quantum kernel methods.

Despite the promise of quantum machine learning, the vulnerability of quantum kernel methods to data corruption remains a critical challenge. The paper ‘Spectral Phase Encoding for Quantum Kernel Methods’ introduces and analyzes a new quantum feature construction, Spectral Phase Encoding (SPE), which combines a discrete Fourier transform with diagonal phase embeddings to enhance robustness. The reported results show that SPE significantly mitigates performance degradation under noise compared to alternative quantum and classical kernel approaches, particularly for datasets with inherent spectral structure. Does this robustness-first approach to quantum kernel design offer a viable pathway towards practical, noise-tolerant quantum machine learning in the near term?

The Inevitable Echo: Kernel Methods and the Limits of Classical Computation

Kernel methods represent a cornerstone of modern machine learning, providing a powerful means to analyze complex, non-linear data. These techniques implicitly map data into higher-dimensional spaces – often without explicitly calculating the transformation – enabling algorithms to identify intricate patterns and relationships. This ‘kernel trick’ is fundamental to algorithms like Support Vector Machines and Gaussian Processes, allowing them to effectively handle data where simple linear models fall short. By defining similarity measures – the kernels themselves – between data points, these methods sidestep the challenges of directly operating in high-dimensional feature spaces. The versatility of kernel methods stems from the ability to tailor these kernels to specific data types and problem structures, making them indispensable tools across a broad spectrum of applications, from image recognition and natural language processing to bioinformatics and financial modeling.
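As a concrete illustration of a kernel acting as a similarity measure, the sketch below builds the Gram matrix of a Gaussian (RBF) kernel with NumPy. The function name `rbf_kernel_matrix` and the `gamma` value are illustrative choices, not something taken from the paper.

```python
import numpy as np

def rbf_kernel_matrix(X, Y, gamma=1.0):
    """Gram matrix K[i, j] = exp(-gamma * ||X[i] - Y[j]||^2)."""
    X = np.asarray(X, dtype=float)
    Y = np.asarray(Y, dtype=float)
    # Squared distances via ||x||^2 + ||y||^2 - 2 x.y, without explicit loops.
    sq_dist = (
        (X ** 2).sum(axis=1)[:, None]
        + (Y ** 2).sum(axis=1)[None, :]
        - 2.0 * X @ Y.T
    )
    # Clamp tiny negative values caused by floating-point rounding.
    return np.exp(-gamma * np.maximum(sq_dist, 0.0))

# Three points in the plane; K[i, j] is near 1 for close points, near 0 for distant ones.
X = np.array([[0.0, 0.0], [1.0, 0.0], [0.0, 2.0]])
K = rbf_kernel_matrix(X, X, gamma=0.5)
print(np.round(K, 3))
```

A Support Vector Machine or Gaussian Process only ever needs the entries of `K`; the implicit high-dimensional feature vectors are never formed.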

Traditional kernel methods, while powerful tools in machine learning, encounter significant hurdles when dealing with modern datasets. The kernel matrix holds one similarity value for every pair of data points, so the cost of computing and storing it grows quadratically with the number of samples. For large datasets this becomes prohibitive, limiting scalability and forcing approximations that can sacrifice accuracy. High dimensionality adds a separate difficulty: as the number of features describing each data point grows, data become sparse in feature space and distance-based similarities lose contrast, a phenomenon known as the ‘curse of dimensionality’ that makes it harder to generalize from training data to unseen examples. Consequently, researchers are actively exploring alternative approaches to overcome these limitations and unlock the full potential of kernel-based learning.
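The storage problem is easy to quantify. The short sketch below assumes a dense matrix of 64-bit floats (8 bytes per entry); the helper name `kernel_matrix_bytes` is illustrative.

```python
def kernel_matrix_bytes(n_samples, bytes_per_entry=8):
    """Memory needed for a dense n x n kernel matrix (float64 by default)."""
    return n_samples * n_samples * bytes_per_entry

for n in (1_000, 10_000, 100_000):
    gigabytes = kernel_matrix_bytes(n) / 1e9
    print(f"n = {n:>7,} samples -> {gigabytes:.3f} GB")
```

At 100,000 samples the dense matrix already needs 80 GB, which is why large-scale kernel learning usually falls back on approximations.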

Quantum Kernel Methods apply the principles of quantum computation to the bottlenecks of classical kernel techniques. Data are encoded into quantum states, and the kernel function, which measures the similarity between data points, is obtained from the overlap of those states using quantum superposition, entanglement, and interference, without explicitly constructing the high-dimensional feature space. In certain cases, quantum algorithms offer the potential to evaluate kernels exponentially faster than known classical methods, although such speedups depend on the problem and are not guaranteed in general. If realized, they could improve performance on tasks such as image classification, anomaly detection, and complex regression, and support the analysis of datasets previously considered intractable in fields ranging from drug discovery to materials science.

Encoding the Echo: Spectral Phase Encoding and the Quantum Feature Map

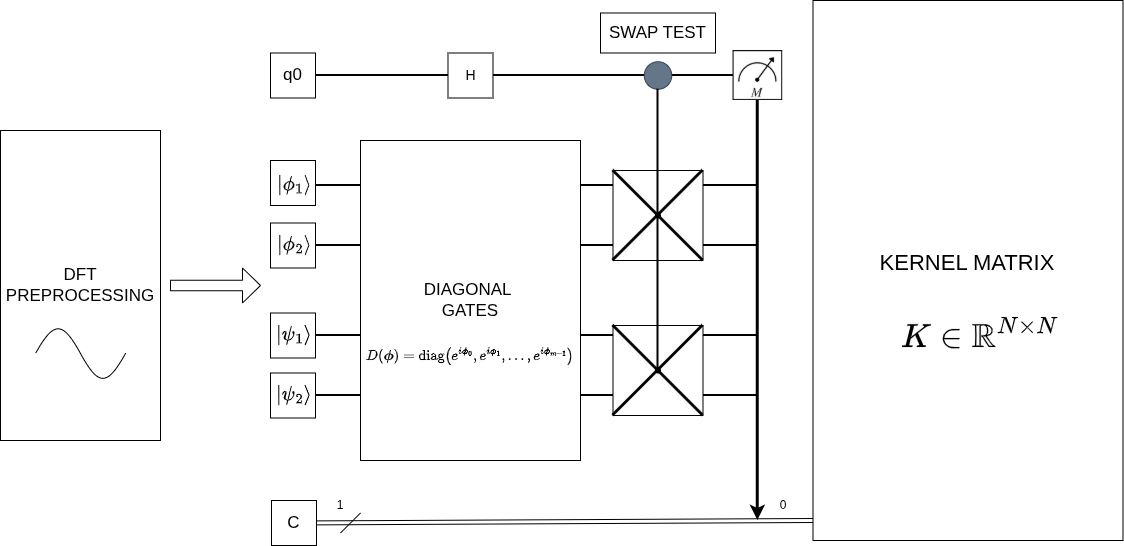

Spectral Phase Encoding generates quantum kernels through the combined application of a Discrete Fourier Transform (DFT) and diagonal quantum gates. The DFT serves to decompose data into its constituent spectral coefficients, effectively transforming the input data into the frequency domain. These spectral coefficients are then directly encoded as the angles of rotation for a series of diagonal quantum gates – typically R_z gates – applied to the qubits. The resulting quantum state represents a kernel feature map where each spectral coefficient modulates the phase of the corresponding qubit, establishing a direct correspondence between the input data’s spectral representation and the quantum state’s phase encoding. This process allows for the construction of quantum kernels without the explicit need for complex quantum circuits, relying instead on efficient classical DFT computations combined with relatively simple quantum operations.

Spectral Phase Encoding uses the phase degrees of freedom of a quantum state to represent data. Specifically, the amplitudes of the Discrete Fourier Transform (DFT) of an input vector are encoded as the phases of a quantum state: each spectral coefficient is mapped to the rotation angle of a corresponding diagonal gate, typically R_z. The resulting state is a superposition of basis states whose relative phases are determined by the spectral coefficients. This gives a compact representation of the spectral data within the quantum system, potentially reducing the resources required for subsequent quantum computations compared to encoding the same values directly as amplitudes.
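The encoding described above can be sketched as a small statevector simulation. This is a minimal illustration under stated assumptions, not the paper's exact circuit: it assumes a Hadamard on every qubit before the R_z rotations, keeps only the first `n_qubits` DFT magnitudes, and multiplies them by a hypothetical `scale` factor to obtain angles. The kernel is taken to be the state fidelity |⟨φ(x)|φ(y)⟩|^2.

```python
import numpy as np

def spectral_phases(x, n_qubits, scale=0.5):
    """Classical front-end: magnitudes of the first n_qubits DFT coefficients,
    multiplied by an assumed scale factor to give rotation angles."""
    coeffs = np.fft.fft(np.asarray(x, dtype=float))[:n_qubits]
    return scale * np.abs(coeffs)

def phase_state(thetas):
    """Statevector of H on every qubit followed by R_z(theta_k) on qubit k."""
    state = np.array([1.0 + 0.0j])
    for theta in thetas:
        # R_z(theta) H |0> = (e^{-i theta/2} |0> + e^{+i theta/2} |1>) / sqrt(2)
        qubit = np.array([np.exp(-0.5j * theta), np.exp(0.5j * theta)]) / np.sqrt(2)
        state = np.kron(state, qubit)
    return state

def spe_kernel(x, y, n_qubits=3, scale=0.5):
    """Fidelity kernel k(x, y) = |<phi(x)|phi(y)>|^2."""
    a = phase_state(spectral_phases(x, n_qubits, scale))
    b = phase_state(spectral_phases(y, n_qubits, scale))
    return float(np.abs(np.vdot(a, b)) ** 2)

t = np.arange(8)
x = np.sin(2 * np.pi * 1 * t / 8)   # energy in frequency bin 1
y = np.sin(2 * np.pi * 2 * t / 8)   # energy in frequency bin 2
print(spe_kernel(x, x), spe_kernel(x, y))
```

Because every gate acts on a single qubit, the state is a product state and the kernel reduces to a product of cos^2((θ_k − θ'_k)/2) terms. Signals with the same magnitude spectrum, such as time-shifted copies, map to the same state under these assumptions.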

Where established data encoding techniques often translate classical data into the amplitudes of a quantum state, Spectral Phase Encoding uses the phase of the quantum state to represent the components of the kernel feature map. This approach allows for a more compact and expressive representation of complex kernel functions within the quantum computational framework. The encoding maps data points to a higher-dimensional feature space defined by the kernel, and the phase encoding is intended to increase the capacity of this feature map, so that it can represent non-linear relationships and improve the robustness and discriminatory power of the resulting quantum kernel.

Classical kernel methods, while powerful, face scalability challenges due to the computational cost of evaluating kernel matrices, which grows polynomially with the dataset size. Spectral Phase Encoding addresses this limitation by representing data as quantum states and leveraging the inherent properties of quantum computation. Specifically, quantum algorithms can perform certain linear algebra operations, such as calculating inner products required for kernel evaluation, with a potential speedup over their classical counterparts. This allows for the creation of kernel feature maps in a quantum Hilbert space, potentially enabling the processing of larger datasets and more complex feature spaces than are feasible with classical methods. The anticipated benefit lies in reducing the computational complexity from polynomial to logarithmic in certain cases, thus improving the efficiency of kernel-based machine learning algorithms.

The Inevitable Noise: Assessing Robustness in a Quantum World

Quantum computations, unlike their classical counterparts, are fundamentally vulnerable to various sources of noise during operation. These noise sources, stemming from imperfections in quantum hardware and environmental interactions, introduce errors that can corrupt the computational process and diminish the accuracy of results. Common noise types include bit-flip errors, phase-flip errors, and depolarization, all of which disrupt the delicate quantum states \ket{\psi} representing information. The severity of performance degradation is directly correlated with the level of noise present in the system; higher noise levels generally lead to increased error rates and a reduction in the fidelity of the computation. Therefore, understanding and mitigating the effects of noise is critical for building practical and reliable quantum computers.

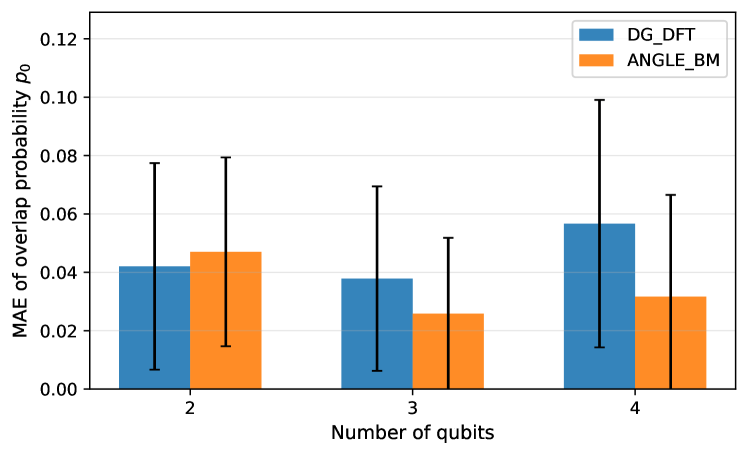

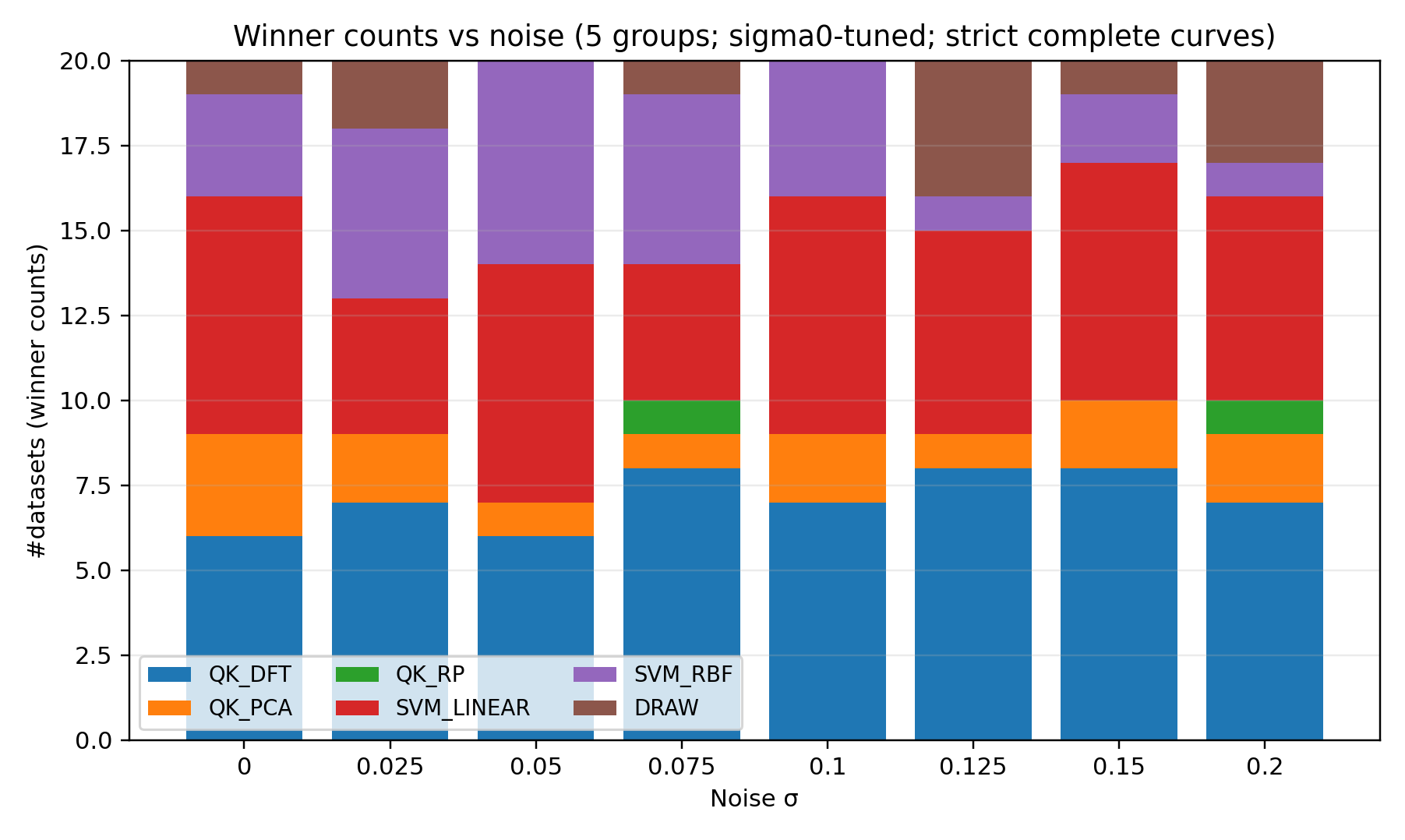

Spectral Phase Encoding exhibits superior robustness to Additive Gaussian Noise compared to other quantum constructions tested. Quantitative evaluation reveals a degradation slope of β_{QK-DFT} = -0.214, representing the rate of performance decrease as noise levels increase. This value signifies the smallest degradation observed among the evaluated quantum constructions, indicating a greater resilience to noise-induced errors and a more stable performance profile under noisy conditions. The degradation slope is a key metric for assessing the noise tolerance of quantum algorithms and constructions.

Noise-Aware Evaluation was implemented to quantify the performance of quantum constructions under varying degrees of noise. This strategy involves systematically increasing the amplitude of noise added to the quantum computations and measuring the resulting degradation in performance metrics. Specifically, noise was introduced during the quantum state preparation and measurement phases. The rate of performance decline with increasing noise – expressed as a degradation slope – was then calculated for each construction. This allows for a direct comparison of robustness, identifying constructions that maintain acceptable performance levels even in the presence of significant noise. The methodology provides a quantitative basis for assessing the practical viability of different quantum algorithms and constructions in noisy intermediate-scale quantum (NISQ) devices.
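The evaluation loop can be sketched as follows, assuming the noise is additive Gaussian noise applied to the input features and the degradation slope is an ordinary least-squares fit of score against noise level. The paper's actual estimator may differ. The `evaluate` callback is a hypothetical stand-in for "train and score a model", and the numbers are illustrative, not results from the paper.

```python
import numpy as np

def noise_sweep(evaluate, X, sigmas, seed=0):
    """Score a model on copies of X corrupted by Gaussian noise of growing amplitude.

    `evaluate` is a caller-supplied function mapping a feature matrix to a score.
    """
    rng = np.random.default_rng(seed)
    scores = []
    for sigma in sigmas:
        X_noisy = X + sigma * rng.standard_normal(X.shape)
        scores.append(evaluate(X_noisy))
    return np.array(scores)

def degradation_slope(noise_levels, scores):
    """Least-squares slope of score versus noise level."""
    slope, _intercept = np.polyfit(noise_levels, scores, deg=1)
    return float(slope)

# Illustrative accuracies only: a model that loses 0.02 accuracy per 0.1 of noise.
noise_levels = np.array([0.0, 0.1, 0.2, 0.3, 0.4])
scores = np.array([0.90, 0.88, 0.86, 0.84, 0.82])
slope = degradation_slope(noise_levels, scores)
print(f"degradation slope: {slope:.3f}")
```

A slope closer to zero means performance falls more slowly as noise grows, which is the sense in which one construction is called more robust than another.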

Performance validation used a Support Vector Machine (SVM) trained on quantum kernels built from two classical front-ends applied to the input data: the Discrete Fourier Transform (DFT) and Principal Component Analysis (PCA). Analysis of performance degradation, measured as the slope of performance decline with increasing noise, revealed a statistically significant difference between the two kernel types. Specifically, the difference in degradation slopes, \Delta_{QK-PCA - QK-DFT} = -0.354, indicates that the QK-DFT kernel is substantially more robust to noise than the QK-PCA kernel within the tested SVM framework. This difference suggests that the features extracted by the DFT are more resilient to noise-induced performance loss when used with an SVM for classification.
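To see what the reported difference implies, assume it is a plain difference of slopes (QK-PCA minus QK-DFT), which is an assumption about the paper's definition rather than something the text states. Combined with the QK-DFT slope of -0.214 quoted earlier, the arithmetic gives an implied QK-PCA slope. This value is derived here and is not reported in the text.

```latex
\Delta_{\text{QK-PCA} - \text{QK-DFT}}
  = \beta_{\text{QK-PCA}} - \beta_{\text{QK-DFT}} = -0.354
\quad\Longrightarrow\quad
\beta_{\text{QK-PCA}} = -0.214 + (-0.354) = -0.568
```

Under that reading, QK-PCA would lose performance more than twice as fast as QK-DFT as noise increases.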

Beyond the Horizon: Adaptability and the Future of Quantum Kernels

The versatility of Spectral Phase Encoding extends beyond the Discrete Fourier Transform. Although the technique originally relies on Fourier basis functions to map data into a quantum Hilbert space, experiments confirm that it can be integrated with alternative dimensionality reduction methods, namely Principal Component Analysis and Random Projections. The core principle of the encoding, using interference patterns to represent data, remains effective in the feature spaces defined by these alternatives. Principal Component Analysis focuses on the most significant variations in the data, while Random Projections offer a computationally cheap way to reduce dimensionality without substantial information loss. This adaptability allows the front-end to be chosen according to data characteristics and computational constraints, potentially overcoming limitations inherent in the fixed basis of the Fourier Transform and broadening the range of problems the technique can address.
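The interchangeable front-ends can be sketched with NumPy alone. The code below assumes the same product-state phase embedding for all three variants (a Hadamard plus one R_z rotation per qubit), so the fidelity kernel has the closed form of a product of cos^2 terms. The function names, the Gaussian projection matrix, and fitting PCA on the evaluation data itself are illustrative simplifications.

```python
import numpy as np

def dft_features(X, k):
    """Magnitudes of the first k DFT coefficients of each row."""
    return np.abs(np.fft.fft(X, axis=1))[:, :k]

def pca_features(X, k):
    """Projection of centered rows onto the top-k principal components (via SVD)."""
    Xc = X - X.mean(axis=0)
    _, _, vt = np.linalg.svd(Xc, full_matrices=False)
    return Xc @ vt[:k].T

def random_projection_features(X, k, seed=0):
    """Gaussian random projection of each row down to k dimensions."""
    rng = np.random.default_rng(seed)
    R = rng.standard_normal((X.shape[1], k)) / np.sqrt(k)
    return X @ R

def phase_kernel(A, B):
    """Fidelity kernel of the assumed phase embedding:
    K[i, j] = prod_q cos^2((A[i, q] - B[j, q]) / 2)."""
    diff = A[:, None, :] - B[None, :, :]
    return np.prod(np.cos(diff / 2.0) ** 2, axis=2)

rng = np.random.default_rng(1)
X = rng.standard_normal((6, 16))   # 6 samples, 16 raw features
n_qubits = 4

front_ends = {
    "dft": dft_features(X, n_qubits),
    "pca": pca_features(X, n_qubits),
    "rp": random_projection_features(X, n_qubits),
}
kernels = {name: phase_kernel(F, F) for name, F in front_ends.items()}
for name, K in kernels.items():
    print(name, np.round(K[0, :3], 3))
```

Only the classical preprocessing changes between variants; the quantum part of the pipeline stays the same, which is what makes a like-for-like robustness comparison possible.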

Efficiently calculating kernel values is paramount for many quantum machine learning algorithms, and the Swap Test provides an effective solution. This quantum algorithm estimates the overlap between two quantum states without measuring the states themselves; only a single ancilla qubit is measured. The overlap directly yields the kernel value, a measure of similarity between data points in a potentially high-dimensional feature space. The test applies a Hadamard gate to the ancilla, a controlled-SWAP between the two state registers, and a second Hadamard; the ancilla then reads 0 with probability (1 + |\langle\psi|\phi\rangle|^2)/2. Repeating the test estimates the kernel value with a precision determined by the number of repetitions. This allows for the practical implementation of kernel methods, such as quantum support vector machines, without classically computing inner products in the full feature space.
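The measurement statistics of the Swap Test can be reproduced with a small classical simulation. The sketch below tracks the ancilla-0 branch of the circuit (Hadamard, controlled-SWAP, Hadamard) and then samples the ancilla a finite number of times. It assumes normalized input statevectors; the function name and shot counts are illustrative.

```python
import numpy as np

def swap_test_estimate(psi, phi, shots=2000, seed=0):
    """Estimate |<psi|phi>|^2 from simulated swap-test ancilla outcomes.

    After H, controlled-SWAP, H, the ancilla-0 branch is (|psi,phi> + |phi,psi>) / 2,
    so P(ancilla = 0) = (1 + |<psi|phi>|^2) / 2.
    """
    psi = np.asarray(psi, dtype=complex)
    phi = np.asarray(phi, dtype=complex)
    branch0 = (np.kron(psi, phi) + np.kron(phi, psi)) / 2.0
    p_zero = float(np.clip(np.linalg.norm(branch0) ** 2, 0.0, 1.0))
    rng = np.random.default_rng(seed)
    zeros = rng.binomial(shots, p_zero)          # number of 0 outcomes in `shots` runs
    return max(0.0, 2.0 * zeros / shots - 1.0)   # invert p_zero = (1 + k) / 2

zero = np.array([1.0, 0.0])
plus = np.array([1.0, 1.0]) / np.sqrt(2)         # true overlap |<0|+>|^2 = 0.5

for shots in (100, 10_000):
    print(shots, swap_test_estimate(zero, plus, shots=shots))
```

The statistical error of the estimate shrinks roughly as one over the square root of the number of shots, so each extra digit of precision in a kernel entry costs about a hundredfold more repetitions.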

Despite the limitations imposed by Kernel Concentration, which can hinder the scalability of certain quantum machine learning approaches, recent research indicates promising pathways toward developing algorithms resilient to noise. Investigations reveal that the quantum kernel with a Discrete Fourier Transform front-end (QK-DFT) is notably more stable in noisy environments than a classical Support Vector Machine with a Radial Basis Function kernel (SVM-RBF). Empirical results quantify this advantage: the degradation slope of QK-DFT under increasing noise is less steep than that of SVM-RBF, with a difference of \Delta_{SVM-RBF - QK-DFT} = -0.149. This finding suggests that QK-DFT’s inherent properties may offer a significant benefit in practical quantum machine learning applications where noise is unavoidable, potentially leading to more robust and reliable algorithms.
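Kernel concentration itself can be illustrated with a toy experiment. The sketch below assumes a product-state phase embedding (one rotation per qubit) and, as a worst case, phases drawn uniformly at random. Real data with spectral structure is not uniform, so this shows the mechanism rather than the behavior of any dataset in the paper.

```python
import numpy as np

def random_phase_kernel_values(n_qubits, n_pairs=2000, seed=0):
    """Kernel values prod_q cos^2((a_q - b_q) / 2) for uniformly random phase pairs."""
    rng = np.random.default_rng(seed)
    a = rng.uniform(0.0, 2.0 * np.pi, size=(n_pairs, n_qubits))
    b = rng.uniform(0.0, 2.0 * np.pi, size=(n_pairs, n_qubits))
    return np.prod(np.cos((a - b) / 2.0) ** 2, axis=1)

for n in (2, 4, 8, 16):
    values = random_phase_kernel_values(n)
    print(f"{n:>2} qubits: mean = {values.mean():.2e}, std = {values.std():.2e}")
```

Each qubit contributes an average factor of 1/2, so the typical kernel value shrinks like 2^-n and all off-diagonal entries crowd toward zero. Once they are smaller than the shot noise of the overlap estimate, the kernel matrix carries little usable information, which is why concentration limits scalability.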

The pursuit of robust quantum kernels, as detailed within, echoes a fundamental truth about complex systems: fragility isn’t a design flaw, but an inherent property. This work demonstrates spectral phase encoding’s resilience against noise, a characteristic born not from striving for absolute control, but from leveraging the dataset’s intrinsic structure. As Linus Torvalds observed, “Talk is cheap. Show me the code.” This paper doesn’t simply theorize about robustness; it demonstrates it through a specific encoding method. Kernel concentration, the tendency of kernel values to cluster around a single value as the feature space grows, becomes less a target for optimization and more a symptom of the system finding its natural equilibrium, adjusting to the inevitable imperfections inherent in any real-world implementation.

The Shape of Things to Come

The demonstrated resilience of spectral phase encoding is not a triumph of design, but a temporary reprieve. Long stability is the sign of a hidden disaster; this method merely shifts the point of eventual failure, concentrating vulnerabilities elsewhere in the quantum ecosystem. The paper correctly identifies datasets with exploitable spectral structure as fertile ground for this approach, but it avoids the more unsettling implication: such structure is rarely intrinsic to the data itself. It arises from the limitations of the encoding process, a prophecy of how information will inevitably be lost, or worse, misinterpreted.

Future work will not focus on optimizing this particular encoding (all optimizations are temporary bandages) but on understanding the inevitability of kernel concentration. The goal isn’t to build a noise-resistant kernel, but to build systems that anticipate their own decay. A fruitful avenue lies in exploring the relationship between spectral structure, data manifold curvature, and the types of errors that are systematically encouraged by specific encoding schemes.

The true measure of progress will not be increased accuracy, but a more honest accounting of uncertainty. Systems don’t fail; they evolve into unexpected shapes. The challenge isn’t to prevent this evolution, but to learn to read the resulting forms, and to design systems that are, at least, beautifully broken.

Original article: https://arxiv.org/pdf/2602.19644.pdf

Contact the author: https://www.linkedin.com/in/avetisyan/

2026-02-24 15:50