Author: Denis Avetisyan

Researchers are exploring how to better translate natural language questions into accurate database queries, achieving improved performance with a novel reasoning framework.

This paper introduces DSR-SQL, a system that combines adaptive context management with iterative SQL generation to enhance Text-to-SQL performance, particularly in zero-shot learning scenarios.

Despite advances in large language models, translating natural language into accurate SQL queries remains challenging, particularly with complex enterprise databases prone to schema ambiguity and contextual overload. This paper introduces ‘Text-to-SQL as Dual-State Reasoning: Integrating Adaptive Context and Progressive Generation’, a novel framework, DSR-SQL, that addresses these limitations by modeling Text-to-SQL as an interplay between dynamically refined contextual understanding and iterative SQL generation. DSR-SQL achieves competitive zero-shot performance on benchmark datasets by effectively managing database schemas and leveraging feedback to align generated SQL with user intent. Could this dual-state approach unlock more robust and adaptable Text-to-SQL systems capable of navigating increasingly complex data environments?

Decoding the Babel: From Language to Structured Data

Historically, extracting information from databases using natural language has proven remarkably difficult. Conventional methods, reliant on keyword matching or rigid grammatical rules, often fail to capture the subtleties of human language, leading to inaccurate or incomplete queries. This disconnect arises because natural language is inherently ambiguous and context-dependent, while databases demand precise and structured requests. Consequently, valuable data remains locked away, inaccessible to those who lack specialized query skills or the ability to navigate complex database schemas. The inability to effectively bridge this gap between human language and machine understanding represents a significant obstacle to democratizing data access and leveraging the full potential of stored information.

Simply converting natural language into database queries often fails because human language is inherently ambiguous and relational database structures are rigidly defined. A successful interface requires moving beyond lexical matching to genuinely understand what information is being requested – the user’s intent – and how that intent maps onto the specific organization of data within the database. This necessitates systems capable of semantic parsing, identifying the relationships between words and concepts, and then aligning those relationships with table names, column headings, and data types. Rather than treating a query as a simple translation exercise, the process must account for the underlying meaning and the logical structure of both the question and the data, effectively bridging the gap between human expression and machine comprehension.

Large Language Models (LLMs) demonstrate considerable potential in converting natural language questions into structured query language (SQL), yet achieving reliable performance on complex Text-to-SQL tasks necessitates further development. While LLMs excel at understanding language patterns, the reasoning required to accurately map linguistic intent to database schemas and logical operations proves challenging. Current models often struggle with questions involving multiple tables, complex conditions, or ambiguous phrasing, leading to inaccurate or incomplete SQL queries. Researchers are actively exploring enhancements such as improved schema linking, query decomposition techniques, and the integration of external knowledge to bolster the reasoning capabilities of LLMs and unlock their full potential in bridging the gap between human language and structured data access.

Dual-State Reasoning: A System’s Internal Dialogue

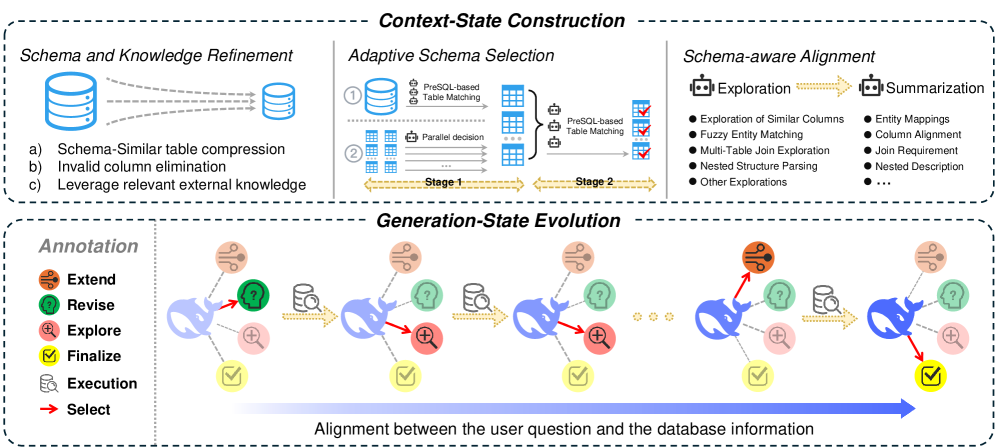

Dual-State Reasoning (DSR) departs from single-state query processing by explicitly partitioning the reasoning workflow into two distinct but interacting states: the Adaptive Context State and the Progressive Generation State. This two-state approach is inspired by observed processes in biological reasoning systems, where information gathering and refinement are handled as separate but interconnected stages. The Adaptive Context State dynamically manages the scope of relevant data, while the Progressive Generation State iteratively constructs and improves the final SQL query. This separation allows each state to be optimized on its own terms, and the feedback loops between data selection and query formulation make the overall reasoning process more efficient.

The Adaptive Context State within Dual-State Reasoning (DSR) operates by actively controlling which portions of the database schema are accessible during query construction. This is achieved through two primary mechanisms: Schema Selection, which identifies and prioritizes relevant tables and columns based on the initial query and problem context, and Schema Compression, which reduces the effective schema size by temporarily masking irrelevant elements. By dynamically adjusting database visibility, the Adaptive Context State reduces the search space for potential query solutions and focuses computational resources on the most pertinent information, thereby improving efficiency and accuracy.
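The two mechanisms above can be illustrated with a minimal sketch. It assumes a simple token-overlap heuristic for Schema Selection and plain column truncation for Schema Compression; the actual system presumably relies on a language model for both, and the names `Table`, `select_schema`, and `compress_schema` are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Table:
    name: str
    columns: list[str]


def select_schema(question: str, tables: list[Table]) -> list[Table]:
    """Keep tables whose name or column names share a token with the question."""
    tokens = set(question.lower().replace("?", " ").split())
    selected = []
    for table in tables:
        names = {table.name.lower(), *(c.lower() for c in table.columns)}
        # Split snake_case identifiers so "order_date" can match "date".
        parts = {part for name in names for part in name.split("_")}
        if tokens & parts:
            selected.append(table)
    return selected


def compress_schema(tables: list[Table], max_columns: int = 3) -> str:
    """Render a compact text view of the schema, truncating long column lists."""
    lines = []
    for table in tables:
        shown = table.columns[:max_columns]
        hidden = len(table.columns) - len(shown)
        suffix = f", ... (+{hidden} more)" if hidden > 0 else ""
        lines.append(f"{table.name}({', '.join(shown)}{suffix})")
    return "\n".join(lines)
```

Given a question such as "What is the total for each customer name?", a table with no overlapping identifiers is dropped from view, and the remaining tables are rendered in a shortened form suitable for a prompt.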

The Progressive Generation State operates by iteratively refining SQL queries based on runtime feedback. Initial queries are executed, and the resulting data, analyzed as Execution Feedback, informs subsequent refinements. This process utilizes two primary actions: Exploration, which broadens the search space by generating alternative query formulations, and Revise, which modifies the existing query based on observed performance and data characteristics. This incremental approach allows the system to move towards a solution without requiring a complete query rewrite at each step, enabling efficient optimization and adaptation to complex data environments. The system continues to iterate between execution, feedback analysis, and either Exploration or Revise until a satisfactory query is produced or a termination condition is met.
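The iteration described above can be sketched as a small control loop. This is an illustration under stated assumptions, not the paper's implementation: `propose` is a hypothetical stand-in for the language model call, SQLite stands in for the target database, and the rule that errors trigger Revise while empty results trigger Exploration is an assumption made for the example.

```python
import sqlite3
from typing import Callable, Optional

# Hypothetical stand-in for an LLM call:
# (question, previous_sql, feedback, action) -> next SQL string
Proposer = Callable[[str, Optional[str], Optional[str], str], str]


def progressive_generate(conn: sqlite3.Connection, question: str,
                         propose: Proposer, max_steps: int = 5):
    """Iterate propose -> execute -> feedback until rows come back or steps run out."""
    sql, feedback, action = None, None, "start"
    history = []
    for _ in range(max_steps):
        sql = propose(question, sql, feedback, action)
        try:
            rows = conn.execute(sql).fetchall()
        except sqlite3.Error as exc:
            # Assumption: an execution error triggers Revise (patch the same query).
            feedback, action = f"error: {exc}", "revise"
            history.append((sql, feedback))
            continue
        if rows:
            history.append((sql, "ok"))
            return sql, rows, history
        # Assumption: an empty result triggers Exploration (try another formulation).
        feedback, action = "empty result", "explore"
        history.append((sql, feedback))
    return None, [], history  # termination condition: step budget exhausted
```

Keeping the proposer as a plain callable makes the loop easy to test with a scripted sequence of queries before any model is attached.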

DSR-SQL in Action: The Dance of Schema and Query

DSR-SQL applies the DSR (Dual-State Reasoning) framework through two iterative loops designed to improve both understanding of the database schema and the construction of SQL queries. The Context-State Loop connects the natural language query to the database schema; it dynamically adjusts the visible schema based on the query’s context and initial alignment attempts. Complementing this, the Generation-State Loop handles the actual construction of the SQL query; it builds queries incrementally and uses feedback from database execution to refine them and correct errors, effectively operating as a cycle of proposal and evaluation.

Schema Alignment within the Context-State Loop functions by establishing linkages between entities and relationships expressed in natural language input and corresponding elements within the database schema – tables, columns, and relationships. This process involves identifying potential matches based on lexical similarity, semantic understanding, and database metadata. The alignment is not a one-to-one mapping; a single natural language term can correspond to multiple database elements, and vice-versa. The resulting aligned schema representation then serves to filter and prioritize relevant data for subsequent query generation, effectively narrowing the search space and improving the efficiency of the DSR-SQL system. The alignment process also handles ambiguity by providing probabilities or confidence scores for each potential mapping.
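A many-to-many alignment with confidence scores might look like the following sketch. It uses only lexical similarity from Python's standard `difflib` module as a stand-in; the semantic and metadata signals mentioned above are not modeled, and the function name `align` and the 0.6 threshold are assumptions chosen for illustration.

```python
from difflib import SequenceMatcher


def align(terms, schema_elements, threshold=0.6):
    """Map each question term to candidate schema elements with a score in [0, 1]."""
    alignment = {}
    for term in terms:
        scored = []
        for element in schema_elements:
            # Compare against the column part of a "table.column" identifier.
            name = element.split(".")[-1].replace("_", " ")
            score = SequenceMatcher(None, term.lower(), name.lower()).ratio()
            if score >= threshold:
                scored.append((element, round(score, 2)))
        # Highest-confidence candidates first; ties keep their input order.
        alignment[term] = sorted(scored, key=lambda pair: pair[1], reverse=True)
    return alignment
```

A term such as "name" can map to both `customers.name` and `products.name`, which is exactly the kind of ambiguity the scores are meant to expose to the later generation step.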

The Generation-State Loop in DSR-SQL utilizes database execution feedback to iteratively improve SQL query construction. Following initial query generation, the database response – success, error messages, or unexpected results – is analyzed to identify areas for refinement. This feedback triggers either an Exploration action, where the model generates alternative queries based on the initial attempt, or a Revise action, which directly modifies the existing query. Error messages, for example, prompt the model to adjust syntax or table/column references. Successful, yet incomplete, results guide further query expansion to retrieve additional requested information. This cycle of query generation, execution, and feedback continues until a satisfactory result is achieved, enabling the model to self-correct and improve its SQL generation capabilities.
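How raw database responses become usable feedback can be sketched as a small classifier. This example assumes SQLite and its error message wording; the category names (`bad_reference`, `syntax`, `empty`, `ok`) are hypothetical labels, not terms from the paper.

```python
import sqlite3


def execution_feedback(conn: sqlite3.Connection, sql: str) -> dict:
    """Run a query and summarize the outcome in a form a generator could act on."""
    try:
        rows = conn.execute(sql).fetchall()
    except sqlite3.Error as exc:
        message = str(exc)
        if "no such column" in message or "no such table" in message:
            kind = "bad_reference"  # fix table/column names
        elif "syntax error" in message:
            kind = "syntax"  # fix the SQL syntax
        else:
            kind = "other_error"
        return {"kind": kind, "detail": message, "rows": []}
    # A query that runs but returns nothing may still need another formulation.
    kind = "ok" if rows else "empty"
    return {"kind": kind, "detail": f"{len(rows)} row(s)", "rows": rows}
```

Separating the classification from the decision about what to do next keeps the database-specific message parsing in one place.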

Benchmarking Reality: DSR-SQL’s Performance Report

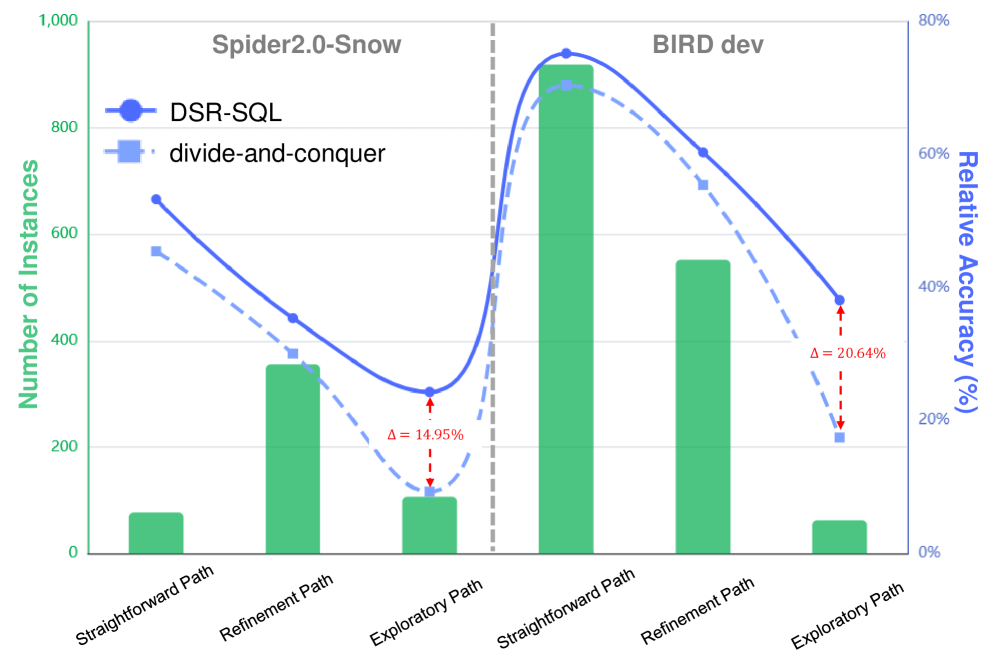

DSR-SQL’s performance was evaluated using the Spider 2.0 and BIRD datasets, which are established benchmarks for assessing the capabilities of SQL query generation models. Spider 2.0 focuses on complex, cross-domain queries against databases with intricate schemas, while BIRD emphasizes real-world database schemas and analytical queries. Testing on these datasets demonstrates DSR-SQL’s ability to process and correctly interpret complex database structures and formulate accurate SQL queries, addressing a key challenge in natural language to SQL translation systems. Performance metrics derived from these benchmarks provide a quantitative assessment of DSR-SQL’s effectiveness in handling both the structural complexity of schemas and the logical complexity of queries.

Evaluations using both DeepSeek and Qwen language models show DSR-SQL outperforming conventional methods, especially on complex reasoning challenges. Specifically, DSR-SQL achieved an execution accuracy of 35.28% on the Spider 2.0-Snow benchmark, an improvement of 6.03 percentage points over the ReFORCE method. That is a substantial gain in correctly interpreting and executing SQL queries on a demanding dataset.
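For readers unfamiliar with the metric, execution accuracy is the share of questions for which the predicted query returns the same result as the reference query. The sketch below is a simplified illustration using SQLite; the official benchmark evaluation scripts have their own comparison rules, and the choice here to compare rows as unordered multisets is an assumption.

```python
import sqlite3
from collections import Counter


def execution_accuracy(conn: sqlite3.Connection, pairs) -> float:
    """pairs: list of (predicted_sql, gold_sql); returns the fraction that match."""
    correct = 0
    for predicted, gold in pairs:
        gold_rows = Counter(conn.execute(gold).fetchall())
        try:
            predicted_rows = Counter(conn.execute(predicted).fetchall())
        except sqlite3.Error:
            continue  # a query that fails to execute counts as incorrect
        if predicted_rows == gold_rows:
            correct += 1
    return correct / len(pairs) if pairs else 0.0
```

Because the comparison is on results rather than query text, two differently written queries count as equivalent whenever they return the same rows.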

DSR-SQL demonstrates strong performance on the BIRD benchmark, achieving an execution accuracy of 68.32% when paired with the DS-V3.1 model and 65.58% with the Qwen3-30B model. These results are competitive with, and in some cases exceed, the performance of existing methods that rely on post-training or in-context learning techniques. Furthermore, DSR-SQL exhibits a notably improved recall rate of 91.13% in its adaptive schema selection process, a significant increase over the 62.59% recall achieved by the ReFORCE method.
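The recall figure measures how much of the schema actually needed by the reference query survives the selection step. The article does not specify the exact definition used, so the following is only one plausible reading: the fraction of gold columns present in the selected set for a single example. The function name `schema_recall` is hypothetical.

```python
def schema_recall(selected, gold) -> float:
    """Fraction of gold schema elements that appear in the selected set."""
    gold_set = set(gold)
    if not gold_set:
        return 1.0  # nothing was required, so nothing was missed
    return len(gold_set & set(selected)) / len(gold_set)
```

High recall matters here because a column pruned during schema selection cannot be recovered by the generation step unless the context is widened again.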

Beyond Translation: Towards Intelligent Data Interaction

DSR-SQL marks a notable advancement in the field of Text-to-SQL, offering a system designed for both accuracy and efficiency in translating natural language queries into database code. Unlike previous approaches, it employs a dynamic reasoning loop where the system iteratively refines its understanding of the question and the database schema. This adaptive process allows DSR-SQL to handle complex queries and ambiguous language with greater resilience, reducing the need for extensive training data or meticulously crafted prompts. By intelligently selecting relevant schema information and progressively building the SQL query, the system minimizes computational cost and improves response times. Ultimately, DSR-SQL represents a move toward more accessible and user-friendly data interaction, potentially empowering individuals without specialized database knowledge to effortlessly retrieve information from structured datasets.

The core tenets of adaptive context and progressive generation, demonstrated in DSR-SQL, resonate far beyond the realm of database querying. These principles mirror cognitive processes observed in biological systems, where understanding emerges not from a single, exhaustive analysis, but from iterative refinement and focused attention. By dynamically adjusting the scope of relevant information and building solutions incrementally, artificial intelligence systems can move away from computationally expensive, ‘brute force’ approaches. This bio-inspired methodology promises more efficient and robust AI models applicable to diverse challenges, including natural language understanding, robotic navigation, and complex problem-solving – suggesting a future where AI systems learn and adapt with a fluidity more akin to human intelligence.

The trajectory of Text-to-SQL systems hinges on continued advancements in reasoning capabilities and large language model (LLM) synergy. Current research emphasizes refining the iterative reasoning loops within these systems, aiming to improve the accuracy and efficiency with which complex queries are translated into database commands. Exploration of novel LLM integrations, beyond simply scaling model size, promises to unlock more nuanced understanding of natural language and database schemas. This includes investigating methods for LLMs to actively participate in the reasoning process – not just generating SQL, but also verifying its logical correctness and suggesting refinements. Ultimately, these combined efforts aim to move beyond simple keyword matching toward genuinely intelligent data interaction, where systems can handle ambiguity, infer user intent, and proactively assist in data discovery and analysis.

The pursuit of robust Text-to-SQL translation, as demonstrated by DSR-SQL, inherently involves a systematic dismantling of conventional approaches. This framework doesn’t simply accept the limitations of initial schema alignment or context management; instead, it actively probes those boundaries with its iterative generation and feedback loop. It echoes a sentiment usually attributed to John Maynard Keynes: “The difficulty lies not so much in developing new ideas as in escaping from old ones.” DSR-SQL challenges the established paradigm of single-pass SQL generation, showing that by deconstructing the initial assumptions about context and progressively refining the output, a system can achieve improved zero-shot performance. The study supports breaking down complex problems into manageable, iterative steps, a strategy well aligned with that call to escape old ideas.

What Lies Beyond?

The DSR-SQL framework, with its emphasis on iterative refinement and dynamic context, represents a predictable, yet necessary, step in the ongoing effort to bridge the gap between natural language and structured query. The system’s success in zero-shot learning, while notable, should not be mistaken for genuine understanding. It is, rather, a clever exploitation of statistical patterns: a demonstration that sufficient complexity can simulate intelligence, even without it. Every exploit starts with a question, not with intent, and the core question remains: can a machine truly ‘know’ what it is querying, or merely mimic the form of knowledge?

Future work will inevitably focus on expanding the scope of DSR-SQL’s schema alignment capabilities and enhancing its resilience to ambiguous or poorly formed natural language inputs. However, a more fruitful avenue of investigation might lie in exploring the limits of iterative refinement itself. At what point does each additional feedback cycle yield diminishing returns? Is there an inherent ceiling on the accuracy achievable through purely statistical methods, or can genuinely novel insights emerge from the interplay between the model and its environment?

Ultimately, the true test of this line of inquiry will not be its ability to generate correct SQL queries, but its capacity to identify, and articulate, the questions that should be asked. Until a system can independently formulate meaningful queries, it remains a sophisticated tool, not an intelligent agent.

Original article: https://arxiv.org/pdf/2511.21402.pdf

Contact the author: https://www.linkedin.com/in/avetisyan/

2025-11-29 11:30