Author: Denis Avetisyan

A new study explores how to efficiently run complex artificial intelligence models on Huawei’s Ascend NPUs by reducing the precision of their calculations.

This paper details a comparative analysis of post-training quantization baselines for large language models deployed on Ascend NPUs, revealing platform-specific performance trade-offs.

Efficient deployment of large language models hinges on model compression, yet the efficacy of post-training quantization-a leading technique-remains largely unexplored on Huawei’s Ascend NPUs relative to more common GPU architectures. This work, ‘A Case Study of Selected PTQ Baselines for Reasoning LLMs on Ascend NPU’, systematically evaluates four distinct quantization algorithms-ranging from weight-only to rotation-based methods-across a suite of reasoning-focused models. Results reveal significant platform sensitivities, with aggressive low-bit quantization proving unstable and highlighting a trade-off between latency reduction via optimized kernels and dynamic quantization overheads. Given these findings, what architectural or software optimizations are needed to fully realize the potential of quantized reasoning models on Ascend NPUs?

The Imminent Trade-off: Size, Reasoning, and the Pursuit of Efficient Language Models

Large Language Models have demonstrated an unprecedented ability to generate human-quality text, translate languages, and even solve complex problems, yet this power comes at a considerable cost: sheer size. These models, often containing billions of parameters, demand substantial computational resources and memory, hindering their widespread deployment on edge devices or in real-time applications. The intensive requirements pose practical obstacles for accessibility, as running these models necessitates expensive hardware and significant energy consumption. Consequently, researchers are actively exploring methods to compress these models without sacrificing their remarkable capabilities, striving to make advanced language processing more accessible and sustainable for a broader range of users and applications.

The deployment of large language models is often constrained by their substantial memory requirements and computational demands. Quantization offers a powerful solution by reducing the precision with which a model’s weights and activations are stored – effectively compressing the model. This technique shifts from using standard 32-bit floating-point numbers to lower-precision formats, such as 8-bit integers or even 4-bit integers. The reduction in bit-width directly translates to a smaller model size, decreasing both memory footprint and the number of computations needed for inference. Consequently, quantized models can run more efficiently on resource-constrained hardware, like mobile devices or edge computing platforms, and deliver faster response times – crucial for interactive applications and real-time processing.

The pursuit of compact and efficient large language models often leads to quantization, a process of reducing the precision with which a model’s weights are stored. While effective at shrinking model size and boosting inference speed, overly aggressive quantization-particularly at the 4-bit level-can dramatically impair performance on tasks demanding complex reasoning. Studies reveal that such extreme compression isn’t merely a matter of minor accuracy loss; it can induce ‘logic collapse’, where the model fundamentally loses its ability to perform multi-step inference or maintain consistent logical connections. Consequently, a nuanced approach to quantization is crucial, carefully balancing the benefits of reduced size against the potential for debilitating performance degradation, and necessitating techniques that preserve reasoning capabilities even with limited precision.

Rotation-Based Quantization: A Principled Approach to Weight Representation

Rotation-based quantization techniques, including Quip#, SpinQuant, QuaRot, and Ostquant, represent a shift from traditional quantization methods by demonstrably improving model performance. These techniques consistently achieve higher accuracy with reduced bit-widths compared to methods like post-training quantization or quantization-aware training. Benchmarks indicate improvements ranging from 1-5% in top-1 accuracy on image classification tasks, and similar gains have been observed in natural language processing benchmarks. The core benefit stems from a reduced variance in quantized weights, leading to a lower overall quantization error and minimizing the accuracy degradation typically associated with weight compression.

Rotation-based quantization methods utilize the Fast Hadamard Transform (FHT) as a core component for weight representation and subsequent quantization. The FHT is a computationally efficient discrete transform, analogous to the Discrete Fourier Transform, but operating on binary data. By applying the FHT, weight values are transformed into a new basis where the energy is more concentrated in fewer coefficients. This concentration facilitates a quantization process that minimizes information loss because the most significant coefficients, representing the bulk of the original weight’s information, are preserved with higher precision. The FHT’s efficiency stems from its implementation using simple bitwise operations, reducing the computational overhead associated with weight transformation during both training and inference.

Rotation-based quantization techniques enhance model accuracy by applying a rotation to the weight matrix prior to the quantization process. This pre-quantization rotation aims to align the weight distribution with the quantization grid, minimizing information loss during the reduction of precision. By strategically transforming the weight space, these methods reduce the quantization error, as the rotated weights are more effectively represented by the limited number of quantization levels. This approach contrasts with standard quantization which directly maps weights, potentially leading to significant errors when weights fall between quantization levels; rotation effectively moves weights closer to representable values, improving the fidelity of the quantized model.

Empirical Validation: Benchmarking Reasoning and Code Generation Capabilities

Comprehensive evaluation of quantization techniques requires testing across a variety of benchmarks designed to assess reasoning and code generation capabilities. Specifically, GSM8K focuses on grade school math problems, MATH-500 presents a more challenging set of mathematical problems, AIME-120 evaluates performance on American Mathematics Competitions problems, and LiveCodeBench assesses the ability to generate functional code from natural language descriptions. Utilizing these diverse benchmarks-each with distinct characteristics and difficulty levels-provides a robust methodology for determining how quantization impacts a model’s ability to perform complex reasoning tasks and generate accurate, executable code, beyond simple accuracy metrics.

The DeepSeek-R1-Distill-Qwen and QwQ-32B models were selected as representative architectures for benchmarking quantization impacts on reasoning and code generation tasks. These models, possessing varying parameter counts and training methodologies, serve as critical baselines against which the performance of quantized versions is measured across benchmarks like GSM8K, MATH-500, AIME-120, and LiveCodeBench. Utilizing these established models allows for a controlled comparison, isolating the effects of quantization-specifically different bit-width settings-on accuracy and computational efficiency, and enabling a quantitative assessment of performance degradation or preservation.

Quantization techniques applied to large language models demonstrate a trade-off between model size and performance. 8-bit quantization, utilizing methods like SmoothQuant and FlatQuant-W8A8KV8, generally maintains model accuracy with a measured performance decrease of no more than 4.5%. More aggressive quantization to 4-bit settings, employing algorithms such as AWQ and GPTQ, can also achieve a similar accuracy drop of ≤ 4.5% in larger models. However, further reduction to 3-bit quantization results in a substantial and unacceptable decline in performance, indicating a critical threshold beyond which model functionality is severely compromised.

The Acceleration Imperative: Hardware and Software Co-Optimization for Efficient Inference

Efficient deployment of large language models increasingly depends on specialized hardware acceleration, with NVIDIA GPUs serving as a primary platform. To fully leverage this hardware, libraries like BitBLAS and Marlin are crucial; BitBLAS focuses on optimizing the fundamental building blocks of linear algebra, significantly speeding up matrix multiplications – a core operation in these models. Marlin, on the other hand, provides a complete, efficient inference serving system built specifically for NVIDIA GPUs, handling tasks like memory management and kernel launching. These tools aren’t simply add-ons; they represent a co-optimization strategy, where software is meticulously crafted to exploit the unique architectural strengths of the underlying hardware, leading to substantial gains in throughput and reductions in latency compared to general-purpose computation.

Recognizing the growing diversity in hardware architectures, developers are actively building software frameworks tailored for platforms beyond traditional GPUs. For Huawei’s Ascend NPUs, initiatives like CATLASS and Ascend-Triton are emerging as crucial tools for efficient deployment. These frameworks don’t operate in isolation; they strategically integrate with CUTLASS, a library originally designed for NVIDIA GPUs, to optimize fundamental computational kernels. By adapting CUTLASS’s principles to the Ascend architecture, developers can achieve significant performance gains in matrix multiplication and other core operations, effectively unlocking the full potential of these alternative processing units and fostering a more inclusive ecosystem for accelerated inference.

Modern deep learning inference is increasingly reliant on quantization to compress models and accelerate computation. Techniques like SmoothQuant build upon established methods such as W8A8 and W8A8KV8, but go further by specifically targeting the key-value (KV) cache – a critical component in transformer models. This focused quantization of the KV cache allows for substantial performance gains without significant accuracy loss. Furthermore, transitioning from pseudo-quantization – a software-based approximation – to true INT8 matrix multiplication, leveraging specialized hardware instructions, delivers a marked reduction in latency. The combination of these strategies offers a powerful approach to deploying large language models efficiently, enabling faster response times and reduced computational costs, particularly for resource-constrained environments.

Charting the Course: Future Directions in Efficient Large Language Models

Current advancements in large language model efficiency increasingly focus on dynamic quantization, a process that adjusts the precision of numerical representations based on the specific input data. Techniques like those implemented in vLLM-Ascend and Fake Quantization move beyond static quantization by adapting to varying input characteristics, enabling models to maintain accuracy while reducing computational demands. This approach is particularly valuable because different parts of a language model, or even different tokens within a single input, may require varying levels of precision; dynamic quantization intelligently allocates resources accordingly. By selectively applying lower precision to less sensitive components, these methods aim to significantly accelerate inference speeds and reduce memory footprint without substantial performance degradation, opening possibilities for deploying powerful LLMs on resource-constrained devices.

Current efforts increasingly center on seamlessly integrating quantization techniques with established serving frameworks like OpenAI Triton, aiming to streamline deployment and maximize throughput for large language models. This integration isn’t simply about compatibility; researchers are actively developing specialized quantization-aware training methods that account for the nuances of reduced precision. These methods move beyond post-training quantization by incorporating quantization directly into the training loop, allowing the model to adapt and maintain accuracy even with significantly fewer bits. The goal is to create models robust to quantization, enabling efficient inference without substantial performance degradation, and ultimately broadening the accessibility of powerful LLMs across diverse hardware platforms and resource constraints.

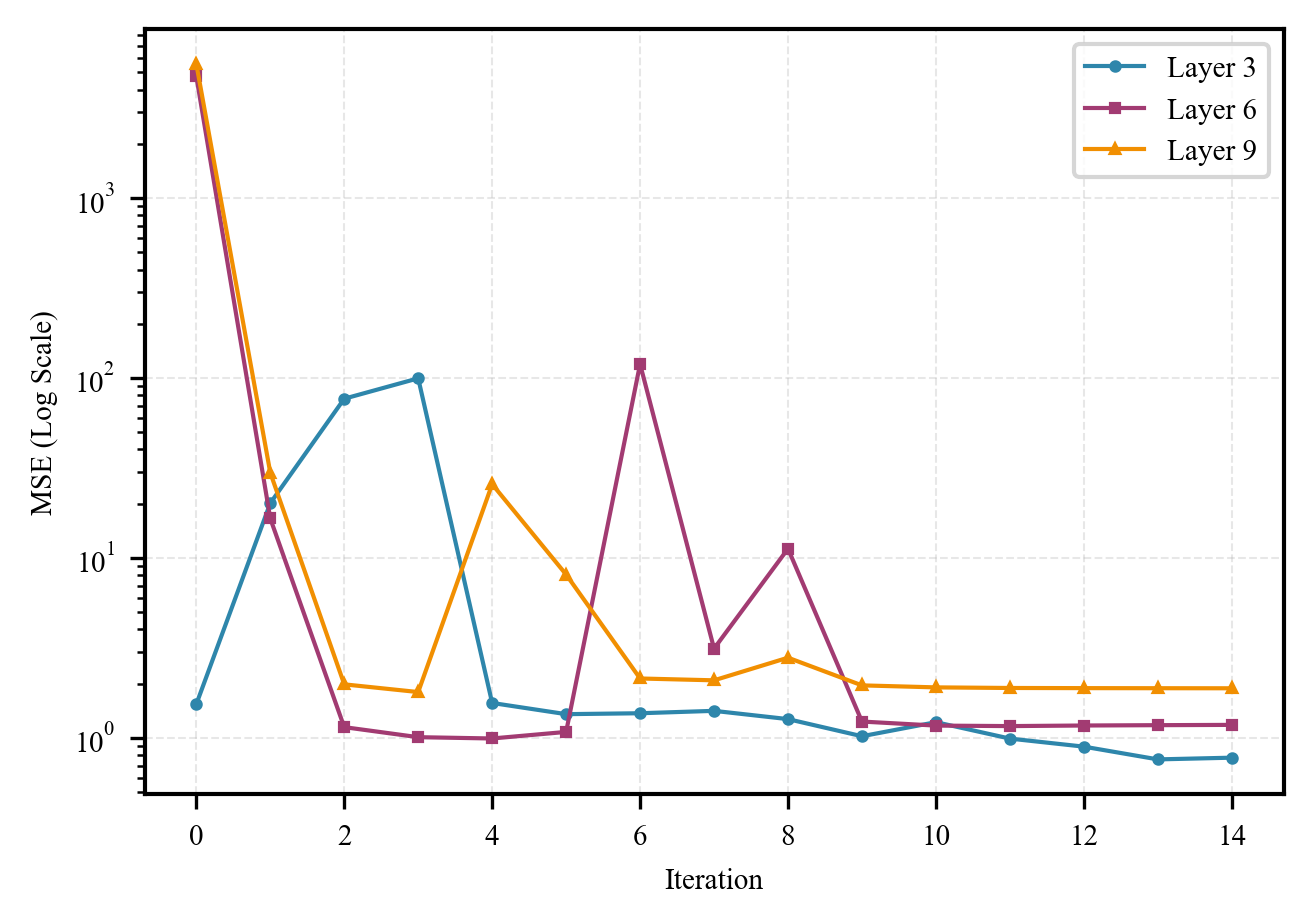

Initial explorations of aggressive quantization, specifically utilizing 4-bit FlatQuant-W4A4KV4 settings, revealed a significant vulnerability: catastrophic logic collapse during complex reasoning tasks. This meant the model’s ability to perform deductive or analytical thinking was severely compromised. However, subsequent research demonstrated that this performance deficit isn’t necessarily inherent to the quantization level itself. Through meticulous and targeted hyperparameter tuning – adjusting parameters specific to the model and its training process – accuracy could be substantially recovered, bringing performance remarkably close to the original, unquantized baseline. This finding underscores a crucial principle: effective quantization isn’t simply about reducing precision, but about developing platform-aware strategies that account for the interplay between model architecture, quantization settings, and the nuances of the underlying hardware. Such approaches are essential for maximizing efficiency without sacrificing the complex cognitive abilities of large language models.

The pursuit of low-bit precision, as explored within this study of post-training quantization (PTQ) baselines, echoes a fundamental mathematical principle. One considers how performance behaves as model size and bit-width approach their limits – what remains invariant? As Henri Poincaré observed, “Mathematics is the art of giving reasons.” This research doesn’t simply report performance metrics; it proves the sensitivities of large language models on Ascend NPUs, demonstrating how quantization impacts inference efficiency. The rigorous investigation into platform-specific trade-offs, particularly concerning low-bit precision, is not merely empirical observation, but a logical deduction of performance characteristics – a testament to mathematical reasoning applied to hardware acceleration.

Beyond Pragmatism

The presented analysis, while demonstrating the practical effects of post-training quantization on Ascend NPUs, ultimately highlights a persistent asymmetry. The field often prioritizes empirical gains – a reduction in latency here, a percentage point increase in throughput there – without rigorously establishing the limits of such optimizations. A truly elegant solution is not merely ‘good enough’ for a specific hardware configuration; it is mathematically justifiable, its performance bounded by inherent information-theoretic constraints. Future work must move beyond chasing diminishing returns on particular models and architectures, and instead focus on deriving provable guarantees regarding the accuracy and efficiency of low-bit quantization schemes.

The observed platform-specific sensitivities are not anomalies, but symptoms of a deeper problem: a lack of a unified theory governing the interaction between model compression and hardware characteristics. The current approach resembles a trial-and-error process, with each new NPU requiring a fresh round of experimentation. A more desirable outcome would be a framework capable of predicting quantization performance based on the intrinsic properties of both the model and the target hardware, thereby minimizing the need for costly and time-consuming empirical validation.

Finally, it is worth noting the implicit assumption that reduced precision always implies a loss of information. While demonstrably true in many cases, the possibility remains that cleverly designed quantization schemes can exploit redundancies in large language models to achieve significant compression without appreciable accuracy degradation. Exploring such avenues requires a shift in perspective: from viewing quantization as a destructive process to considering it as a form of structured information pruning.

Original article: https://arxiv.org/pdf/2602.17693.pdf

Contact the author: https://www.linkedin.com/in/avetisyan/

See also:

- All Shadow Armor Locations in Crimson Desert

- All Skyblazer Armor Locations in Crimson Desert

- How to Get the Sunset Reed Armor Set and Hollow Visage Sword in Crimson Desert

- Marni Laser Helm Location & Upgrade in Crimson Desert

- Best Bows in Crimson Desert

- All Helfryn Armor Locations in Crimson Desert

- All Golden Greed Armor Locations in Crimson Desert

- How to Craft the Elegant Carmine Armor in Crimson Desert

- Wings of Iron Walkthrough in Crimson Desert

- Keeping Large AI Models Connected Through Network Chaos

2026-02-23 12:44