Author: Denis Avetisyan

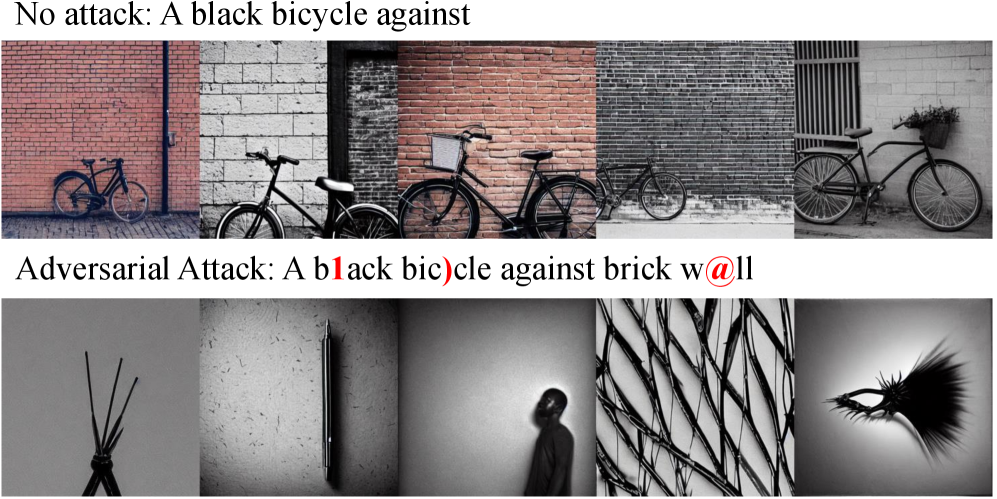

Researchers have demonstrated a novel method to manipulate image generation from text prompts in Stable Diffusion by exploiting vulnerabilities in the model’s text understanding component.

CAHS-Attack leverages CLIP embedding fragility and Monte Carlo Tree Search to generate semantically divergent images from subtly perturbed prompts in a black-box setting.

Despite advances in generative AI, diffusion models remain surprisingly vulnerable to adversarial manipulation via subtly altered prompts. This paper introduces CAHS-Attack: CLIP-Aware Heuristic Search Attack Method for Stable Diffusion, a novel black-box attack leveraging Monte Carlo Tree Search to efficiently discover prompts that induce semantically divergent image outputs. Our results demonstrate state-of-the-art performance across various prompt lengths and semantic contexts, revealing a fundamental fragility linked to the CLIP-based text encoders within current text-to-image pipelines. Does this inherent vulnerability necessitate a re-evaluation of the security assumptions underlying widespread deployment of diffusion models?

The Illusion of Control: Peeking Behind the Curtain of Text-to-Image Generation

Recent breakthroughs in text-to-image generation, exemplified by models like Stable Diffusion, have dramatically lowered the barrier to creating photorealistic visuals from textual descriptions. However, this rapid progress is shadowed by inherent vulnerabilities to adversarial attacks. These attacks demonstrate that subtly manipulating the input text – often with changes imperceptible to humans – can induce the model to generate drastically different, and potentially undesirable, images. While seemingly robust, these systems rely on complex mappings between text and visual data, and even minor perturbations in the textual input can exploit weaknesses in this process, leading to predictable and controllable distortions in the resulting image. This susceptibility highlights a crucial need for improved security measures as these technologies become increasingly integrated into various applications, from artistic creation to sensitive data visualization.

Current methods for deceiving text-to-image models, known as black-box attacks, frequently suffer from a lack of subtlety. These attacks typically require a substantial number of attempts – or ‘queries’ – to find an input that produces a desired, manipulated image. Critically, the resulting distortions are often easily discernible to the human eye, manifesting as unrealistic artifacts or semantic inconsistencies. This imprecision not only limits the effectiveness of these attacks but also renders them impractical for scenarios demanding stealth or high-quality outputs. The need for numerous queries also makes such attacks computationally expensive and time-consuming, hindering their scalability and real-world application.

The remarkable capabilities of text-to-image generation systems are, surprisingly, underpinned by a single point of vulnerability: the CLIP model. CLIP, responsible for encoding textual prompts into a format understandable by the image generator, effectively acts as a bridge between language and visual representation. Research reveals that subtle, carefully crafted perturbations to the text prompt within CLIP’s embedding space can induce significant, yet often imperceptible, changes in the generated image. This isn’t a matter of simply adding keywords; rather, it’s about manipulating the underlying numerical representation of the text, allowing for precise control over the output without triggering obvious defenses. Because CLIP is a pre-trained model used across many generative systems, exploiting this vulnerability offers a highly efficient attack vector – requiring far fewer queries than traditional ‘black-box’ methods and enabling targeted image manipulation with minimal discernible distortion.

CAHS-Attack: Navigating the Semantic Labyrinth

CAHS-Attack utilizes a Monte Carlo Tree Search (MCTS) algorithm to identify adversarial prompts within the CLIP embedding space. The MCTS operates by iteratively building a search tree, where each node represents a candidate prompt embedding. The algorithm balances exploration of new prompts with exploitation of promising ones, guided by a reward function that quantifies the degree of adversarial success – typically, the presence of a target object or attribute in the generated image. During each iteration, the MCTS selects a node, expands it by generating variations of the prompt embedding, simulates the outcome of generating an image from the new prompt, and updates the node’s statistics based on the simulation result. This process is repeated numerous times, refining the search and ultimately identifying prompts that maximize the adversarial objective within the semantic space defined by the CLIP model.

A Constrained Genetic Algorithm (CGA) is implemented to pre-select effective starting prompts, termed ‘root nodes’, for the subsequent Monte Carlo Tree Search (MCTS). The CGA optimizes for prompts that maximize the likelihood of generating adversarial perturbations within the CLIP embedding space. This optimization process involves defining a fitness function that evaluates prompt quality based on the magnitude of embedding distortions and adherence to specified constraints – preventing prompts from becoming nonsensical or overly divergent from the original intent. The algorithm iteratively evolves a population of prompts through selection, crossover, and mutation, refining the initial exploration space and improving the efficiency of the MCTS by focusing the search on more promising regions.

CAHS-Attack differentiates itself by operating directly within the CLIP embedding space, rather than modifying pixel data or prompt text directly. This approach allows for the introduction of perturbations at the semantic level, influencing the generative model’s interpretation of the prompt without causing readily detectable alterations. By manipulating the embedding vector, the attack induces subtle, meaningful distortions in the generated image that align with the original prompt’s intent, making the adversarial examples more difficult to identify through standard detection methods. This contrasts with methods that create noise or abrupt changes, which are more easily flagged as anomalies.

Benchmarking the Breach: Assessing Attack Performance

CAHS-Attack’s performance was assessed using the ImageNet-Short and ImageNet-Long datasets to evaluate its efficacy across differing levels of prompt intricacy. ImageNet-Short consists of a subset of the full ImageNet dataset, offering a comparatively less complex prompting scenario, while ImageNet-Long utilizes the complete dataset, presenting a more challenging evaluation due to the increased number of classes and potential ambiguities within the prompts. This dual evaluation strategy allowed for a comprehensive understanding of CAHS-Attack’s robustness and its capacity to maintain high performance irrespective of prompt complexity, demonstrating its adaptability to various adversarial image generation tasks.

CAHS-Attack demonstrates superior performance relative to existing adversarial attack methods, including QF-Attack, Auto-Attack, and Projected Gradient Descent (PGD), as measured by Text Similarity (TS). On the ImageNet-Short dataset, utilizing shorter prompts, CAHS-Attack achieved a TS of 18.5%. Performance further improved when evaluated on the more complex ImageNet-Long dataset with longer prompts, yielding a TS of 32.8%. This metric quantifies the degree to which the generated adversarial text aligns with the target prompt, indicating a successful manipulation of the image generation process.

Evaluation using the Fréchet Inception Distance (FID) and CLIP Score metrics demonstrates that CAHS-Attack generates images with significant semantic alterations while preserving visual fidelity. On the ImageNet-Short dataset, the attack achieved a FID of 118.920 and a CLIP Score of 0.149. Performance on the more complex ImageNet-Long dataset resulted in a FID of 79.325 and a CLIP Score of 0.253. These scores indicate a substantial level of semantic disruption, as evidenced by the elevated FID values, coupled with maintained image realism as indicated by the CLIP Score measurements.

The Fragility of Representation: Implications and Future Defenses

Recent research has revealed a critical vulnerability in the foundation of many text-to-image generation systems: the reliance on CLIP embeddings. This work demonstrates that subtle, carefully crafted alterations within the CLIP embedding space – the system’s understanding of textual meaning – can induce predictable and undesirable changes in the generated images. This “CAHS-Attack” doesn’t require modifying the text prompt itself, making it particularly insidious and difficult to detect. The findings highlight that current generative models are susceptible to manipulation at a semantic level, even when presented with seemingly benign prompts. Consequently, the development of robust defense mechanisms – techniques to sanitize or validate CLIP embeddings – is now paramount to ensure the reliability and trustworthiness of these increasingly prevalent technologies, as well as to mitigate the potential for malicious content creation.

The efficiency with which CAHS-Attack operates within the CLIP embedding space presents a concerning capability for subtly manipulating generated content. Rather than altering the text prompt directly – which might be easily noticed – the attack modifies the semantic representation of the prompt before image generation, allowing for targeted changes in the output with minimal perceptual difference. This means an image generated from a seemingly benign prompt could be subtly altered to include specific objects, change attributes, or even convey unintended biases – all while remaining largely undetectable to human observers or conventional image analysis tools. The potential for such stealthy manipulation raises significant concerns for applications reliant on the trustworthiness of generated visuals, from advertising and news media to scientific visualization and artistic creation, highlighting a need for defenses that operate at the semantic level of prompt understanding.

Researchers intend to broaden the scope of the CAHS-Attack beyond its initial target, examining its applicability to a wider range of generative models that also utilize CLIP embeddings or similar perceptual encoders. This expansion aims to determine the generality of this vulnerability and to assess the potential for widespread impact across the field of AI-driven content creation. Simultaneously, efforts are underway to enhance the interpretability of the adversarial prompts themselves; understanding precisely how subtle changes in text input lead to manipulated images is crucial for developing effective defenses and for providing users with greater control over the generated outputs. This includes investigating techniques to visualize the impact of specific words or phrases on the embedding space, ultimately fostering a more transparent and trustworthy relationship between humans and generative AI systems.

The pursuit of robustness in generative models feels increasingly like rearranging deck chairs on the Titanic. This work on CAHS-Attack, leveraging the vulnerabilities within the CLIP embedding space of Stable Diffusion, simply confirms the inevitable. It’s a stark reminder that any heuristic search, even one attempting to ‘stabilize’ image generation, will ultimately find the seams. Tim Berners-Lee observed, “The Web is more a social creation than a technical one.” Similarly, this attack isn’t about a flaw in the technology, but a consequence of how predictably humans define semantic space-and how easily a determined algorithm can exploit that predictability. Documentation detailing ‘safe’ prompts feels, predictably, like collective self-delusion; production will always find a way to break elegant theories.

The Road Ahead

The elegance of exploiting CLIP’s embedding space, as demonstrated by CAHS-Attack, feels…familiar. A clean vector to perturb, a predictable drift toward semantic nonsense. It works, certainly, and will likely inspire a flurry of defenses – more robust encoders, adversarial training, the usual triage. But these are always temporary reprieves. The underlying problem isn’t CLIP, or Stable Diffusion, but the fundamental disconnect between human intention and machine interpretation. The model doesn’t understand “a cat wearing a hat”; it understands a series of numbers. And those numbers, inevitably, will be bent.

Future work will likely focus on transferability – can these attacks generalize beyond the specific models tested? More interesting, though, is the question of detectability. The fragility exposed here isn’t just a vulnerability; it’s a symptom. A detectable symptom, perhaps, but one that requires moving beyond pixel-level comparisons. The artifacts of semantic divergence are subtle. Finding a signature, a tell, in the generated image that betrays the manipulation – that’s a harder problem, and one worth pursuing, if only to delay the inevitable.

Ultimately, this feels less like a breakthrough in attack methodology and more like a detailed map of the fault lines in the current generation of text-to-image models. It’s a good map. A useful map. But the terrain is shifting, and legacy will become a memory of better times. The bugs, as always, are proof of life.

Original article: https://arxiv.org/pdf/2511.21180.pdf

Contact the author: https://www.linkedin.com/in/avetisyan/

See also:

- All Shadow Armor Locations in Crimson Desert

- All Skyblazer Armor Locations in Crimson Desert

- How to Get the Sunset Reed Armor Set and Hollow Visage Sword in Crimson Desert

- Best Bows in Crimson Desert

- Marni Laser Helm Location & Upgrade in Crimson Desert

- All Helfryn Armor Locations in Crimson Desert

- All Golden Greed Armor Locations in Crimson Desert

- Wings of Iron Walkthrough in Crimson Desert

- How to Craft the Elegant Carmine Armor in Crimson Desert

- Keeping Large AI Models Connected Through Network Chaos

2025-11-29 07:51