Author: Denis Avetisyan

A new analysis rigorously demonstrates the impossibility of building a consistent quantum field theory incorporating virtual particles that travel faster than light.

This work proves that covariant virtual tachyons lead to violations of Lorentz invariance, challenging models relying on superluminal propagation.

The longstanding theoretical challenge of accommodating superluminal particles is complicated by potential violations of fundamental principles like causality and Lorentz invariance. This paper, ‘Is a covariant virtual tachyon viable?’, investigates whether a consistent quantum field theory can be constructed using purely virtual tachyons within the fakeon framework. We demonstrate that such a formulation is impossible: it encounters fatal obstructions stemming from non-invariant commutation relations and a fundamental incompatibility between tachyon propagators, ultimately leading to Lorentz invariance violation and challenges to the equivalence principle. Given these findings, can alternative theoretical frameworks successfully incorporate or preclude the existence of superluminal phenomena without compromising the foundations of relativistic quantum field theory?

The Quantum Realm: Beyond Conventional Particles

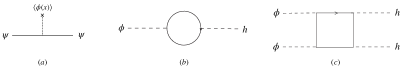

Quantum Field Theory, the framework underpinning much of modern particle physics, doesn’t portray forces as direct interactions, but rather as the exchange of what are termed ‘virtual particles’. These aren’t particles in the conventional sense: they aren’t ‘on-shell’ and cannot be directly detected by any instrument. Instead, they are mathematical constructs, described by a quantity called the propagator, which gives the probability amplitude for a particle to travel between two points in spacetime. The propagator isn’t simply a calculation shortcut; it’s fundamental to understanding how forces like electromagnetism and the strong nuclear force operate at a quantum level. For instance, the electromagnetic force between two electrons isn’t a direct ‘pull’, but arises from the exchange of virtual photons, which mediate the interaction. While fleeting and unobservable, these virtual particles are crucial for calculating measurable quantities and predicting the outcomes of particle collisions, which makes them essential components of the quantum description despite their ephemeral existence.

The forces governing interactions between particles, such as the Yukawa interaction originally proposed to describe the nuclear force between nucleons, are not simply direct actions but are mediated by ephemeral, unobservable entities known as virtual particles. These particles aren’t detectable in the conventional sense – in the popular picture they flit in and out of existence, carrying off-shell energies that the energy-time uncertainty relation tolerates only for brief periods – yet their mathematical necessity within quantum field theory is undeniable. This raises a profound question: are virtual particles merely convenient tools for calculation, or do they possess a degree of physical reality? While lacking the properties of ‘real’ particles – definite mass, lifetime, and trajectory – their influence on measurable phenomena is clear. The purely mathematical nature of these intermediaries challenges physicists to consider the fundamental relationship between mathematical description and physical existence, pushing the boundaries of what it means for something to ‘be’ real within the quantum realm.
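To make the Yukawa example concrete, here is a minimal Python sketch of the textbook static potential that results from exchanging a massive scalar: the Fourier transform of the static propagator 1/(k^2 + m^2) gives a potential proportional to e^{-mr}/r. The function names, the coupling `g`, and the choice of natural units (hbar = c = 1) are illustrative assumptions, not taken from the paper.

```python
import math


def yukawa_potential(r, g=1.0, m=1.0):
    """Attractive static potential from exchange of a scalar of mass m.

    Natural units (hbar = c = 1). g is a hypothetical coupling constant.
    V(r) = -(g^2 / 4 pi) * exp(-m r) / r
    """
    if r <= 0:
        raise ValueError("r must be positive")
    return -(g ** 2) / (4.0 * math.pi) * math.exp(-m * r) / r


def coulomb_like_potential(r, g=1.0):
    """Massless limit (m = 0): the exponential cutoff disappears, leaving 1/r."""
    return yukawa_potential(r, g=g, m=0.0)


if __name__ == "__main__":
    for r in (0.5, 1.0, 2.0, 4.0):
        print(r, yukawa_potential(r), coulomb_like_potential(r))
```

The mass of the exchanged particle sets the range of the force: the ratio of the two potentials at distance r is exactly e^{-mr}, so a heavier mediator means a shorter reach.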

The established understanding of virtual particles typically confines them within the bounds of relativistic causality – the principle that no influence can travel faster than light. However, theoretical investigations relaxing this constraint demonstrate potentially profound implications for physics. By considering scenarios where virtual particles momentarily violate causal order, researchers uncover unexpected connections to areas like wormhole physics and even the nature of dark energy. These explorations aren’t proposing observable faster-than-light travel, but rather suggest that the mathematical tools used to describe virtual interactions may hint at deeper, non-local structures within spacetime. This line of inquiry challenges conventional assumptions about the fundamental limits of physical interactions and opens avenues for reconciling quantum field theory with broader cosmological models, potentially revealing a more complete picture of the universe’s underlying architecture.

Tachyonic Fields: A Departure from Conventional Stability

Tachyonic scalar fields are characterized by a mass-squared parameter (m^2) with a negative value. This is in direct contrast to conventional scalar fields, which possess positive m^2 values, indicating stability. A negative m^2 turns the zero-field configuration into a maximum of the potential energy rather than a minimum, so small perturbations around it grow instead of oscillating. Mathematically, for a purely quadratic potential this manifests as an energy unbounded from below, allowing ‘runaway’ solutions in which long-wavelength modes grow exponentially in time. This behavior violates the requirement that physical systems possess a minimum energy state, and consequently presents a significant challenge to the theoretical consistency of models incorporating tachyonic fields; stabilization mechanisms are therefore crucial for their inclusion in viable physical theories.
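The instability can be illustrated with the free-field dispersion relation omega^2 = k^2 + m^2 (natural units, hbar = c = 1). The sketch below is only a free Klein-Gordon illustration, not the construction analyzed in the paper, and the function names are hypothetical. For m^2 < 0, every mode with k^2 < |m^2| has an imaginary frequency, which corresponds to exponential growth rather than oscillation.

```python
import cmath


def mode_frequency(k, m_squared):
    """Frequency of a free scalar plane-wave mode: omega^2 = k^2 + m^2.

    Returns a complex number; a purely imaginary value signals instability.
    """
    return cmath.sqrt(k * k + m_squared)


def growth_rate(k, m_squared):
    """Exponential growth rate of the mode amplitude (0.0 for stable modes)."""
    return abs(mode_frequency(k, m_squared).imag)


if __name__ == "__main__":
    m_squared = -1.0  # tachyonic: negative mass-squared
    for k in (0.0, 0.5, 0.9, 1.5):
        print(k, mode_frequency(k, m_squared), growth_rate(k, m_squared))
```

In this toy setting only the long-wavelength modes (small k) are unstable; modes with k^2 > |m^2| still oscillate with a real frequency.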

Tachyonic fields present a novel extension to the standard picture of virtual particles by potentially describing particles that consistently travel faster than light. In conventional quantum field theory, virtual particles are off-shell and exist for a limited time governed by the Heisenberg uncertainty principle. However, within a tachyonic field framework, the negative mass-squared parameter m^2 allows for interpretations where particles exist as real, though inherently unstable, entities perpetually exceeding the light barrier. Such tachyons would require infinite energy to be slowed to the speed of light, so they never cross into the subluminal regime; they represent a distinct class of particles with fundamentally different propagation characteristics than their slower-than-light counterparts, necessitating a re-evaluation of typical field excitation interpretations.
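The kinematics behind the ‘light barrier’ statement can be sketched with the classical tachyon energy-momentum relation E^2 = p^2 - mu^2, where m^2 = -mu^2 and natural units (c = 1) are assumed. This is a standard kinematic illustration with hypothetical function names, not a result from the paper. The speed v = p / E is always greater than 1 and approaches 1 only as the energy grows without bound.

```python
import math


def tachyon_energy(p, mu):
    """Energy of a classical tachyon with momentum p and m^2 = -mu^2 (c = 1)."""
    if abs(p) <= mu:
        raise ValueError("real energy requires |p| > mu")
    return math.sqrt(p * p - mu * mu)


def tachyon_speed(p, mu):
    """Speed v = |p| / E in units of c; always greater than 1."""
    return abs(p) / tachyon_energy(p, mu)


if __name__ == "__main__":
    mu = 1.0
    for p in (1.01, 2.0, 10.0, 100.0):
        print(p, tachyon_energy(p, mu), tachyon_speed(p, mu))
```

Lowering the energy makes the tachyon faster, and raising it brings the speed down toward, but never to, the speed of light.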

The study of tachyonic fields necessitates the framework of Covariant Quantum Field Theory (CQFT) to maintain consistency with special relativity. CQFT provides the mathematical tools – specifically, Lorentz-invariant formulations – to analyze fields where the mass-squared parameter is negative (m^2 < 0). A rigorous approach is crucial because standard quantization procedures, designed for positive mass-squared fields, can lead to violations of causality and unitarity when directly applied to tachyons. Ensuring Lorentz covariance – the invariance of physical laws under Lorentz transformations – demands careful treatment of the field’s commutation relations and the construction of a Hamiltonian that avoids instabilities and preserves the fundamental principles of relativistic quantum mechanics. This involves a detailed analysis of the energy-momentum tensor and the propagation of tachyonic excitations within the established CQFT formalism.

The Fakeon Prescription: Reinterpreting the Tachyon

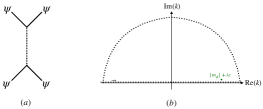

The ‘Fakeon Prescription’ addresses the theoretical challenges posed by purely virtual particles, specifically tachyons, by focusing on the mathematical structure of the Feynman propagator. Traditionally, the propagator describes the amplitude for a particle to travel between spacetime points; the Fakeon Prescription isolates the real part of this propagator, D(x-y) = \int \frac{d^4p}{(2\pi)^4} \frac{e^{-ip \cdot (x-y)}}{p^2 - m^2 + i\epsilon}. By considering only the real component, the method effectively ‘reinterprets’ the tachyon not as a physical particle propagating forward and backward in time, but as a mathematical construct allowing for consistent field theory calculations without requiring a stable vacuum state. This approach bypasses the usual instability problems associated with tachyons by avoiding the need to define a ground state; the theory remains mathematically well-defined even though it does not describe observable, physical particles.
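As a rough illustration of what ‘keeping the real part’ means, the sketch below splits the momentum-space kernel 1/(p^2 - m^2 + i epsilon) into its real and imaginary parts at finite epsilon. It follows only the description given in this article and should not be read as the full fakeon prescription as defined in the literature; the function names are hypothetical. The real part is odd about the pole and vanishes exactly on-shell, while the imaginary part carries the on-shell peak of height 1/epsilon.

```python
def feynman_kernel(p2, m2, eps):
    """Momentum-space Feynman kernel 1 / (p^2 - m^2 + i*eps), with eps > 0."""
    return 1.0 / complex(p2 - m2, eps)


def real_part_kernel(p2, m2, eps):
    """Real part of the kernel: x / (x^2 + eps^2) with x = p^2 - m^2.

    As eps -> 0 this tends to the principal value of 1/x; the discarded
    imaginary part tends to -pi * delta(x), the on-shell contribution.
    """
    x = p2 - m2
    return x / (x * x + eps * eps)


if __name__ == "__main__":
    m2, eps = -1.0, 0.05  # tachyonic mass-squared, small regulator
    for p2 in (-1.5, -1.1, -1.0, -0.9, -0.5):
        k = feynman_kernel(p2, m2, eps)
        print(p2, k.real, k.imag, real_part_kernel(p2, m2, eps))
```

Because the on-shell peak lives entirely in the imaginary part, dropping it removes the contribution that would otherwise correspond to a particle appearing as a real, propagating state.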

Traditional attempts to construct a quantum field theory involving tachyons encounter instability issues stemming from the unboundedness of the Hamiltonian from below, necessitating the search for a stable vacuum state. The ‘Fakeon Prescription’ circumvents this problem by shifting the focus from physical interpretation and vacuum stability to the mathematical structure of the two-component propagator. By concentrating on the real part of the propagator and treating the tachyon as a purely virtual particle, the theory avoids the need to define a ground state and, consequently, bypasses the instability inherent in attempting to minimize the energy of a tachyon field. This doesn’t resolve the underlying issues with tachyon fields, but instead reinterprets their properties to achieve mathematical consistency without requiring physical stability.

The construction of a consistent quantum field theory utilizing the ‘Fakeon Prescription’ for virtual tachyons results in demonstrable Lorentz violation upon interaction with standard model fields. Specifically, the paper proves that maintaining covariance – the invariance of physical laws under Lorentz transformations – is incompatible with a consistent treatment of these virtual particles. This incompatibility arises because the reinterpretation of the tachyon’s propagator, while avoiding vacuum instability, necessitates modifications to the field’s behavior that break Lorentz symmetry when the virtual tachyon interacts with fields governed by standard relativistic quantum field theory. The resulting theory predicts dispersion relations that deviate from those expected in a Lorentz-invariant spacetime, indicating a dependence on the observer’s reference frame.

Lorentz Violation: A Challenge to Spacetime Symmetry

The theoretical exploration of tachyonic fields, which describe hypothetical particles that always travel faster than light, inevitably confronts the established tenets of special relativity. This foundational physics principle, positing the speed of light as a universal constant and an ultimate speed limit, is directly challenged by the very existence of tachyons. Because these particles, if real, would routinely exceed this limit, their study necessitates a careful consideration of Lorentz violation – a breakdown in the symmetry that underpins special relativity. Examining tachyonic fields isn’t simply about adding a faster particle to the standard model; it compels physicists to explore scenarios where spacetime itself might operate under different rules, potentially requiring modifications to the fundamental laws governing causality and the relationship between space and time. This pursuit opens avenues for investigating alternative physical frameworks and searching for subtle experimental signatures that could reveal the presence of Lorentz-violating effects.

Carrollian physics presents a radical reimagining of spacetime, obtained by taking the limit in which the speed of light, typically considered a universal constant, goes to zero. This isn’t simply a reduction in speed, but a fundamentally different structure in which the roles of time and space are reversed compared to the conventional Lorentzian spacetime of special relativity. Exploring this framework allows physicists to investigate scenarios where Lorentz invariance – the principle that the laws of physics are the same for all observers in uniform motion – is broken, potentially offering insights into the behavior of tachyonic fields and other phenomena challenging established physics. By examining the consequences of c → 0, researchers can develop alternative models for particle interactions and probe the limits of our current understanding of causality and the nature of spacetime itself, opening doors to novel theoretical avenues for resolving inconsistencies arising from faster-than-light particles.
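For readers who want to see how the zero-speed-of-light limit acts on coordinates, the following is the standard textbook contraction of a Lorentz boost, written in one spatial dimension. The boost parameter b (with dimensions of inverse velocity) is introduced here only for illustration and does not appear in the source.

```latex
\begin{aligned}
t' &= \gamma\left(t - \frac{v x}{c^2}\right), \qquad
x' = \gamma\,(x - v t), \qquad
\gamma = \frac{1}{\sqrt{1 - v^2/c^2}} \\
v &= c^2 b \ \ (\text{$b$ held fixed}), \quad c \to 0: \qquad
\gamma \to 1, \qquad t' \to t - b\,x, \qquad x' \to x
\end{aligned}
```

In this limit a boost shifts time by an amount proportional to position while leaving position untouched, which is the mirror image of the Galilean case (x' = x - v t, t' = t) and is the sense in which the roles of space and time are exchanged.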

Rigorous analysis of tachyon interactions has yielded quantifiable limits on their strength, effectively constraining theoretical models that incorporate these hypothetical particles. Calculations demonstrate that the interaction potential induced by tachyon exchange is limited to a value less than 2 \times 10^{-13}, a remarkably small figure derived from observational constraints. Furthermore, the research establishes an upper bound of 10^7 for the parameter α₀, which governs the amplitude of long-range oscillating potentials generated by these interactions. These bounds, obtained through careful consideration of potential energy fluctuations, provide crucial benchmarks for future investigations into Lorentz violation and the fundamental nature of spacetime, effectively narrowing the parameter space for viable tachyon-based physics.
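The article does not give the functional form of these oscillating potentials. As a hedged illustration of why tachyon exchange would produce an oscillating, long-range profile rather than a screened one, compare the static Fourier transforms for positive and negative mass-squared, assuming a principal-value (real-part) treatment of the pole and writing m^2 = -\mu^2. No identification of the overall amplitude with the paper's α₀ is intended.

```latex
\begin{aligned}
\int \frac{d^3k}{(2\pi)^3}\, \frac{e^{i\mathbf{k}\cdot\mathbf{r}}}{k^2 + m^2}
  &= \frac{e^{-m r}}{4\pi r} \qquad (m^2 > 0), \\
\mathrm{P}\!\!\int \frac{d^3k}{(2\pi)^3}\, \frac{e^{i\mathbf{k}\cdot\mathbf{r}}}{k^2 - \mu^2}
  &= \frac{\cos(\mu r)}{4\pi r} \qquad (m^2 = -\mu^2 < 0).
\end{aligned}
```

The exponential cutoff of the ordinary Yukawa case is replaced by an oscillation whose envelope falls off only as 1/r, so even a very weak coupling would in principle be visible at large distances. That is why long-range observations can yield tight bounds.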

Beyond the Standard Model: Charting New Theoretical Territories

The Standard Model of particle physics, while remarkably successful, leaves several fundamental questions unanswered, prompting physicists to explore theoretical frameworks that extend beyond its current limitations. Investigations into tachyonic fields – hypothetical particles that always travel faster than light – and potential violations of Lorentz invariance, a cornerstone of special relativity, suggest the possibility of physics operating outside the established rules. These concepts aren’t simply mathematical curiosities; they represent potential cracks in the foundation of our understanding of the universe. A universe permitting tachyons or Lorentz violation would necessitate a revision of established spacetime concepts, potentially offering explanations for phenomena like dark energy and dark matter, and hinting at a deeper, more complex reality than currently described. While experimental evidence remains elusive, the continued exploration of these unconventional ideas represents a crucial path toward a more complete and accurate model of the cosmos.

The cornerstone of modern physics, the Equivalence Principle – which posits that gravitational and inertial mass are one and the same – faces compelling challenges from theoretical explorations of tachyonic fields and potential violations of Lorentz invariance. This principle elegantly explains why all objects fall at the same rate in a gravitational field, but emerging models suggest a subtle distinction between how objects resist acceleration (inertia) and how they respond to gravity. Investigations into these discrepancies aren’t merely academic; they imply that gravity might not be solely a curvature of spacetime as described by Einstein, but could involve additional forces or interactions. Consequently, researchers are revisiting fundamental questions about the nature of mass, energy, and the very fabric of spacetime, seeking to reconcile these new theoretical possibilities with established observations and potentially unveiling a more complete understanding of gravitational phenomena.

Investigations into theoretical concepts like tachyonic fields and violations of Lorentz symmetry are not merely abstract exercises, but potentially pivotal avenues for addressing some of cosmology’s most persistent mysteries. The Standard Model, while remarkably successful, leaves substantial questions unanswered regarding the accelerating expansion of the universe – attributed to the enigmatic dark energy – and the unseen mass influencing galactic rotation, known as dark matter. By challenging established principles about the relationship between gravity and inertia, these explorations offer a pathway toward a more complete understanding of spacetime itself. A deeper probe into these unconventional areas may reveal that dark energy and dark matter aren’t simply undiscovered particles, but rather manifestations of a fundamentally different structure of spacetime, potentially altering ΛCDM models and revolutionizing cosmological theory.

The pursuit of theoretical consistency, as demonstrated by the impossibility of a viable covariant virtual tachyon, reveals the interconnectedness of fundamental principles. This work highlights how even seemingly minor adjustments – in this case, the allowance of virtual tachyons – can unravel the established structure of quantum field theory, demanding a re-evaluation of Lorentz invariance. As Paul Feyerabend observed, “Anything goes.” This isn’t a call for intellectual anarchy, but rather an acknowledgement that rigid adherence to pre-established rules can stifle progress; the exploration of unconventional concepts, even those leading to contradictions, is essential to understanding the limits of current frameworks. The breakdown of consistency in this study underscores that good architecture is invisible until it breaks, and only then is the true cost of decisions visible.

Where Do We Go From Here?

The demonstrated incompatibility of consistent quantum field theory with even virtual tachyons reveals a deeper structural issue than simply forbidding superluminal particles. It suggests that the frameworks attempting to accommodate such objects may be built upon assumptions requiring re-evaluation. The insistence on maintaining relativistic covariance, while seemingly a bedrock principle, may necessitate a more nuanced understanding of its limits, and the consequences of even subtle violations. One cannot simply swap a component without considering the whole machine.

Future investigations should not focus on increasingly elaborate attempts to ‘fix’ tachyon models, but rather on rigorously mapping the parameter space where Lorentz invariance begins to unravel. Calculating the cascading effects of even minuscule violations – how they propagate through Green’s functions and manifest in observable phenomena – will be critical. The search isn’t for a viable tachyon, but for the precise boundaries of a seemingly inviolable principle.

Ultimately, this work serves as a reminder that elegance in theoretical physics often arises from simplicity. Complexity, when introduced without a clear structural justification, tends to generate more problems than it solves. The field now faces the task of dismantling the intricate scaffolding built around these hypothetical particles and rebuilding, with a renewed emphasis on foundational principles and a healthy skepticism toward accommodating the impossible.

Original article: https://arxiv.org/pdf/2602.20474.pdf

Contact the author: https://www.linkedin.com/in/avetisyan/

2026-02-25 20:32