Fixing Build Failures Automatically: A New Approach for Embedded Software

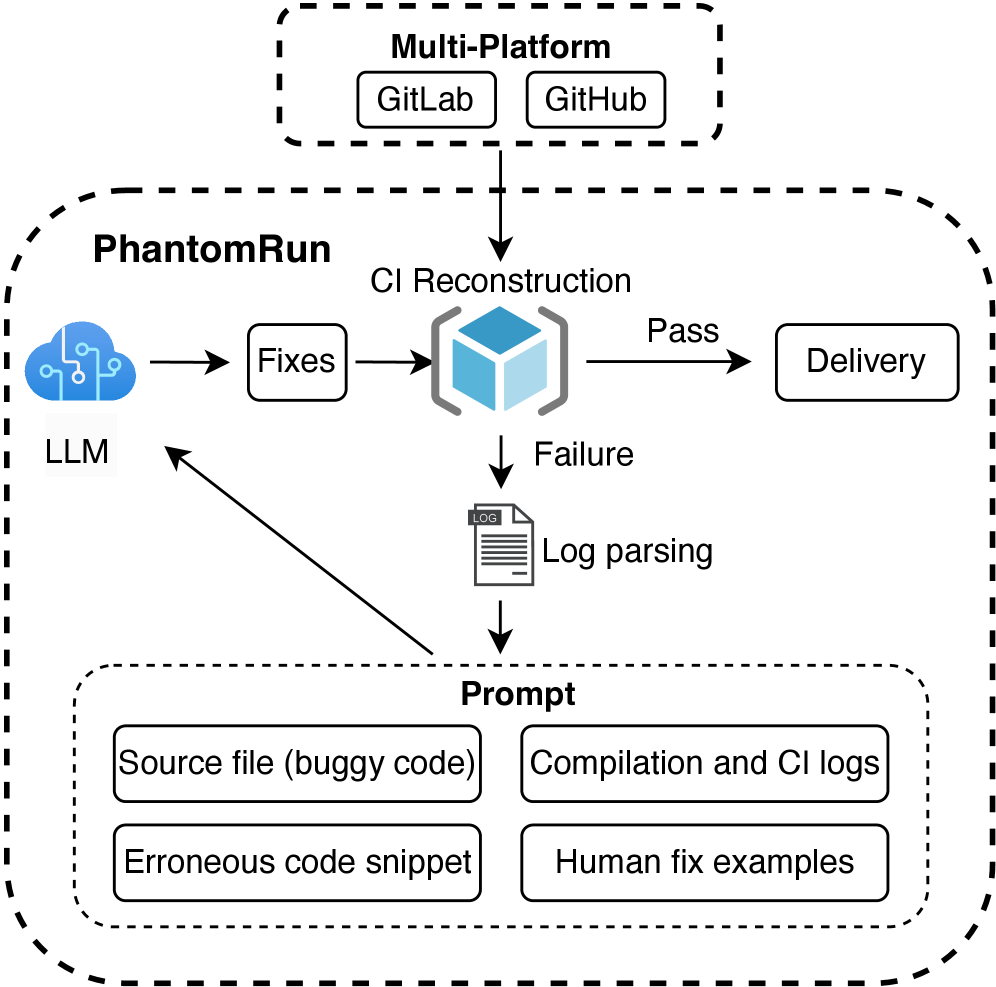

Researchers have developed an artificial intelligence system that automatically repairs compilation errors in embedded software, where build failures are common and costly to diagnose.