Author: Denis Avetisyan

New research demonstrates the potential of quantum amplitude estimation to significantly improve the accuracy and efficiency of tail-risk pricing for catastrophe insurance.

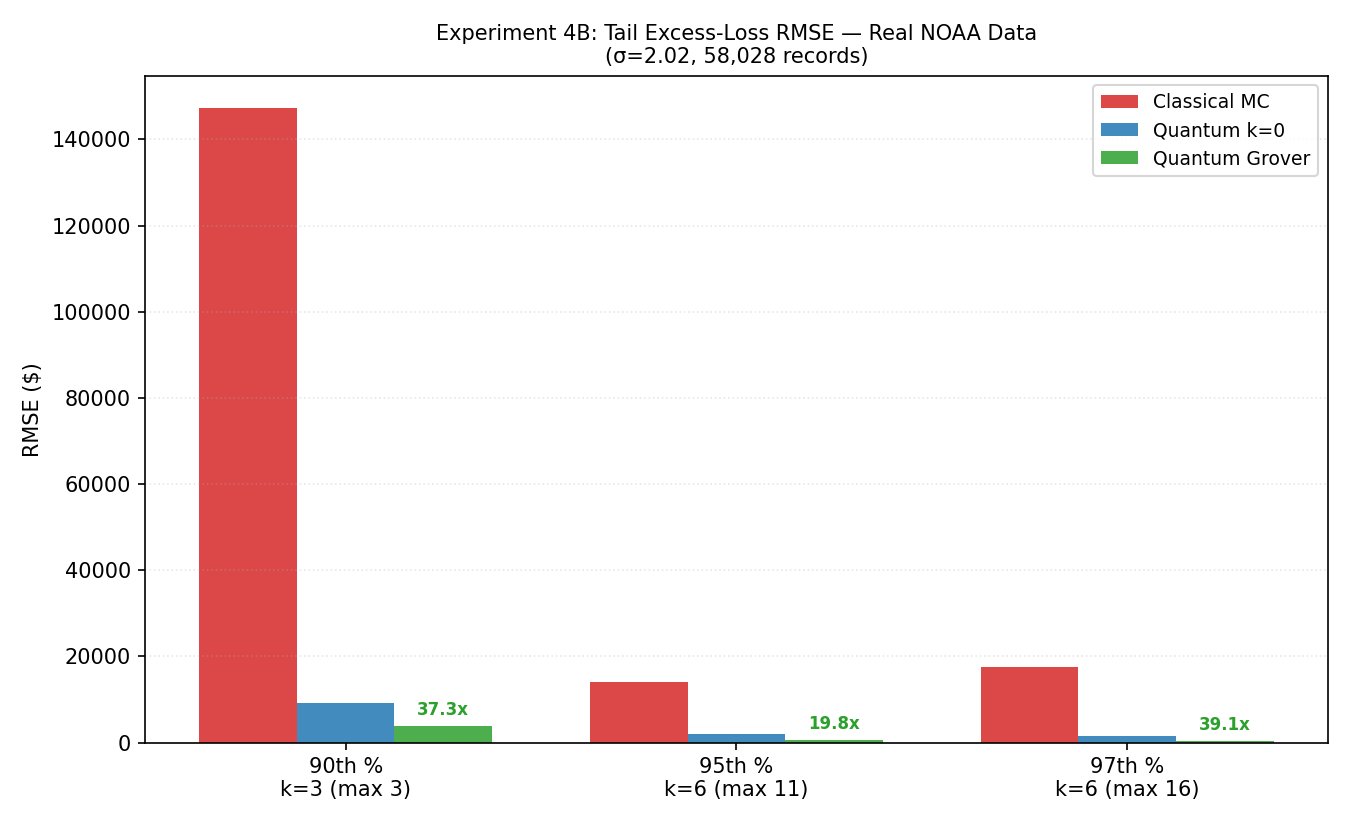

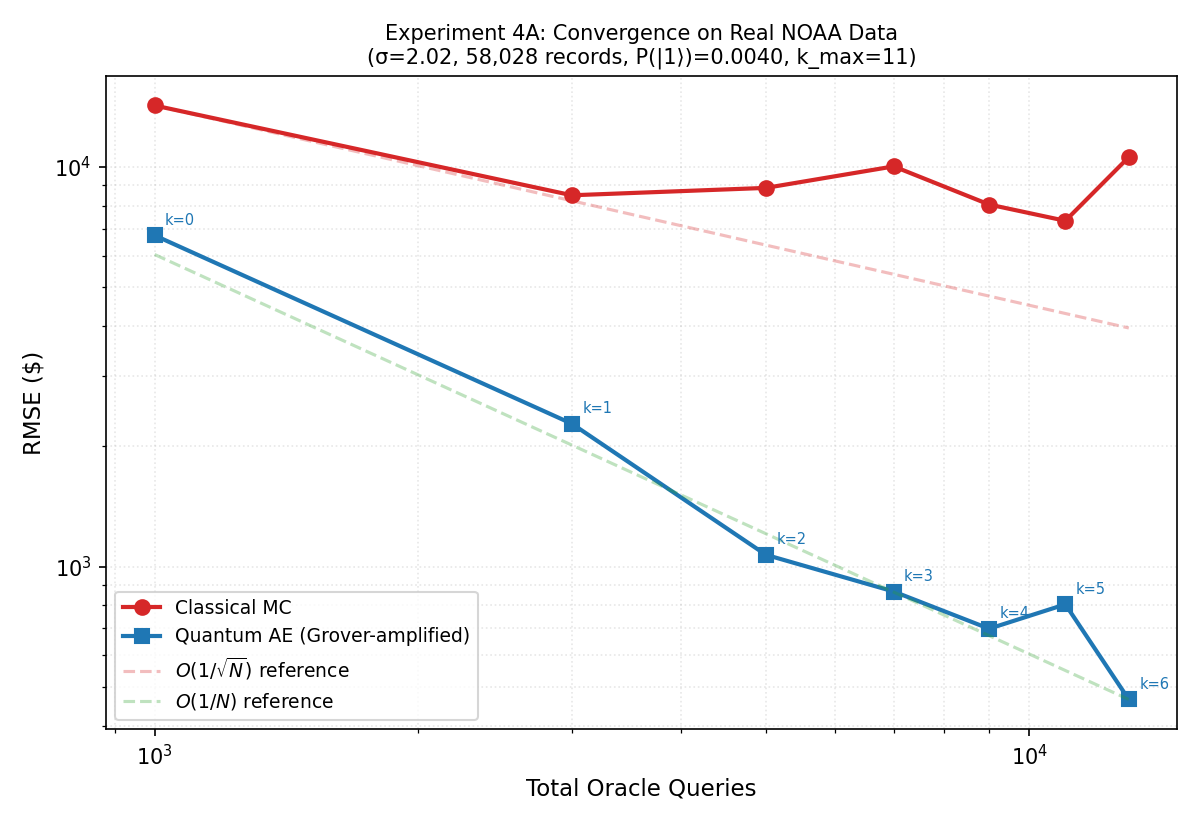

Quantum amplitude estimation offers a 2-3.7x reduction in estimation error compared to Monte Carlo simulation for catastrophe insurance, though realizing this benefit requires further advancements in quantum hardware and discretization techniques.

Accurate pricing of extreme risks in catastrophe insurance is hampered by the computational cost of resolving rare tail events with classical Monte Carlo methods. This limitation motivates the investigation presented in ‘Quantum Amplitude Estimation for Catastrophe Insurance Tail-Risk Pricing: Empirical Convergence and NISQ Noise Analysis’, which explores the potential of quantum computation to accelerate tail risk estimation. Empirical results demonstrate a 2-3.7x reduction in estimation error using quantum amplitude estimation, albeit with current limitations stemming from discretization errors and noisy intermediate-scale quantum (NISQ) hardware. Will advances in fault-tolerant quantum computing and efficient discretization schemes unlock the full potential of quantum algorithms for improved catastrophe risk management?

The Illusion of Prediction in Catastrophe Modeling

The very foundation of catastrophe insurance rests upon the precise valuation of events that, by their nature, defy easy prediction. Insurers must quantify the potential impact of occurrences, such as a magnitude 9.0 earthquake or a Category 5 hurricane, that have limited historical precedent, making statistical analysis particularly challenging. These ‘extreme’ events, while infrequent, carry the potential for catastrophic financial losses, necessitating models capable of extending beyond observed data. Consequently, estimating the probability and severity of these low-probability, high-impact risks demands sophisticated techniques that often push the boundaries of actuarial science and computational power, because reliance on past occurrences alone is insufficient to safeguard against truly unprecedented disasters.

Conventional catastrophe risk modeling often falters when assessing ‘tail risk’ – the probability of exceptionally severe events like major hurricanes or earthquakes. These models frequently rely on historical data and statistical assumptions that struggle to accurately capture the infrequency and potential magnitude of these extreme occurrences. Consequently, insurers may underestimate the true extent of their potential liabilities, particularly concerning events that exceed the range of observed history. This underestimation arises because standard statistical techniques tend to smooth out extreme values, failing to adequately represent the long return periods associated with catastrophic losses. The resulting financial exposure can be substantial, potentially leading to solvency issues for insurers following a particularly impactful event and highlighting the limitations of relying solely on past data to predict future extreme risk.

Accurately assessing catastrophe risk necessitates increasingly sophisticated computational modeling, yet this very complexity presents a significant bottleneck in risk assessment. Simulating events like hurricanes or earthquakes demands consideration of numerous interacting variables – atmospheric conditions, geological fault lines, building vulnerabilities, population density – each requiring intensive processing power. Current models often rely on Monte Carlo simulations, generating thousands of possible scenarios to estimate probabilities, a process that is both time-consuming and resource-intensive. Furthermore, incorporating high-resolution data – detailed topography, granular building characteristics – amplifies these computational demands. The need for faster, more efficient algorithms and access to high-performance computing infrastructure is therefore paramount, as delays in risk assessment can translate to inadequate preparedness and substantial financial exposure for insurers and policymakers alike.

The Persistence of Classical Approaches

Monte Carlo simulation is the predominant method for modeling catastrophe risks within the insurance industry. This approach generates numerous random scenarios (typically tens of thousands or more) representing potential hazard events and their associated losses. Each simulation estimates a possible outcome based on defined probability distributions for relevant variables such as hazard intensity, vulnerability, and asset value. By aggregating the results across all simulations, insurers can derive probabilistic estimates of potential losses, including exceedance probabilities and value at risk (VaR). The flexibility of Monte Carlo stems from its ability to accommodate complex models, non-linear relationships, and a wide range of risk factors, making it suitable for diverse perils including hurricanes, earthquakes, and floods. This makes it a versatile tool for capital allocation, reinsurance purchasing, and overall risk management decisions.
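To make the workflow concrete, here is a minimal sketch of a Monte Carlo loss simulation. It is not the paper's model: the Poisson event frequency, the lognormal severity, and every parameter value (`rate`, `mu`, `sigma`, the threshold of 5) are assumptions chosen only for illustration.

```python
import math
import random

def poisson(rng, lam):
    """Knuth's method for a Poisson draw; adequate for small lam."""
    limit, k, prod = math.exp(-lam), 0, rng.random()
    while prod > limit:
        k += 1
        prod *= rng.random()
    return k

def simulate_annual_losses(n_years, rate=0.5, mu=0.0, sigma=1.0, seed=0):
    """One aggregate loss per simulated year: Poisson count, lognormal severities."""
    rng = random.Random(seed)
    return [
        sum(rng.lognormvariate(mu, sigma) for _ in range(poisson(rng, rate)))
        for _ in range(n_years)
    ]

def exceedance_probability(losses, threshold):
    """Fraction of simulated years whose loss exceeds the threshold."""
    return sum(loss > threshold for loss in losses) / len(losses)

def value_at_risk(losses, level=0.99):
    """Empirical quantile of the simulated loss distribution."""
    ordered = sorted(losses)
    index = min(len(ordered) - 1, math.ceil(level * len(ordered)) - 1)
    return ordered[index]

losses = simulate_annual_losses(50_000)
var_99 = value_at_risk(losses, 0.99)
print(f"P(annual loss > 5) ~ {exceedance_probability(losses, 5.0):.4f}")
print(f"99% VaR            ~ {var_99:.2f}")
```

Both risk measures are read directly off the simulated sample, which is why their accuracy in the tail depends so heavily on how many scenarios are generated.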

Accuracy in Monte Carlo catastrophe modeling depends directly on the number of simulations performed. Because the standard error of the estimate shrinks only with the square root of the sample size, a large sample, often in the millions or billions, is needed to reliably estimate tail probabilities and expected losses, particularly for rare events. Each simulation requires evaluating the model across all insured risks, incorporating numerous parameters and dependencies, resulting in substantial computational demands. This translates to significant processing time and infrastructure costs, often requiring high-performance computing clusters and specialized software to manage the workload and deliver results within acceptable timeframes. The computational burden grows with model complexity and quadratically with the desired accuracy: halving the error requires roughly four times as many simulations.
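The sample-size problem can be quantified with the textbook standard error of a probability estimate, sqrt(p(1-p)/N). The sketch below uses only that formula; the probabilities and the 5% relative-error target are illustrative assumptions, not figures from the paper.

```python
import math

def mc_standard_error(p, n_samples):
    """Standard error of the sample-mean estimator of a tail probability p."""
    return math.sqrt(p * (1.0 - p) / n_samples)

def samples_for_relative_error(p, rel_error):
    """Samples needed so that the standard error is at most rel_error * p."""
    return math.ceil((1.0 - p) / (p * rel_error ** 2))

for p in (1e-2, 1e-3, 1e-4):
    n = samples_for_relative_error(p, 0.05)
    print(f"p = {p:.0e}: about {n:,} samples for a 5% relative standard error")
```

The required sample count grows as the event becomes rarer and as the square of the precision demanded, which is the cost that variance reduction and quantum methods both try to cut.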

Variance reduction techniques aim to decrease the computational effort required to achieve a specified level of accuracy in Monte Carlo simulations. Importance Sampling alters the sampling distribution to focus on areas of high impact, while Conditional Tail Sampling specifically targets the tails of the loss distribution, where extreme events reside. However, the effectiveness of these methods is heavily dependent on the specific characteristics of the modeled risk; a technique well-suited for one catastrophe model may perform poorly on another. Successful implementation necessitates careful tuning of parameters, often requiring significant expertise and iterative refinement to optimize performance and avoid introducing bias into the results.
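As an illustration of importance sampling, the sketch below estimates the probability that a lognormal loss exceeds a high threshold. Working in log space, this is P(Z > c) for a standard normal Z. The proposal distribution (a normal shifted to the threshold) and the threshold itself are assumptions for demonstration; the article does not say how the paper configures its classical baselines.

```python
import math
import random

def normal_tail(x):
    """Exact P(Z > x) for a standard normal Z, used as the reference value."""
    return 0.5 * math.erfc(x / math.sqrt(2.0))

def plain_mc(log_threshold, n, rng):
    """Plain Monte Carlo: count how often a standard normal exceeds the threshold."""
    return sum(rng.gauss(0.0, 1.0) > log_threshold for _ in range(n)) / n

def importance_sampling(log_threshold, n, rng):
    """Sample from N(shift, 1) and reweight by the likelihood ratio phi(z)/phi(z - shift)."""
    shift = log_threshold
    total = 0.0
    for _ in range(n):
        z = rng.gauss(shift, 1.0)
        if z > log_threshold:
            total += math.exp(-shift * z + 0.5 * shift * shift)
    return total / n

rng = random.Random(1)
log_t = 4.0  # loss threshold exp(4) for a lognormal(0, 1) loss (assumed)
exact = normal_tail(log_t)
mc_est = plain_mc(log_t, 20_000, rng)
is_est = importance_sampling(log_t, 20_000, rng)
print(f"exact {exact:.3e} | plain MC {mc_est:.3e} | importance sampling {is_est:.3e}")
```

With the same budget, plain Monte Carlo sees the event at most a handful of times, while the shifted proposal lands in the tail about half the time. The catch, as noted above, is that a poorly chosen shift can make the weights erratic and the estimate worse.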

A Glimmer of Acceleration: Quantum Amplitude Estimation

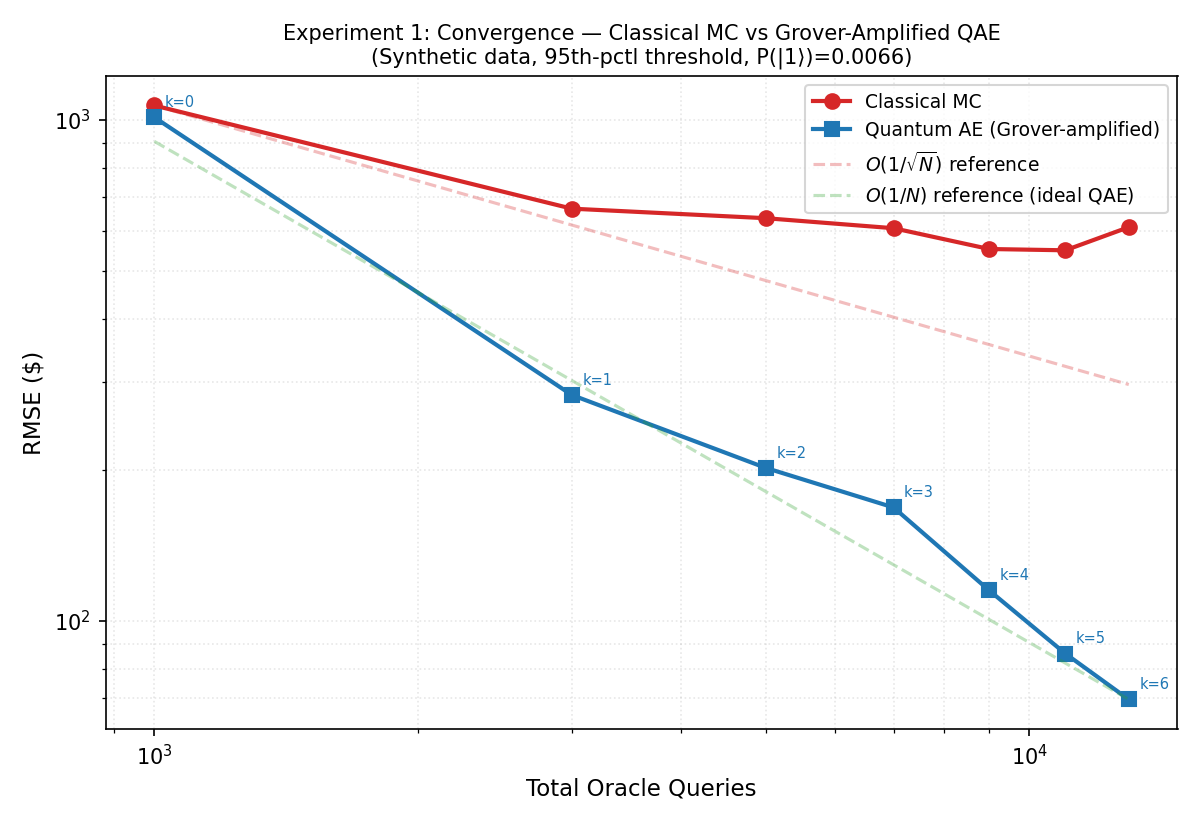

Quantum Amplitude Estimation (QAE) is a quantum algorithm for estimating the expected value of a bounded function, and it offers a theoretical speedup over classical Monte Carlo methods. Classical Monte Carlo estimation requires a number of samples proportional to 1/ε² to achieve an error of ε. In contrast, QAE can achieve the same error with a number of quantum queries scaling as 1/ε, which is a quadratic speedup. This reduction in required samples translates to a potentially significant computational advantage for problems requiring high-precision estimates, particularly in areas like risk analysis and optimization where classical simulations are computationally expensive.
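To put rough numbers on the two scalings, the sketch below compares the Monte Carlo sample count for a given standard error with the query count implied by the canonical QAE error bound, 2π·sqrt(a(1-a))/M + π²/M². The two accuracy notions differ (a standard error versus a bound that holds with probability at least 8/π²), so only the growth rates should be compared. The value a = 0.05 is an assumed tail probability.

```python
import math

def mc_samples(a, eps):
    """Samples for a Monte Carlo standard error of eps: a(1-a)/eps^2."""
    return math.ceil(a * (1.0 - a) / eps ** 2)

def qae_queries(a, eps):
    """Smallest M with 2*pi*sqrt(a(1-a))/M + pi^2/M^2 <= eps (canonical QAE bound)."""
    s = 2.0 * math.pi * math.sqrt(a * (1.0 - a))
    # Positive root of eps*M^2 - s*M - pi^2 = 0.
    return math.ceil((s + math.sqrt(s * s + 4.0 * eps * math.pi ** 2)) / (2.0 * eps))

a = 0.05  # assumed tail probability
for eps in (1e-2, 1e-3, 1e-4):
    print(f"eps = {eps:.0e}: MC samples {mc_samples(a, eps):>9,} | QAE queries {qae_queries(a, eps):>7,}")
```

Each tenfold tightening of the error multiplies the classical sample count by about 100 but the quantum query count by only about 10.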

The study quantified the performance of Quantum Amplitude Estimation (QAE) in catastrophe insurance tail-risk pricing through a comparison of Root Mean Squared Error (RMSE) with classical Monte Carlo simulations. Results indicate a reduction in estimation RMSE ranging from 2.2 to 3.7 times when utilizing QAE. This improvement demonstrates the potential for more precise risk assessment in scenarios requiring the evaluation of extreme events, as commonly found in insurance modeling. The observed RMSE reduction was achieved using specific parameter settings and problem formulations relevant to catastrophe risk.
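An RMSE comparison of this kind can be mimicked classically on a toy problem. The article does not say which QAE variant the paper uses, so the sketch below swaps in maximum-likelihood amplitude estimation, simulated without noise: a circuit with k Grover iterations returns a "hit" with probability sin²((2k+1)θ), where the target probability is a = sin²θ. The schedule of iterations, the shot count, and a = 0.05 are all assumptions, and the resulting ratio is not a reproduction of the paper's figures.

```python
import math
import random

def binomial(rng, n, p):
    """Number of successes in n Bernoulli(p) trials."""
    return sum(rng.random() < p for _ in range(n))

def mlqae_estimate(a_true, powers, shots, rng, grid=2000):
    """Noise-free maximum-likelihood amplitude estimation on simulated counts."""
    theta_true = math.asin(math.sqrt(a_true))
    counts = [binomial(rng, shots, math.sin((2 * k + 1) * theta_true) ** 2)
              for k in powers]
    best_theta, best_ll = 0.0, -math.inf
    for i in range(1, grid):  # grid search over theta in (0, pi/2)
        theta = 0.5 * math.pi * i / grid
        ll = 0.0
        for k, hits in zip(powers, counts):
            p = min(max(math.sin((2 * k + 1) * theta) ** 2, 1e-12), 1 - 1e-12)
            ll += hits * math.log(p) + (shots - hits) * math.log(1.0 - p)
        if ll > best_ll:
            best_theta, best_ll = theta, ll
    return math.sin(best_theta) ** 2

def rmse(estimates, truth):
    return math.sqrt(sum((e - truth) ** 2 for e in estimates) / len(estimates))

A_TRUE = 0.05             # hypothetical tail probability
POWERS = (0, 1, 2, 4, 8)  # Grover iterations per circuit (assumed schedule)
SHOTS, TRIALS = 100, 100
QUERIES = SHOTS * sum(2 * k + 1 for k in POWERS)  # oracle calls per QAE run

rng = random.Random(7)
qae = [mlqae_estimate(A_TRUE, POWERS, SHOTS, rng) for _ in range(TRIALS)]
mc = [binomial(rng, QUERIES, A_TRUE) / QUERIES for _ in range(TRIALS)]
rmse_qae, rmse_mc = rmse(qae, A_TRUE), rmse(mc, A_TRUE)
print(f"queries per run: {QUERIES}")
print(f"RMSE  QAE: {rmse_qae:.5f}   Monte Carlo: {rmse_mc:.5f}")
```

Both estimators receive the same number of oracle calls per run, so the RMSE gap reflects the algorithm rather than the budget. Hardware noise, which this toy ignores, would narrow it.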

Quantum Amplitude Estimation (QAE) builds on amplitude amplification, the generalization of Grover’s search algorithm, to achieve accelerated convergence in estimating the probability of a specific outcome. A state-preparation circuit first encodes the probability distribution as a superposition of all possible states, and a specifically designed ‘oracle’ marks the state(s) corresponding to the desired outcome. Each Grover iteration then rotates the quantum state toward the marked states by a fixed angle determined by the target probability, so measurements taken after different numbers of iterations reveal that angle and hence the probability. The estimation error shrinks in proportion to 1/M for M oracle queries, whereas the error of classical Monte Carlo shrinks only as 1/√N for N samples; this is the source of the quadratic speedup. This enables more efficient risk assessment and expectation value estimation with reduced computational resources.
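The effect of amplification can be followed in a two-dimensional picture: the quantum state is a unit vector with a "bad" and a "good" component, and each Grover iteration rotates it by 2θ, where sin²θ is the probability being estimated. The sketch below simulates only that rotation, not a full circuit; the starting probability of 1% is an assumption.

```python
import math

def grover_iterate(state, theta):
    """Rotate the (bad, good) amplitude pair by an angle of 2*theta."""
    bad, good = state
    c, s = math.cos(2.0 * theta), math.sin(2.0 * theta)
    return (c * bad - s * good, s * bad + c * good)

def amplified_probability(a, k):
    """Probability of measuring a 'good' state after k Grover iterations."""
    theta = math.asin(math.sqrt(a))
    state = (math.cos(theta), math.sin(theta))
    for _ in range(k):
        state = grover_iterate(state, theta)
    return state[1] ** 2

a = 0.01  # assumed probability of the tail event
for k in (0, 1, 3, 7):
    print(f"k = {k}: P(good) = {amplified_probability(a, k):.4f}")
```

The measured probability follows sin²((2k+1)θ), so how quickly it rises with k carries the information about θ that amplitude estimation extracts.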

Successful implementation of Quantum Amplitude Estimation (QAE) is heavily dependent on two primary factors: the design of the underlying ‘oracle’ and the efficiency of initial state preparation. The oracle, a quantum subroutine, must accurately reflect the function being estimated; its complexity directly impacts the overall QAE circuit depth and resource requirements. Equally critical is the preparation of the initial quantum state, which must load the loss distribution into qubit amplitudes; because this circuit is repeated inside every Grover iteration, any inefficiency in it is multiplied across the whole computation. Inefficient oracle construction or suboptimal initial state preparation can negate the theoretical speedup offered by QAE, leading to performance comparable to, or worse than, classical Monte Carlo methods.

The Illusion of Continuous Data

Quantum algorithms, by their nature, process information encoded in discrete quantum states, or qubits. This presents a challenge when applying them to problems originating in the continuous domain, such as catastrophe modeling. These models frequently utilize continuous probability distributions, like the lognormal distribution, to represent the likelihood of various event magnitudes. To interface with quantum hardware, these continuous distributions must be approximated through a process called discretization, where the probability space is divided into a finite number of discrete bins. Each bin represents a range of values, and the probability associated with that range is assigned to the bin. This approximation inherently introduces error, as the continuous function is replaced by a piecewise constant function, and the accuracy of the quantum computation is directly linked to the fidelity of this discretization process.
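A minimal sketch of this discretization step: a lognormal distribution is cut into 2ⁿ equal-width bins, each bin receives the probability mass of its interval, and the square roots of those masses are the amplitudes a state-preparation circuit would need to load. The range [0, 10], the three qubits, and the lognormal parameters are assumptions for illustration.

```python
import math

def lognormal_cdf(x, mu=0.0, sigma=1.0):
    """Cumulative distribution function of a lognormal(mu, sigma)."""
    if x <= 0.0:
        return 0.0
    return 0.5 * (1.0 + math.erf((math.log(x) - mu) / (sigma * math.sqrt(2.0))))

def discretize(n_qubits, lo, hi, mu=0.0, sigma=1.0):
    """Split [lo, hi] into 2**n_qubits equal-width bins; return (edges, probabilities)."""
    n_bins = 2 ** n_qubits
    edges = [lo + (hi - lo) * i / n_bins for i in range(n_bins + 1)]
    masses = [lognormal_cdf(b, mu, sigma) - lognormal_cdf(a, mu, sigma)
              for a, b in zip(edges, edges[1:])]
    total = sum(masses)  # renormalize: mass outside [lo, hi] is truncated
    return edges, [m / total for m in masses]

edges, probs = discretize(n_qubits=3, lo=0.0, hi=10.0)
amplitudes = [math.sqrt(p) for p in probs]  # amplitudes of the basis states

for i, (p, amp) in enumerate(zip(probs, amplitudes)):
    print(f"|{i:03b}>  bin [{edges[i]:5.2f}, {edges[i + 1]:5.2f})  p = {p:.4f}  amplitude = {amp:.4f}")
```

With only eight bins, most of the probability mass falls into the first one or two, which hints at why the choice of bin spacing matters so much for skewed loss distributions.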

Discretization, the process of converting continuous probability distributions into a finite set of discrete states required for quantum algorithm execution, inherently introduces approximation errors. In Quantum Amplitude Estimation (QAE) applications, such as catastrophe modeling employing distributions like the lognormal, these errors directly impact the accuracy of risk estimates. Minimizing discretization error is therefore paramount; even small inaccuracies can propagate through the QAE calculation, leading to significantly skewed results and potentially incorrect financial or risk-based decisions. Careful consideration must be given to the discretization method and the number of discrete states utilized to ensure that the resulting error remains within acceptable bounds for the specific application.

The research demonstrated a ten-fold reduction in discretization error when employing log-spaced binning compared to uniform binning. This improvement was particularly pronounced for quantum systems utilizing four or more qubits (n ≥ 4). The reduction in error directly impacts the accuracy of risk estimates derived from quantum amplitude estimation (QAE), as discretization is a necessary step when representing continuous probability distributions for quantum hardware. This suggests that log-spaced binning represents these distributions with considerably higher fidelity for the same number of qubits.

Log-spaced binning is a discretization technique that improves upon uniform binning when approximating continuous probability distributions for quantum applications. Unlike uniform binning, which uses equally sized intervals, log-spaced binning uses intervals whose widths grow geometrically. For right-skewed distributions such as the lognormal, this concentrates resolution at small values, where probability density is highest, while still spanning the long tail, thereby reducing discretization error. This is the mechanism behind the ten-fold reduction reported for four or more qubits (n ≥ 4), and it yields more accurate risk estimates in catastrophe modeling and other quantum applications that depend on precise probability representation.
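The difference between the two binning schemes can be checked on a small example. The sketch below discretizes a lognormal(0, 1) on the range [e⁻⁴, e⁴] with 16 bins (four qubits) and compares the mean of each discretized distribution with the exact truncated mean. The error metric, the range, and the choice of bin representatives (arithmetic midpoint for uniform bins, geometric midpoint for log bins) are assumptions; this is not the paper's benchmark and is not meant to reproduce its ten-fold figure.

```python
import math

def normal_cdf(z):
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))

def binned_mean(edges, representatives, mu, sigma):
    """Mean of the discretized lognormal: bin masses times representative values."""
    masses = [normal_cdf((math.log(b) - mu) / sigma) - normal_cdf((math.log(a) - mu) / sigma)
              for a, b in zip(edges, edges[1:])]
    return sum(m * r for m, r in zip(masses, representatives)) / sum(masses)

def uniform_bins(lo, hi, n_bins):
    edges = [lo + (hi - lo) * i / n_bins for i in range(n_bins + 1)]
    return edges, [0.5 * (a + b) for a, b in zip(edges, edges[1:])]

def log_bins(lo, hi, n_bins):
    edges = [lo * (hi / lo) ** (i / n_bins) for i in range(n_bins + 1)]
    return edges, [math.sqrt(a * b) for a, b in zip(edges, edges[1:])]

def truncated_mean(lo, hi, mu, sigma):
    """Exact mean of a lognormal(mu, sigma) truncated to [lo, hi]."""
    za, zb = (math.log(lo) - mu) / sigma, (math.log(hi) - mu) / sigma
    num = math.exp(mu + 0.5 * sigma ** 2) * (normal_cdf(zb - sigma) - normal_cdf(za - sigma))
    return num / (normal_cdf(zb) - normal_cdf(za))

MU, SIGMA, N_BINS = 0.0, 1.0, 2 ** 4
LO, HI = math.exp(-4.0), math.exp(4.0)

exact = truncated_mean(LO, HI, MU, SIGMA)
err_uniform = abs(binned_mean(*uniform_bins(LO, HI, N_BINS), MU, SIGMA) - exact)
err_log = abs(binned_mean(*log_bins(LO, HI, N_BINS), MU, SIGMA) - exact)
print(f"exact mean {exact:.4f} | uniform error {err_uniform:.4f} | log-spaced error {err_log:.4f}")
```

Uniform bins lump most of the probability mass into a single wide first bin, so its midpoint misrepresents where the mass actually sits. Log-spaced bins split that region finely.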

A Foundation for Future Resilience

Quantum computation relies on the principles of quantum mechanics, demanding a fundamentally different approach to calculation than classical computing. Central to this is reversible arithmetic, in which computations cannot lose information; every operation must be invertible. This requirement stems from the fact that quantum gates are unitary transformations, which are invertible by construction; only measurement is irreversible, and it collapses the superposition that the algorithm depends on. Unlike classical bits, qubits can exist in a superposition of states, and this delicate state must be preserved throughout a calculation. Reversible logic gates are therefore designed to avoid information loss, ensuring that the initial quantum state can, in theory, be fully reconstructed from the final state. This preservation of quantum information is not merely a technical requirement but a core tenet of quantum computation, and it is a precondition for the quadratic speedup that amplitude estimation offers over classical sampling.
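Reversibility can be illustrated with ordinary bits. The Toffoli gate, a standard building block of reversible arithmetic, computes an AND into a spare target bit while keeping both inputs, so nothing is erased. The sketch below checks this classically over all eight inputs; it models the gate's action on basis states only, not on superpositions.

```python
from itertools import product

def toffoli(a, b, c):
    """Flip the target c when both controls are 1; controls pass through unchanged."""
    return a, b, c ^ (a & b)

def irreversible_and(a, b):
    """Ordinary AND: two input bits collapse to one output bit."""
    return a & b

states = list(product((0, 1), repeat=3))
images = [toffoli(*s) for s in states]

# A reversible gate maps distinct inputs to distinct outputs.
print("Toffoli is a bijection:", sorted(images) == sorted(states))
# Applying the gate twice restores every input, so it is its own inverse.
print("Toffoli undoes itself: ", all(toffoli(*toffoli(*s)) == s for s in states))
# The plain AND maps four inputs onto two outputs and cannot be undone.
print("Distinct AND outputs:  ", len({irreversible_and(a, b) for a, b in product((0, 1), repeat=2)}))
```

The price of reversibility is the extra target bit: reversible arithmetic needs additional qubits and gates, which contributes to the circuit depths discussed below.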

Catastrophe risk modeling often relies on computationally intensive simulations to estimate the probability and impact of extreme events. Recent research indicates a promising path toward acceleration by integrating efficient discretization strategies – techniques that simplify complex problems into manageable steps – with quantum algorithms like Quantum Amplitude Estimation (QAE). This combination allows for a potentially significant reduction in the computational resources needed to assess risk. By cleverly representing continuous risk factors with discrete values, and then leveraging QAE’s ability to speed up the estimation of probabilities, simulations that previously took hours or days could be dramatically shortened. The approach unlocks new possibilities for more granular and frequent risk assessments, ultimately enabling insurance companies and policymakers to better prepare for, and mitigate the effects of, catastrophic events.

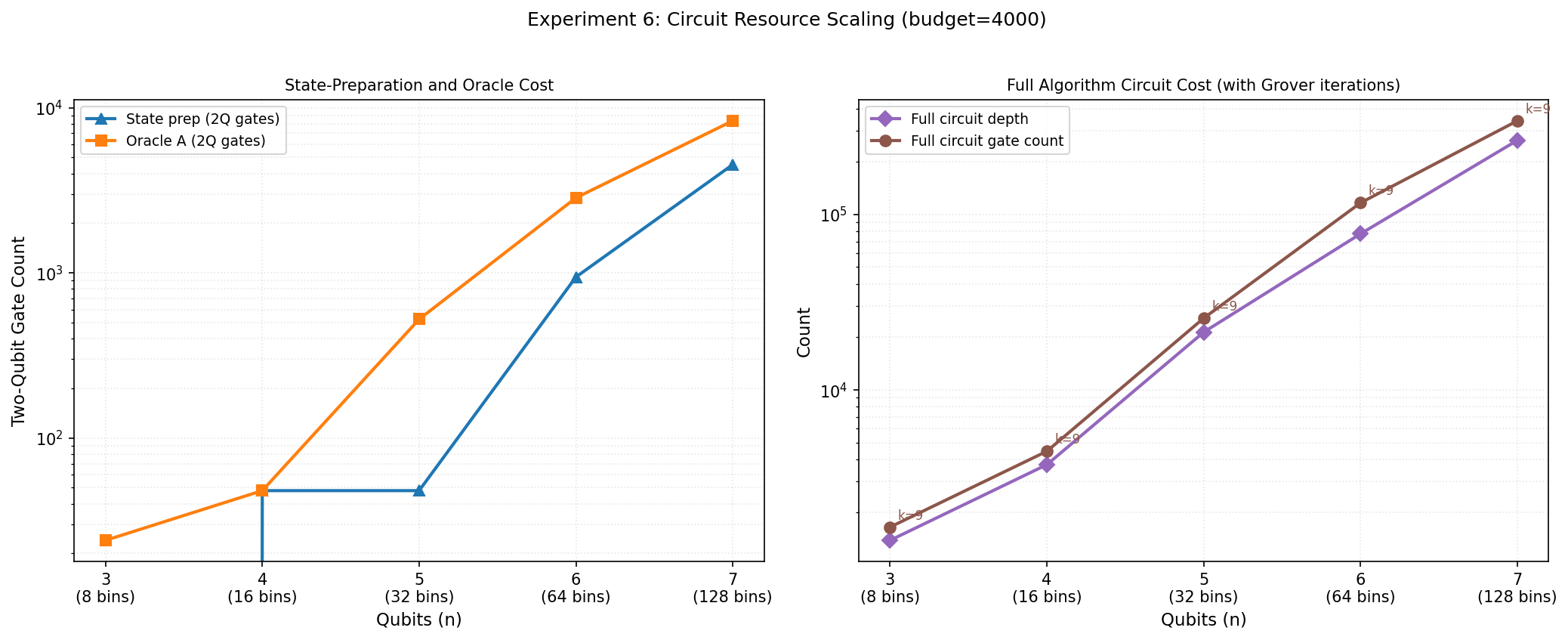

The successful execution of Quantum Amplitude Estimation (QAE) on a quantum circuit comprising 8 qubits represents a significant milestone in the pursuit of quantum solutions for complex financial modeling. This achievement, while currently limited by the constraints of available quantum hardware, highlights the inherent scalability of the QAE algorithm and its potential to outperform classical Monte Carlo methods. The ability to reliably perform calculations with this number of qubits demonstrates the feasibility of encoding and processing increasingly intricate risk scenarios within a quantum framework. Though challenges remain in expanding qubit counts and maintaining coherence, this result provides compelling evidence that quantum computing could fundamentally transform catastrophe risk assessment by enabling substantially faster and more accurate calculations of extreme events.

The research reports an oracle depth of 21,150 on a quantum system using seven qubits. Oracle depth counts the sequential layers of gates in the oracle circuit, so it measures the cost of encoding the problem rather than the power of the algorithm: every additional layer gives hardware noise another opportunity to corrupt the quantum state. A depth of this size on only seven qubits shows how quickly the reversible arithmetic behind even a small catastrophe model adds up, and it helps explain why realizing QAE’s advantage in practice depends on cheaper oracle constructions and on hardware that can sustain much longer computations than current NISQ devices.
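A back-of-the-envelope model shows why depth matters on noisy hardware. If each circuit layer fails independently with some small probability, the chance that the whole circuit runs cleanly is (1 - error)^depth. This independence model and the error rates below are simplifying assumptions, not measurements from the paper; only the depth of 21,150 comes from the article.

```python
import math

def circuit_success_probability(depth, error_per_layer):
    """Probability that no layer fails, assuming independent errors per layer."""
    return (1.0 - error_per_layer) ** depth

def max_depth_for_success(target_success, error_per_layer):
    """Largest depth whose success probability stays at or above the target."""
    return math.floor(math.log(target_success) / math.log(1.0 - error_per_layer))

ORACLE_DEPTH = 21_150  # oracle depth reported in the article

for err in (1e-3, 1e-4, 1e-5):  # hypothetical per-layer error rates
    p_ok = circuit_success_probability(ORACLE_DEPTH, err)
    limit = max_depth_for_success(0.5, err)
    print(f"error/layer {err:.0e}: success at depth {ORACLE_DEPTH:,} = {p_ok:.2e}, "
          f"depth for 50% success = {limit:,}")
```

Under this crude model, a circuit of that depth only becomes reliable once per-layer error rates fall well below one in ten thousand, which is consistent with the article's emphasis on fault tolerance.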

Despite current limitations in building and scaling quantum hardware, the application of quantum computing to catastrophe insurance promises a significant leap in risk assessment capabilities. Traditional methods struggle with the complexity of modeling correlated extreme events, often relying on computationally expensive Monte Carlo simulations. Quantum algorithms, such as Quantum Amplitude Estimation (QAE), offer the theoretical potential to accelerate these calculations, enabling more granular and accurate estimations of tail risk – the probability of rare, high-impact events. This improved modeling could lead to better reinsurance pricing, more effective capital allocation for insurers, and ultimately, a more resilient financial system against increasingly frequent and severe natural disasters. While fully realizing this potential requires overcoming substantial engineering hurdles, ongoing research suggests that quantum computing could fundamentally transform how catastrophe risk is understood and managed.

The pursuit of ever-more-precise catastrophe modeling, as demonstrated by the application of quantum amplitude estimation, reveals a fundamental truth: systems designed for absolute accuracy are ultimately brittle. This work, achieving a 2-3.7x reduction in estimation error, isn’t a destination, but a step further along a path inevitably marked by discretization error and NISQ noise. As Jean-Jacques Rousseau observed, “The more we are accustomed to order, the more difficult it is to endure disorder.” The very refinement sought in tail risk pricing creates a more sensitive system, amplifying the impact of inevitable imperfections. A system that never breaks is, indeed, a dead one; the value lies not in eliminating risk, but in understanding and adapting to it.

The Horizon Beckons

The pursuit of speed in risk assessment, as demonstrated by this work, invariably reveals the sluggishness of the foundations. A 2-3.7x reduction in estimation error is not a victory over uncertainty, but merely a clearer view of its shape. Each algorithmic refinement, each quantum acceleration, serves only to expose the limits of discretization, the necessary falsehoods upon which all modeling rests. The problem isn’t simply to calculate faster, but to acknowledge that every calculation is, at its heart, a controlled hallucination.

The promise of quantum amplitude estimation lies not in a perfected price, but in a more efficient exploration of the impossible space of catastrophic events. Practical realization demands not just better qubits, but a reimagining of how risk is even defined. The architecture will shift, inevitably, from elegant algorithms to sprawling systems of error mitigation and data calibration, a prophecy of operational complexity fulfilled.

The field will not converge on a solution, but radiate outward toward a multitude of specialized approximations. Order is, after all, just a temporary cache between failures. The true horizon isn’t fault-tolerance, but the acceptance that even the most robust model is a transient illusion in the face of genuine chaos.

Original article: https://arxiv.org/pdf/2603.15664.pdf

Contact the author: https://www.linkedin.com/in/avetisyan/


2026-03-18 16:31